Chapter: Distributed and Cloud Computing: From Parallel Processing to the Internet of Things : Virtual Machines and Virtualization of Clusters and Data Centers

Virtualization Structures/Tools and Mechanisms


In general, there are three typical classes of VM architecture. Figure 3.1 showed the architectures of a machine before and after virtualization. Before virtualization, the operating system manages the hardware. After virtualization, a virtualization layer is inserted between the hardware and the operating system. In such a case, the virtualization layer is responsible for converting portions of the real hardware into virtual hardware. Therefore, different operating systems such as Linux and Windows can run on the same physical machine simultaneously. Depending on the position of the virtualization layer, there are several classes of VM architectures, namely the hypervisor architecture, para-virtualization, and host-based virtualization. The hypervisor is also known as the VMM (Virtual Machine Monitor). They both perform the same virtualization operations.

1. Hypervisor and Xen Architecture

The hypervisor supports hardware-level virtualization (see Figure 3.1(b)) on bare metal devices like CPU, memory, disk and network interfaces. The hypervisor software sits directly between the physical hardware and its OS. This virtualization layer is referred to as either the VMM or the hypervisor. The hypervisor provides hypercalls for the guest OSes and applications. Depending on the functionality, a hypervisor can assume a micro-kernel architecture like the Microsoft Hyper-V. Or it can assume a monolithic hypervisor architecture like the VMware ESX for server virtualization.

A micro-kernel hypervisor includes only the basic and unchanging functions (such as physical memory management and processor scheduling). The device drivers and other changeable components are outside the hypervisor. A monolithic hypervisor implements all the aforementioned functions, including those of the device drivers. Therefore, the size of the hypervisor code of a micro-kernel hypervisor is smaller than that of a monolithic hypervisor. Essentially, a hypervisor must be able to convert physical devices into virtual resources dedicated for the deployed VM to use.

1.1

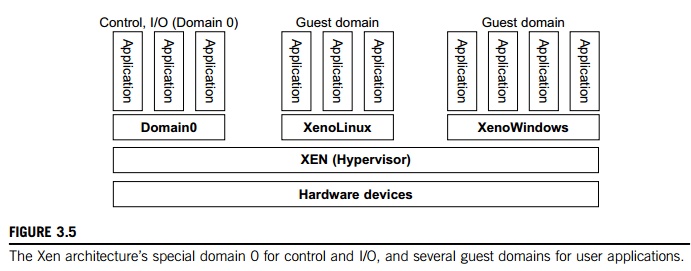

The Xen Architecture

Xen is an open source hypervisor program developed by Cambridge University. Xen is a micro-kernel hypervisor, which separates the policy from the mechanism. The Xen hypervisor implements all the mechanisms, leaving the policy to be handled by Domain 0, as shown in Figure 3.5. Xen does not include any device drivers natively [7]. It just provides a mechanism by which a guest OS can have direct access to the physical devices. As a result, the size of the Xen hypervisor is kept rather small. Xen provides a virtual environment located between the hardware and the OS. A number of vendors are in the process of developing commercial Xen hypervisors, among them Citrix XenServer [62] and Oracle VM [42].

The core components of a Xen system are the hypervisor, kernel, and applications. The organization of the three components is important. Like other virtualization systems, many guest OSes can run on top of the hypervisor. However, not all guest OSes are created equal, and one in particular controls the others. The guest OS that has control ability is called Domain 0, and the others are called Domain U. Domain 0 is a privileged guest OS of Xen. It is first loaded when Xen boots without any file system drivers being available. Domain 0 is designed to access hardware directly and manage devices. Therefore, one of the responsibilities of Domain 0 is to allocate and map hardware resources for the guest domains (the Domain U domains).
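The split between a privileged Domain 0 and unprivileged Domain U guests can be sketched as a toy model. This is only an illustration under stated assumptions: the `Domain` and `ToyHypervisor` classes, the `create_domain` method, and the memory figures are hypothetical and are not part of Xen's real interface.

```python
class Domain:
    def __init__(self, dom_id, name):
        self.dom_id = dom_id
        self.name = name
        self.memory_mb = 0

    @property
    def privileged(self):
        # In this toy model only Domain 0 carries management privileges.
        return self.dom_id == 0


class ToyHypervisor:
    """Hypothetical mechanism layer; Domain 0 decides the policy."""

    def __init__(self, total_memory_mb):
        self.free_memory_mb = total_memory_mb
        # Domain 0 is loaded first, before any guest domain exists.
        self.domains = {0: Domain(0, "Domain-0")}

    def create_domain(self, caller, name, memory_mb):
        # Only the privileged domain may allocate resources for guests.
        if not caller.privileged:
            raise PermissionError("only Domain 0 may create guest domains")
        if memory_mb > self.free_memory_mb:
            raise MemoryError("not enough free memory")
        dom_id = max(self.domains) + 1
        guest = Domain(dom_id, name)
        guest.memory_mb = memory_mb
        self.free_memory_mb -= memory_mb
        self.domains[dom_id] = guest
        return guest


hv = ToyHypervisor(total_memory_mb=4096)
dom0 = hv.domains[0]
guest = hv.create_domain(dom0, "guest-linux", 1024)  # allowed: caller is Domain 0
```

Calling `hv.create_domain(guest, ...)` from the unprivileged guest raises `PermissionError` in this sketch, which mirrors the point that only Domain 0 manages resources for the Domain U guests.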

For example, Xen is based on Linux and its security level is C2. Its management VM is named Domain 0, which has the privilege to manage other VMs implemented on the same host. If Domain 0 is compromised, the hacker can control the entire system. So, in the VM system, security policies are needed to improve the security of Domain 0. Domain 0, behaving as a VMM, allows users to create, copy, save, read, modify, share, migrate, and roll back VMs as easily as manipulating a file, which provides tremendous benefits for users. Unfortunately, it also brings a series of security problems during the software life cycle and data lifetime.

Traditionally, a machine’s lifetime can be envisioned as a straight line where the current state of the machine is a point that progresses monotonically as the software executes. During this time, configuration changes are made, software is installed, and patches are applied. In a virtualized environment, by contrast, the VM state is akin to a tree: at any point, execution can go into N different branches, and multiple instances of a VM can exist at any point in this tree at any given time. VMs are allowed to roll back to previous states in their execution (e.g., to fix configuration errors) or rerun from the same point many times (e.g., as a means of distributing dynamic content or circulating a “live” system image).
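The tree-shaped VM state can be made concrete with a small data structure. The sketch below is a minimal illustration, not how any particular VMM stores snapshots; the `Snapshot` class and all labels are hypothetical.

```python
class Snapshot:
    """One saved VM state; children are states that branched from it."""

    def __init__(self, label, parent=None):
        self.label = label
        self.parent = parent
        self.children = []
        if parent is not None:
            parent.children.append(self)

    def lineage(self):
        """Return the labels from the root down to this snapshot."""
        node, path = self, []
        while node is not None:
            path.append(node.label)
            node = node.parent
        return list(reversed(path))


base = Snapshot("fresh-install")
patched = Snapshot("patched", parent=base)
misconfigured = Snapshot("bad-config", parent=patched)

# Roll back: resume from "patched" and branch in a different direction.
fixed = Snapshot("good-config", parent=patched)

# Rerun from the same point many times: several instances share one ancestor.
clones = [Snapshot(f"clone-{i}", parent=base) for i in range(3)]
```

Here `patched` ends up with two children, the abandoned `bad-config` branch and the `good-config` branch, so the history is no longer a straight line. That branching is what complicates patching and auditing over the software life cycle.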

2. Binary Translation with Full Virtualization

Depending on implementation technologies, hardware virtualization can be classified into two categories: full virtualization and host-based virtualization. Full virtualization does not need to modify the host OS. It relies on binary translation to trap and to virtualize the execution of certain sensitive, nonvirtualizable instructions. The guest OSes and their applications consist of noncritical and critical instructions. In a host-based system, both a host OS and a guest OS are used. A virtualization software layer is built between the host OS and guest OS. These two classes of VM architecture are introduced next.

2.1 Full Virtualization

With full virtualization, noncritical instructions run on the hardware directly while critical instructions are discovered and replaced with traps into the VMM to be emulated by software. Both the hypervisor and VMM approaches are considered full virtualization. Why are only critical instructions trapped into the VMM? This is because binary translation can incur a large performance overhead. Noncritical instructions do not control hardware or threaten the security of the system, but critical instructions do. Therefore, running noncritical instructions on hardware not only can promote efficiency, but also can ensure system security.

2.2

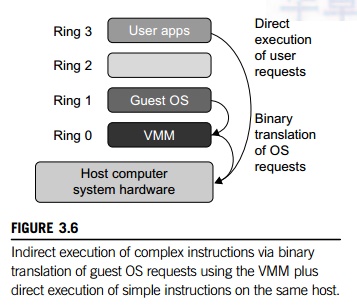

Binary Translation of Guest OS Requests Using a VMM

This approach was implemented by VMware and many other software companies. As shown in Figure 3.6, VMware puts the VMM at Ring 0 and the guest OS at Ring 1. The VMM scans the instruction stream and identifies the privileged, control- and behavior-sensitive instructions. When these instructions are identified, they are trapped into the VMM, which emulates the behavior of these instructions. The method used in this emulation is called binary translation. Therefore, full virtualization combines binary translation and direct execution. The guest OS is completely decoupled from the underlying hardware. Consequently, the guest OS is unaware that it is being virtualized.
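The combination of scanning, trapping, and direct execution can be sketched with a toy instruction stream. This is a simplified model under stated assumptions, not VMware's implementation: instructions are plain strings, the `SENSITIVE` set is a small illustrative subset, and `ToyVMM` is a hypothetical class.

```python
# Illustrative subset of sensitive x86 instructions; a real VMM handles many more.
SENSITIVE = {"cli", "sti", "popf", "mov_cr3"}


class ToyVMM:
    def __init__(self):
        self.emulated = []  # sensitive instructions handled in software
        self.direct = []    # instructions passed through unchanged

    def translate(self, block):
        """Scan a block and rewrite sensitive instructions as traps."""
        return [("trap", ins) if ins in SENSITIVE else ("native", ins)
                for ins in block]

    def run(self, block):
        for kind, ins in self.translate(block):
            if kind == "trap":
                self.emulated.append(ins)  # the VMM emulates the effect
            else:
                self.direct.append(ins)    # stands in for direct execution


vmm = ToyVMM()
vmm.run(["add", "cli", "load", "mov_cr3", "store"])
```

After the run, `vmm.emulated` holds only `cli` and `mov_cr3`, while the three ordinary instructions went straight through. The guest code itself never changed, which is why the guest OS remains unaware that it is being virtualized.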

The performance of full virtualization may not be ideal, because it involves binary translation, which is rather time-consuming. In particular, the full virtualization of I/O-intensive applications is really a big challenge. Binary translation employs a code cache to store translated hot instructions to improve performance, but it increases the cost of memory usage. At the time of this writing, the performance of full virtualization on the x86 architecture is typically 80 percent to 97 percent that of the host machine.
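The trade-off of the code cache, fewer translations in exchange for more memory, can be shown with a minimal sketch. The `TranslationCache` class, the guest address `0x4000`, and the placeholder `slow_translate` function are all hypothetical and only stand in for a real translator.

```python
class TranslationCache:
    """Caches translated blocks keyed by guest address (hypothetical layout)."""

    def __init__(self, translate):
        self._translate = translate
        self._cache = {}
        self.hits = 0
        self.misses = 0

    def lookup(self, guest_addr, block):
        if guest_addr in self._cache:
            self.hits += 1
        else:
            # Translate once, then reuse the result on every later visit.
            self.misses += 1
            self._cache[guest_addr] = self._translate(block)
        return self._cache[guest_addr]

    def size(self):
        # Number of cached blocks: the extra memory cost of the cache.
        return len(self._cache)


def slow_translate(block):
    # Placeholder for an expensive binary translation step.
    return [ins.upper() for ins in block]


cache = TranslationCache(slow_translate)
hot_loop = ("cmp", "jne", "popf")
for _ in range(1000):
    translated = cache.lookup(0x4000, hot_loop)
```

The hot loop is translated once and served from the cache on the remaining 999 iterations. Every distinct block that is cached adds to `cache.size()`, which is the memory cost the paragraph refers to.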

2.3 Host-Based Virtualization

An alternative VM architecture is to install a virtualization layer on top of the host OS. This host OS is still responsible for managing the hardware. The guest OSes are installed and run on top of the virtualization layer. Dedicated applications may run on the VMs. Certainly, some other applications can also run with the host OS directly. This host-based architecture has some distinct advantages, as enumerated next. First, the user can install this VM architecture without modifying the host OS. The virtualizing software can rely on the host OS to provide device drivers and other low-level services. This will simplify the VM design and ease its deployment.

Second, the host-based approach appeals to many host machine configurations. Compared to the hypervisor/VMM architecture, however, the performance of the host-based architecture may be low. When an application requests hardware access, it involves four layers of mapping, which downgrades performance significantly. When the ISA of a guest OS is different from the ISA of the underlying hardware, binary translation must be adopted. Although the host-based architecture has flexibility, the performance is too low to be useful in practice.

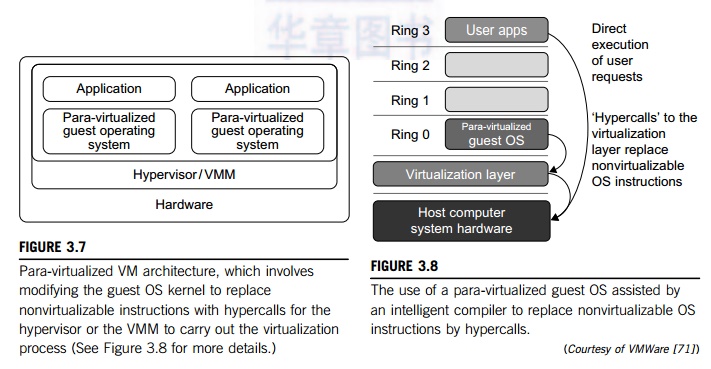

3. Para-Virtualization with Compiler Support

Para-virtualization needs to modify the guest operating systems. A para-virtualized VM provides special APIs requiring substantial OS modifications in user applications. Performance degradation is a critical issue of a virtualized system. No one wants to use a VM if it is much slower than using a physical machine. The virtualization layer can be inserted at different positions in a machine software stack. However, para-virtualization attempts to reduce the virtualization overhead, and thus improve performance by modifying only the guest OS kernel.

Figure 3.7 illustrates the concept of a para-virtualized VM architecture. The guest operating systems are para-virtualized. They are assisted by an intelligent compiler to replace the nonvirtualizable OS instructions by hypercalls as illustrated in Figure 3.8. The traditional x86 processor offers four instruction execution rings: Rings 0, 1, 2, and 3. The lower the ring number, the higher the privilege of the instruction being executed. The OS is responsible for managing the hardware, and its privileged instructions execute at Ring 0, while user-level applications run at Ring 3. The best example of para-virtualization is the KVM to be described below.
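The ring rule described above can be illustrated with a short privilege check. This is a rough sketch rather than a model of real hardware behavior: the `Ring` enum, the `execute` function, and the three-instruction `PRIVILEGED` set are assumptions chosen for illustration.

```python
from enum import IntEnum


class Ring(IntEnum):
    RING0 = 0  # most privileged: the OS kernel, or the VMM once virtualized
    RING1 = 1
    RING2 = 2
    RING3 = 3  # least privileged: user-level applications


# Illustrative subset of x86 instructions that require Ring 0.
PRIVILEGED = {"hlt", "lgdt", "mov_cr3"}


def execute(ring, instruction):
    """Allow privileged instructions only at Ring 0; fault otherwise."""
    if instruction in PRIVILEGED and ring != Ring.RING0:
        # Stands in for the protection fault raised by real hardware.
        raise PermissionError(f"{instruction} not allowed at ring {int(ring)}")
    return f"{instruction} executed at ring {int(ring)}"


kernel_result = execute(Ring.RING0, "hlt")
user_result = execute(Ring.RING3, "add")
```

In this sketch, a guest kernel moved to Ring 1 would hit the `PermissionError` path for `hlt`. That situation is what para-virtualization avoids by replacing such instructions with hypercalls.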

3.1 Para-Virtualization Architecture

When the x86 processor is virtualized, a virtualization layer is inserted between the hardware and the OS. According to the x86 ring definition, the virtualization layer should also be installed at Ring 0. Different instructions at Ring 0 may cause some problems. In Figure 3.8, we show that para-virtualization replaces nonvirtualizable instructions with hypercalls that communicate directly with the hypervisor or VMM. However, when the guest OS kernel is modified for virtualization, it can no longer run on the hardware directly.

Although para-virtualization reduces the overhead, it has incurred other problems. First, its compatibility and portability may be in doubt, because it must support the unmodified OS as well. Second, the cost of maintaining para-virtualized OSes is high, because they may require deep OS kernel modifications. Finally, the performance advantage of para-virtualization varies greatly due to workload variations. Compared with full virtualization, para-virtualization is relatively easy and more practical. The main problem in full virtualization is its low performance in binary translation. Speeding up binary translation is difficult. Therefore, many virtualization products employ the para-virtualization architecture. The popular Xen, KVM, and VMware ESX are good examples.

3.2 KVM (Kernel-Based VM)

This is a Linux para-virtualization system that is part of the Linux version 2.6.20 kernel. Memory management and scheduling activities are carried out by the existing Linux kernel. The KVM does the rest, which makes it simpler than the hypervisor that controls the entire machine. KVM is a hardware-assisted para-virtualization tool, which improves performance and supports unmodified guest OSes such as Windows, Linux, Solaris, and other UNIX variants.

3.3 Para-Virtualization with Compiler Support

Unlike the full virtualization architecture which intercepts and emulates privileged and sensitive instructions at runtime, para-virtualization handles these instructions at compile time. The guest OS kernel is modified to replace the privileged and sensitive instructions with hypercalls to the hypervisor or VMM. Xen assumes such a para-virtualization architecture.

The guest OS running in a guest domain may run at Ring 1 instead of at Ring 0. This implies that the guest OS may not be able to execute some privileged and sensitive instructions. The privileged instructions are implemented by hypercalls to the hypervisor. After replacing the instructions with hypercalls, the modified guest OS emulates the behavior of the original guest OS. On a UNIX system, a system call involves an interrupt or service routine. The hypercalls apply a dedicated service routine in Xen.
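The compile-time replacement of sensitive instructions by hypercalls can be sketched as a rewrite step followed by a run. This is a toy model under stated assumptions: the hypercall names in `HYPERCALL_FOR` are invented for illustration and are not Xen's real hypercall table, and `ParaHypervisor`, `paravirtualize`, and `run_guest` are hypothetical.

```python
# Hypothetical mapping; these names are not Xen's real hypercalls.
HYPERCALL_FOR = {
    "mov_cr3": "hypercall_update_page_table",
    "cli": "hypercall_mask_events",
}


def paravirtualize(kernel_source):
    """Rewrite the kernel ahead of time, standing in for the compile-time step."""
    return [HYPERCALL_FOR.get(ins, ins) for ins in kernel_source]


class ParaHypervisor:
    def __init__(self):
        self.log = []

    def hypercall(self, name):
        # Stands in for the dedicated service routine in the hypervisor.
        self.log.append(name)


def run_guest(kernel, hypervisor):
    """Run a modified kernel: hypercalls go to the hypervisor, the rest run natively."""
    executed = []
    for ins in kernel:
        if ins.startswith("hypercall_"):
            hypervisor.hypercall(ins)
        else:
            executed.append(ins)
    return executed


original = ["add", "mov_cr3", "load", "cli"]
modified = paravirtualize(original)
hv = ParaHypervisor()
native = run_guest(modified, hv)
```

Unlike the runtime scanning used in binary translation, the rewrite here happens once, before the guest ever runs. The price is that `modified` differs from `original`, so the guest kernel has to be changed and maintained separately.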

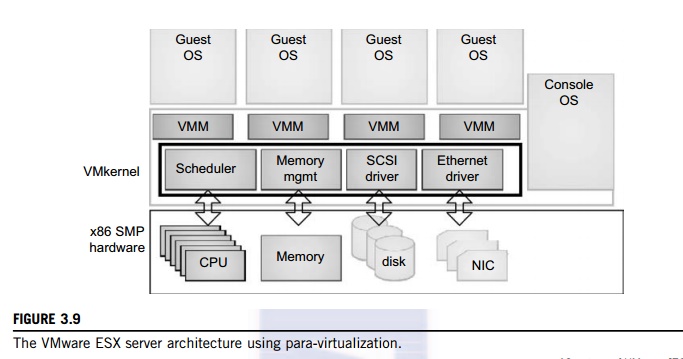

Example 3.3 VMware ESX Server for Para-Virtualization

VMware pioneered the software market for virtualization. The company has developed virtualization tools for desktop systems and servers as well as virtual infrastructure for large data centers. ESX is a VMM or a hypervisor for bare-metal x86 symmetric multiprocessing (SMP) servers. It accesses hardware resources such as I/O directly and has complete resource management control. An ESX-enabled server consists of four components: a virtualization layer, a resource manager, hardware interface components, and a service console, as shown in Figure 3.9. To improve performance, the ESX server employs a para-virtualization architecture in which the VM kernel interacts directly with the hardware without involving the host OS.

The VMM layer virtualizes the physical hardware resources such as CPU, memory, network and disk controllers, and human interface devices. Every VM has its own set of virtual hardware resources. The resource manager allocates CPU, memory, disk, and network bandwidth and maps them to the virtual hardware resource set of each VM created. Hardware interface components are the device drivers and the VMware ESX Server File System. The service console is responsible for booting the system, initiating the execution of the VMM and resource manager, and relinquishing control to those layers. It also facilitates the process for system administrators.
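The role of the resource manager, carving physical resources into per-VM virtual hardware sets, can be sketched as follows. This is not VMware's implementation or API: `ToyResourceManager`, `VirtualHardware`, and the capacity figures are hypothetical, and the sketch assumes vCPUs are time-shared (capped but not reserved) while memory and disk are reserved outright.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualHardware:
    vcpus: int
    memory_mb: int
    disk_gb: int


@dataclass
class ToyResourceManager:
    cpus: int
    memory_mb: int
    disk_gb: int
    vms: dict = field(default_factory=dict)

    def allocate(self, name, vcpus, memory_mb, disk_gb):
        # Assumption: vCPUs are time-shared, so they are capped but not reserved.
        if vcpus > self.cpus:
            raise ValueError("more vCPUs than physical CPUs")
        if memory_mb > self.memory_mb or disk_gb > self.disk_gb:
            raise ValueError("insufficient physical resources")
        # Memory and disk are reserved from the remaining physical pool.
        self.memory_mb -= memory_mb
        self.disk_gb -= disk_gb
        self.vms[name] = VirtualHardware(vcpus, memory_mb, disk_gb)
        return self.vms[name]


manager = ToyResourceManager(cpus=8, memory_mb=16384, disk_gb=500)
web = manager.allocate("web", vcpus=2, memory_mb=4096, disk_gb=100)
db = manager.allocate("db", vcpus=4, memory_mb=8192, disk_gb=200)
```

Each VM receives its own `VirtualHardware` record, matching the statement that every VM has its own set of virtual hardware resources. A further request that exceeds the remaining memory or disk is rejected.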
