Chapter: Embedded Systems Design : Memory systems

Memory management

Memory management used to be the preserve of workstations and PCs, where

it is used to help control and manage the resources within the system. It

inevitably caused memory access delays and extra cost and because of this, was

rarely used in embedded systems. Another reason was that many of the real-time

operating systems did not support it and, without the software support, there

seemed little need to have it within the system. While some form of memory

management can be done in software, memory management is usually implemented

with additional hardware called an MMU (memory management unit) to meet at least

one of four system requirements:

1. The need to extend the current addressing range.

The often perceived need for memory management is usually the result of

prior experience or background, and centres on extending the current linear

addressing range. The Intel 80x86 architecture is based around a 64 kbyte

linear addressing segment which, while providing 8 bit compatibility, does

require memory management to provide the higher order address bits necessary to

extend the processor's address space. Software must track accesses that go

beyond this segment, and change the address accordingly. The M68000 family has

at least a 16 Mbyte addressing range and does not have this restriction. The

PowerPC family has an even larger 4 Gbyte range. The DSP56000 has a 128 kword

(1 word = 24 bits) address space, which is sufficient for most present day

applications. However, the intermittent delays that occur in servicing an MMU

can easily destroy the accuracy of the algorithms. For this reason, the linear

addressing range may increase, but it is unlikely that paged or segmented

addressing will appear in DSP applications.
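
The segment arithmetic described above can be sketched in C. This is a minimal illustration only, assuming the original 8086 real-mode scheme in which a 16 bit segment value is shifted left by four bits and added to a 16 bit offset to give a 20 bit physical address. The names `real_mode_address`, `far_ptr` and `far_ptr_add` are hypothetical and do not come from any Intel header.

```c
#include <stdint.h>

/* Assumption: 8086 real mode, 20 bit physical address bus. */
#define PHYSICAL_MASK 0xFFFFFu

/* Hypothetical segment:offset pair, as software on such a part might keep it. */
typedef struct {
    uint16_t segment;
    uint16_t offset;
} far_ptr;

/* Form the physical address: (segment * 16) + offset, wrapped to 20 bits. */
static uint32_t real_mode_address(uint16_t segment, uint16_t offset)
{
    return (((uint32_t)segment << 4) + offset) & PHYSICAL_MASK;
}

/* Advance a pointer by count bytes. Because the offset alone cannot step
 * past the 64 kbyte segment, the result is re-split ("normalised") so that
 * the segment value carries the higher order address bits. */
static far_ptr far_ptr_add(far_ptr p, uint32_t count)
{
    uint32_t linear = (real_mode_address(p.segment, p.offset) + count)
                      & PHYSICAL_MASK;
    far_ptr result;
    result.segment = (uint16_t)(linear >> 4);
    result.offset  = (uint16_t)(linear & 0xFu);
    return result;
}
```

For example, segment $1234 with offset $0010 gives physical address $12350, and adding one byte to the pointer $1000:$FFFF yields $2000:$0000, which is the bookkeeping that software must otherwise perform by hand whenever an access crosses the segment boundary.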

2. To remove the need to write relocatable or position-independent software.

Many systems have multitasking operating systems where the software

environment consists of modular blocks of code running under the control of an

operating system. There are three ways of allocating memory to these blocks.

The first simply distributes blocks in a pre-defined way, i.e. task A is given

the memory block from $A0000 to $A8000, task B is given from $C0000 to $D8000,

etc. With these addresses, the programmer can write the code to use this

memory. This is fine, providing the distribution does not change and there is

sufficient design discipline to adhere to the plan. However, it does make all

the code hardware and position dependent. If another system has a slightly

different memory configuration, the code will not run correctly.

To overcome this problem, software can be written in such a way that it

is either relocatable or position independent. These two terms are often

interchanged but there is a difference: both can execute anywhere in the memory

map, but relocatable code must maintain the same address offsets between its

data and code segments. The main technique is to avoid the use of absolute

addressing modes, replacing them with relative addressing modes.

If this support is missing or the compiler technology cannot use it,

memory management must be used to translate the logical program addresses and

map them into physical memory. This effectively realigns the memory so that the

processor and software think that the memory is organized specially for them,

but in reality is totally different.
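
The translation from logical to physical addresses can be sketched in C as a simple table lookup. This is an illustrative model, not a description of any particular MMU: the 4 kbyte page size, the 16 entry table and the names `page_map`, `translate`, `task_a_map` and `other_board_map` are all assumptions made for the example.

```c
#include <stdint.h>
#include <stdbool.h>

#define PAGE_SHIFT 12u                  /* assumed 4 kbyte pages */
#define PAGE_SIZE  (1u << PAGE_SHIFT)
#define NUM_PAGES  16u                  /* assumed size of the logical space */
#define NO_MAPPING UINT32_MAX           /* returned when no mapping exists */

/* One entry per logical page: where it really lives in physical memory. */
typedef struct {
    uint32_t frame_base[NUM_PAGES];
    bool     valid[NUM_PAGES];
} page_map;

/* Split the logical address into page number and offset, then replace the
 * page number with the physical base address held in the table. */
static uint32_t translate(const page_map *map, uint32_t logical)
{
    uint32_t page   = logical >> PAGE_SHIFT;
    uint32_t offset = logical & (PAGE_SIZE - 1u);

    if (page >= NUM_PAGES || !map->valid[page]) {
        return NO_MAPPING;
    }
    return map->frame_base[page] + offset;
}

/* Hypothetical board 1: the task's first two pages sit at $A0000 onwards. */
static const page_map task_a_map = {
    .frame_base = { 0xA0000u, 0xA1000u },
    .valid      = { true, true },
};

/* Hypothetical board 2: same logical addresses, scattered physical memory. */
static const page_map other_board_map = {
    .frame_base = { 0x40000u, 0x7F000u },
    .valid      = { true, true },
};
```

In this sketch the task always uses logical address $1234. On the first board it resolves to $A1234 and on the second to $7F234, so the code itself does not need to be relocatable: only the table changes between systems.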

3. To partition the system to protect it from other tasks, users, etc.

To provide stability within a multitasking or multiuser system, it is

advisable to partition the memory so that errors within one task do not corrupt

others. On a more general level, operating system resources may need separating

from applications. The M68000 processor family can provide this partitioning

through the use of the function codes or by the combination of the

user/supervisor signals and Harvard architecture. This partitioning is very

coarse, but is often all that is necessary. For finer grain

protection, memory management can be used to add extra description bits to an

address to declare its status. If a task attempts to access memory that has not

been allocated to it, or its status does not match (e.g. writing to a read only

declared memory location), the MMU can detect it and raise an error to the

supervisor level. This aspect is becoming more important and has even spurred

manufacturers to define stripped down MMUs to provide this type of protection.
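
The kind of check such a protection unit performs can be modelled in C. This is a sketch under stated assumptions: the region table, the permission bits and the names `region`, `check_access` and `task_regions` are hypothetical, and a real MMU makes this comparison in hardware on every bus cycle rather than in a software loop.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdbool.h>

/* Assumed description bits attached to each block of memory. */
enum {
    PERM_READ       = 1u << 0,
    PERM_WRITE      = 1u << 1,
    PERM_SUPERVISOR = 1u << 2   /* only the supervisor level may access */
};

typedef enum {
    ACCESS_OK,
    FAULT_NOT_ALLOCATED,   /* address lies outside every allocated region */
    FAULT_PROTECTION       /* address is allocated but status does not match */
} access_result;

typedef struct {
    uint32_t start;   /* first address in the region */
    uint32_t end;     /* one past the last address in the region */
    uint8_t  perms;
} region;

static access_result check_access(const region *regions, size_t count,
                                  uint32_t addr, bool is_write,
                                  bool is_supervisor)
{
    for (size_t i = 0; i < count; i++) {
        const region *r = &regions[i];
        if (addr < r->start || addr >= r->end) {
            continue;                       /* not this region */
        }
        if ((r->perms & PERM_SUPERVISOR) && !is_supervisor) {
            return FAULT_PROTECTION;
        }
        if (is_write && !(r->perms & PERM_WRITE)) {
            return FAULT_PROTECTION;        /* e.g. write to read only memory */
        }
        if (!is_write && !(r->perms & PERM_READ)) {
            return FAULT_PROTECTION;
        }
        return ACCESS_OK;
    }
    return FAULT_NOT_ALLOCATED;
}

/* Hypothetical layout: read only code, task data, operating system data. */
static const region task_regions[] = {
    { 0x00000u, 0x08000u, PERM_READ },
    { 0xA0000u, 0xA8000u, PERM_READ | PERM_WRITE },
    { 0xF0000u, 0xF1000u, PERM_READ | PERM_WRITE | PERM_SUPERVISOR },
};
#define TASK_REGION_COUNT (sizeof task_regions / sizeof task_regions[0])
```

With this table, a user level write to $A0010 is allowed, a write to $00010 is reported as a protection fault because the region is read only, and any access to $C0000 is reported as not allocated. In a real system each fault would be raised as an exception to the supervisor level rather than returned as a value.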

4. To allow programs to access more memory than is physically present in the system.

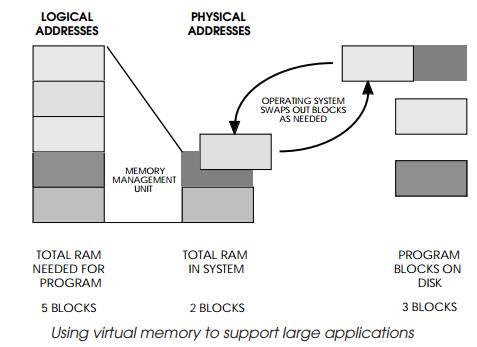

With the large linear addressing offered by today's 32 bit

microprocessors, it is relatively easy to create large software applications

which consume vast quantities of memory. While it may be feasible to install 64

Mbytes of RAM in a workstation, the cost is high compared with a 64

Mbyte Winchester disk. As the memory needs go up, this differential increases.

A solution is to use the disk storage as the main storage medium, divide the

stored program into small blocks and keep only the blocks in the processor

system memory that are needed.

As the program executes, the MMU can track how the program uses the

blocks, and swap them to and from the disk as needed. If a block is not present

in memory, this causes a page fault and forces some exception processing which

performs the swapping operation. In this way, the system appears to have large

amounts of system RAM when, in reality, it does not. This virtual memory

technique is frequently used in workstations and in the UNIX operating system.
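
The page fault mechanism can be illustrated with a small C simulation. It is only a sketch: the text does not say which block is discarded when memory is full, so a simple first-in, first-out choice is assumed here, and the sizes and the names `vm_state`, `vm_access`, `count_page_faults` and `example_refs` are hypothetical. The disk transfers are represented by comments only.

```c
#include <stddef.h>

#define NUM_VIRTUAL_PAGES 8    /* assumed blocks in the stored program */
#define NUM_FRAMES        3    /* assumed blocks that fit in system RAM */
#define NOT_RESIDENT      (-1)

typedef struct {
    int      frame_of_page[NUM_VIRTUAL_PAGES]; /* frame index or NOT_RESIDENT */
    int      page_in_frame[NUM_FRAMES];        /* page index or NOT_RESIDENT */
    int      next_victim;                      /* FIFO replacement pointer */
    unsigned page_faults;
} vm_state;

static void vm_init(vm_state *vm)
{
    for (int p = 0; p < NUM_VIRTUAL_PAGES; p++) vm->frame_of_page[p] = NOT_RESIDENT;
    for (int f = 0; f < NUM_FRAMES; f++)        vm->page_in_frame[f] = NOT_RESIDENT;
    vm->next_victim = 0;
    vm->page_faults = 0;
}

/* Return the frame holding the page, servicing a page fault if needed. */
static int vm_access(vm_state *vm, int page)
{
    if (page < 0 || page >= NUM_VIRTUAL_PAGES) {
        return NOT_RESIDENT;                   /* outside the program */
    }
    if (vm->frame_of_page[page] != NOT_RESIDENT) {
        return vm->frame_of_page[page];        /* already in memory */
    }

    /* Page fault: exception processing performs the swap. */
    vm->page_faults++;
    int frame   = vm->next_victim;
    int evicted = vm->page_in_frame[frame];
    vm->next_victim = (vm->next_victim + 1) % NUM_FRAMES;

    if (evicted != NOT_RESIDENT) {
        /* A real system would write the block back to disk if modified. */
        vm->frame_of_page[evicted] = NOT_RESIDENT;
    }
    /* A real system would now read the requested block from disk. */
    vm->page_in_frame[frame] = page;
    vm->frame_of_page[page]  = frame;
    return frame;
}

/* Run a sequence of page references and report how many faults occurred. */
static unsigned count_page_faults(const int *pages, size_t count)
{
    vm_state vm;
    vm_init(&vm);
    for (size_t i = 0; i < count; i++) {
        (void)vm_access(&vm, pages[i]);
    }
    return vm.page_faults;
}

/* Hypothetical reference sequence used to exercise the model. */
static const int example_refs[] = { 0, 1, 2, 0, 3, 0 };
```

With the three frames assumed here, the reference sequence 0, 1, 2, 0, 3, 0 produces five page faults: three to load the first blocks, one when block 3 displaces block 0, and one more when block 0 is needed again. The program sees eight blocks of memory even though only three are ever resident at once.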
