Chapter: Artificial Intelligence

Back Propagation neural network

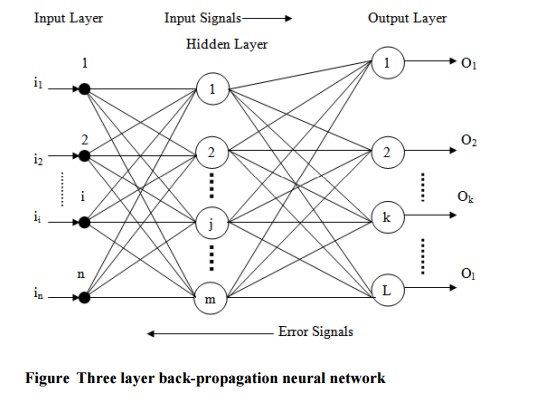

Multilayer neural networks can be trained with a variety of learning techniques, the most common of which is the back propagation algorithm. In a back propagation neural network, the output values are compared with the correct answer to compute the value of some predefined error function. The error is then fed back through the network, and the algorithm uses this information to adjust the weight of each connection so as to reduce the value of the error function by a small amount. After this process has been repeated for a sufficiently large number of training cycles, the network usually converges to a state where the error of the calculation is small.
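To make the repeat-and-correct loop concrete, here is a toy sketch in Python. It assumes a single linear neuron y = w * x, one training example and a squared-error function; the function name `train_single_weight` and its parameters are hypothetical. There are no hidden units, so nothing is actually back-propagated here. The sketch only shows how repeated small weight corrections drive the error down.

```python
# Toy illustration (hypothetical helper): a single linear neuron y = w * x
# trained on one example with the squared error E = 0.5 * (target - y)^2.

def train_single_weight(x, target, w=0.0, learning_rate=0.5, cycles=10):
    """Return the errors seen before each update and the final weight."""
    errors = []
    for _ in range(cycles):
        output = w * x                    # forward pass
        error = target - output           # compare with the correct answer
        errors.append(0.5 * error ** 2)   # value of the error function
        w += learning_rate * error * x    # small step that reduces the error
    return errors, w


errors, w = train_single_weight(x=1.0, target=2.0)
print(errors[:3])    # → [2.0, 0.5, 0.125]
print(round(w, 4))   # → 1.998
```

With these values each update removes half of the remaining error, because learning_rate * x**2 = 0.5. If learning_rate * x**2 were above 2, each step would overshoot by more than it corrects and the error would grow instead.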

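The goal of back propagation, as with most training algorithms, is to iteratively adjust the weights in the network so that it produces the desired output, by minimizing the output error. The algorithm's goal is to solve the credit assignment problem: deciding how much each weight, including the weights of hidden units that have no target values of their own, contributed to the error. Back propagation is a gradient-descent method: it uses the first-order derivatives of the error function with respect to the weights to move step by step toward a minimum of the error (not necessarily the global one). The standard back propagation algorithm is given below.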
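A sketch of the gradient-descent weight update follows. It assumes the common squared-error cost summed over the output neurons and a learning-rate parameter $\eta$; both are assumptions, since the text does not fix a particular error function. Here $e_k(x) = d_k(x) - y_k(x)$ is the error of output neuron $k$ at iteration $x$ ($d_k$ desired output, $y_k$ actual output) and $w_{ji}$ is the weight from unit $i$ to unit $j$:

$$
E(x) = \frac{1}{2}\sum_{k} e_k^2(x), \qquad \Delta w_{ji}(x) = -\eta\,\frac{\partial E(x)}{\partial w_{ji}(x)}
$$

Only the first derivatives $\partial E / \partial w_{ji}$ are required, which is why back propagation counts as a first-order method.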

Step 1:
Build a network with the chosen number of input, hidden and output units.

Step 2:
Initialize all the weights to small random values.

Step 3:
Choose a single training pair at random.

Step 4:
Copy the input pattern to the input layer.

Step 5:
Propagate the input forward through the network so that the activations of the input units generate the activations in the hidden and output layers.

Step 6:
Calculate the error between the output activation and the target (desired) output, together with its derivative.

Step 7:
Propagate the error backward: compute the error of each hidden unit from the sum of the products of the output-layer errors and the weights connecting that hidden unit to the output layer.

Step 8:
Update the weights attached to each unit according to the error in that unit, the output of the unit below it and the learning parameters. Repeat from Step 3 until the error is sufficiently low.
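The eight steps can be sketched in plain Python as follows. This is a minimal illustration, not a reference implementation. It assumes sigmoid activations, a squared-error cost, one bias weight per unit and a fixed learning rate. The names `ThreeLayerNet`, `gradients`, `train_step` and `squared_error` are hypothetical. The XOR patterns in the usage lines are only an example data set, and a fixed number of iterations stands in for the "error is sufficiently low" test of Step 8.

```python
import math
import random


def sigmoid(v):
    """Logistic activation; its derivative is sigmoid(v) * (1 - sigmoid(v))."""
    return 1.0 / (1.0 + math.exp(-v))


class ThreeLayerNet:
    """Input -> hidden -> output network with sigmoid units (hypothetical sketch)."""

    def __init__(self, n_in, n_hidden, n_out, seed=0):
        # Steps 1-2: build the layers and initialize weights to small random values.
        rng = random.Random(seed)
        # Row j holds the weights into unit j; the last entry is a bias weight.
        self.w_hidden = [[rng.uniform(-0.5, 0.5) for _ in range(n_in + 1)]
                         for _ in range(n_hidden)]
        self.w_out = [[rng.uniform(-0.5, 0.5) for _ in range(n_hidden + 1)]
                      for _ in range(n_out)]

    def forward(self, inputs):
        # Steps 4-5: copy the pattern to the input layer and propagate it forward.
        inp = list(inputs) + [1.0]  # constant 1.0 feeds the bias weights
        hid = [sigmoid(sum(w * a for w, a in zip(row, inp)))
               for row in self.w_hidden] + [1.0]
        out = [sigmoid(sum(w * a for w, a in zip(row, hid))) for row in self.w_out]
        return inp, hid, out

    def gradients(self, inputs, targets):
        """Return dE/dw for both layers, with E = 0.5 * sum((target - output)^2)."""
        inp, hid, out = self.forward(inputs)
        # Step 6: output error times the sigmoid derivative gives the output delta.
        delta_out = [(t - y) * y * (1.0 - y) for t, y in zip(targets, out)]
        # Step 7: each hidden delta uses the summed products of output deltas and weights.
        delta_hid = [hid[j] * (1.0 - hid[j]) *
                     sum(d * row[j] for d, row in zip(delta_out, self.w_out))
                     for j in range(len(self.w_hidden))]
        g_out = [[-d * a for a in hid] for d in delta_out]
        g_hidden = [[-d * a for a in inp] for d in delta_hid]
        return g_hidden, g_out

    def train_step(self, inputs, targets, learning_rate=0.5):
        # Step 8: move every weight a small step against its error gradient.
        g_hidden, g_out = self.gradients(inputs, targets)
        for weights, grads in ((self.w_hidden, g_hidden), (self.w_out, g_out)):
            for row, grow in zip(weights, grads):
                for i, g in enumerate(grow):
                    row[i] -= learning_rate * g


def squared_error(net, inputs, targets):
    _, _, out = net.forward(inputs)
    return 0.5 * sum((t - y) ** 2 for t, y in zip(targets, out))


# Example usage on the XOR patterns (an illustrative data set).
data = [([0, 0], [0]), ([0, 1], [1]), ([1, 0], [1]), ([1, 1], [0])]
net = ThreeLayerNet(n_in=2, n_hidden=4, n_out=1, seed=1)
rng = random.Random(42)
for _ in range(5000):
    inputs, targets = rng.choice(data)  # Step 3: choose one training pair at random
    net.train_step(inputs, targets)
print(sum(squared_error(net, i, t) for i, t in data))
```

All gradients are computed before any weight changes, so Step 7 uses the same output-layer weights that produced the output error in Step 6.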

To derive the back propagation algorithm, let us consider the three-layer network shown in the figure.

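To propagate error signals, we start at the output layer and work backward to the hidden layer. The error signal at the output of neuron k at iteration x is defined as

$$e_k(x) = d_k(x) - y_k(x)$$

where $d_k(x)$ is the desired (target) response of neuron k and $y_k(x)$ is its actual output.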
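The rest of the derivation can be sketched under the usual assumptions: a squared-error cost, a differentiable activation function $\varphi$, and $v_j(x)$ denoting the weighted sum of the inputs to neuron $j$. This notation is assumed and is not taken from the figure. With $e_k(x) = d_k(x) - y_k(x)$, $i$ indexing input units (so $y_i(x)$ is input $i$), $j$ hidden neurons, $k$ output neurons and $\eta$ the learning rate, the chain rule gives:

$$
\begin{aligned}
E(x) &= \tfrac{1}{2}\sum_k e_k^2(x) \\
\delta_k(x) &= -\frac{\partial E(x)}{\partial v_k(x)} = e_k(x)\,\varphi'\bigl(v_k(x)\bigr) \\
\delta_j(x) &= -\frac{\partial E(x)}{\partial v_j(x)} = \varphi'\bigl(v_j(x)\bigr)\sum_k \delta_k(x)\,w_{kj}(x) \\
\Delta w_{kj}(x) &= \eta\,\delta_k(x)\,y_j(x), \qquad \Delta w_{ji}(x) = \eta\,\delta_j(x)\,y_i(x)
\end{aligned}
$$

The hidden-neuron term $\sum_k \delta_k w_{kj}$ is the sum of products of output-layer errors and weights described in Step 7. For the logistic sigmoid, $\varphi'(v) = y(1 - y)$, where $y = \varphi(v)$.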

Computational learning theory is generally concerned with training classifiers on a limited amount of data. In the context of neural networks, a simple heuristic called early stopping often helps the network generalize well to examples not in the training set. The back propagation algorithm also has known problems, such as slow convergence and the possibility of ending up in a local minimum of the error function. Today, however, a variety of practical remedies exist that make back propagation in multilayer perceptrons the method of choice for many machine learning tasks.
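Early stopping can be sketched as follows. The sketch assumes the network's error is measured on a held-out validation set after every training epoch. The function name, the `patience` parameter and the loss values in the example are hypothetical. Training stops once the validation error has failed to improve for `patience` consecutive epochs, and the weights from the best epoch are kept.

```python
def early_stopping_epoch(validation_losses, patience=3):
    """Return the index of the epoch whose weights should be kept.

    Scans per-epoch validation losses in order and stops as soon as the loss
    has not improved for `patience` consecutive epochs.
    """
    best_epoch, best_loss, epochs_without_improvement = 0, float("inf"), 0
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss:
            # New best: remember this epoch and reset the counter.
            best_epoch, best_loss, epochs_without_improvement = epoch, loss, 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # validation error has stopped improving; stop training
    return best_epoch


# Hypothetical validation losses: falling, then rising as the network overfits.
losses = [0.90, 0.60, 0.45, 0.40, 0.41, 0.43, 0.47, 0.30]
print(early_stopping_epoch(losses))  # → 3
```

In a real training loop the check would run after each epoch rather than over a precomputed list, so the last epoch above would never be trained. It is included only to show that values after the stopping point are ignored.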
