Chapter: Artificial Intelligence

Search and Control Strategies

Search and Control Strategies:

Problem

solving is an important aspect of Artificial Intelligence. A problem can be

considered to consist of a goal and a set of actions that can be taken to lead

to the goal. At any given time, we consider the state of the search space to

represent where we have reached as a result of the actions we have applied so

far. For example, consider the problem of looking for a contact lens on a

football field. The initial state is how we start out, which is to say we know

that the lens is somewhere on the field, but we don’t know where. If we use the

representation where we examine the field in units of one square foot, then our

first action might be to examine the square in the top-left corner of the

field. If we do not find the lens there, we could consider the state now to be

that we have examined the top-left square and have not found the lens. After a

number of actions, the state might be that we have examined 500 squares, and we

have now just found the lens in the last square we examined. This is a goal

state because it satisfies the goal that we had of finding a contact lens.

Search is

a method that can be used by computers to examine a problem space like this in

order to find a goal. Often, we want to find the goal as quickly as possible or

without using too many resources. A problem space can also be considered to be

a search space because in order to solve the problem, we will search the space

for a goal state.We will continue to use the term search space to describe this

concept. In this chapter, we will look at a number of methods for examining a

search space. These methods are called search methods.

The

Importance of Search in AI

It has already become clear that many of the tasks

underlying AI can be phrased in terms of a search for the solution to the

problem at hand.

Many goal based agents are essentially problem

solving agents which must decide what to do by searching for a sequence of

actions that lead to their solutions.

For production systems, we have seen the need to

search for a sequence of rule applications that lead to the required fact or

action.

For neural network systems, we need to search for

the set of connection weights that will result in the required input to output

mapping.

Which

search algorithm one should use will generally depend on the problem domain?

There are four important factors to consider:

Completeness – Is a solution guaranteed to be found

if at least one solution exists?

Optimality – Is the solution found guaranteed to be

the best (or lowest cost) solution if there exists more than one solution?

Time Complexity – The upper bound on the time required

to find a solution, as a function of the complexity of the problem.

Space Complexity – The upper bound on the storage

space (memory) required at any point during the search, as a function of the

complexity of the problem.

Preliminary concepts

Two

varieties of space-for-time

algorithms:

Input enhancement — preprocess the input (or its

part) to store some info to be used later in solving the problem

o

Counting

for sorting

o

String

searching algorithms

Prestructuring — preprocess the input to make

accessing its elements easier

·

Hashing

§ Indexing

schemes (e.g., B-trees)

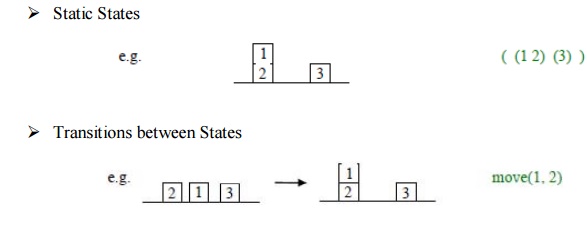

State Space Representations: The state

space is simply the space of all possible

states, or configurations, that our system may be in. Generally, of course,

we prefer to work with some convenient representation of that search space.

There are

two components to the representation of state spaces:

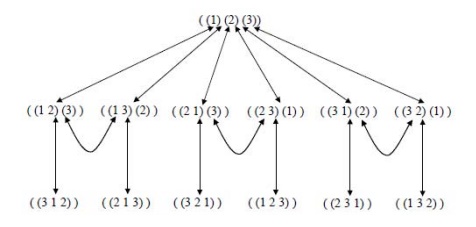

State Space Graphs: If the

number of possible states of the system is small enough, we can represent all of them, along with the transitions between

them, in a state space graph, e.g.

Routes through State Space: Our

general aim is to search for a route, or sequence of transitions, through the state space graph from our initial state

to a goal state.

Sometimes

there will be more than one possible goal state. We define a goal test to

determine if a goal state has been achieved.

The

solution can be represented as a sequence of link labels (or transitions) on

the state space graph. Note that the labels depend on the direction moved along

the link.

Sometimes

there may be more than one path to a goal state, and we may want to find the

optimal (best possible) path. We can define link costs and path costs for

measuring the cost of going along a particular path, e.g. the path cost may

just equal the number of links, or could be the sum of individual link costs.

For most

realistic problems, the state space graph will be too large for us to hold all

of it explicitly in memory at any one time.

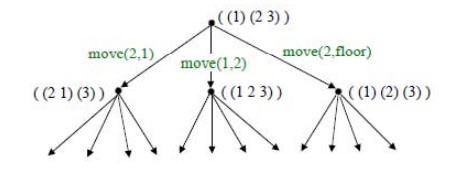

Search Trees: It is helpful to think of the

search process as building up a search tree

of routes through the state space graph. The root of the search tree is the

search node corresponding to the initial state.

The leaf

nodes correspond either to states that have not yet been expanded, or to states

that generated no further nodes when expanded.

Related Topics