Chapter: Artificial Intelligence

Semantic Analysis

Semantics is the study of the meaning of words, and semantic analysis is the analysis we use to extract meaning from utterances.

Semantic analysis involves building up a

representation of the objects and actions that a sentence is describing,

including details provided by adjectives, adverbs, and prepositions. Hence,

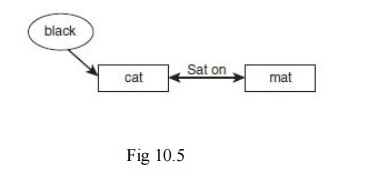

after analyzing the sentence The black cat sat on the mat, the system would use

a semantic net such as the one shown in Figure 10.5 to represent the objects

and the relationships between them.
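
As a rough illustration, a semantic net can be stored as a collection of labelled edges between concept nodes. The following Python sketch assumes hypothetical relation names (`is_a`, `colour`, `sat_on`) and node names (`cat_1`, `mat_1`); the net in Figure 10.5 may use different labels and structure.

```python
from collections import defaultdict


class SemanticNet:
    """A directed, labelled graph: nodes are concepts, edges are relations."""

    def __init__(self):
        # Maps each subject node to a list of (relation, object) pairs.
        self.edges = defaultdict(list)

    def add(self, subject, relation, obj):
        """Record the fact: subject --relation--> obj."""
        self.edges[subject].append((relation, obj))

    def related(self, subject, relation):
        """Return every node linked from `subject` by `relation`."""
        return [obj for rel, obj in self.edges.get(subject, []) if rel == relation]


# One possible net for "The black cat sat on the mat" (labels are hypothetical).
net = SemanticNet()
net.add("cat_1", "is_a", "cat")       # this particular cat is an instance of "cat"
net.add("cat_1", "colour", "black")   # detail supplied by the adjective "black"
net.add("cat_1", "sat_on", "mat_1")   # action from the verb plus the preposition "on"
net.add("mat_1", "is_a", "mat")

print(net.related("cat_1", "sat_on"))  # ['mat_1']
print(net.related("cat_1", "colour"))  # ['black']
```

Storing the action and its preposition as a single relation (`sat_on`) is only one design choice; a net could equally give the event its own node with separate agent and location links.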

In practice, a more sophisticated semantic network is likely to be built, one that also includes information about the nature of a cat (a cat is an object, an animal, a quadruped, etc.) that can be used to deduce further facts about the cat (e.g., that it likes to drink milk).
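
Deductions of this kind work by following “is a” links upward and inheriting properties from more general concepts. This sketch assumes a hypothetical hierarchy (`IS_A`) and property table (`PROPERTIES`); a value stored on a more specific concept overrides one stored on a more general concept.

```python
# Hypothetical "is a" hierarchy: each concept points to its more general parent.
IS_A = {
    "cat": "quadruped",
    "quadruped": "animal",
    "animal": "object",
}

# Hypothetical properties, each stored at the most general level where it holds.
PROPERTIES = {
    "cat": {"likes_to_drink": "milk"},
    "quadruped": {"legs": 4},
    "animal": {"alive": True},
}


def ancestors(concept):
    """Return [concept, parent, grandparent, ...] by following is-a links."""
    chain = []
    node = concept
    while node is not None:
        chain.append(node)
        node = IS_A.get(node)
    return chain


def inherited_properties(concept):
    """Collect properties from `concept` and all of its ancestors.

    Ancestors are visited from most general to most specific, so a value
    stored on a more specific concept overrides a more general one.
    """
    result = {}
    for node in reversed(ancestors(concept)):
        result.update(PROPERTIES.get(node, {}))
    return result


print(ancestors("cat"))             # ['cat', 'quadruped', 'animal', 'object']
print(inherited_properties("cat"))  # {'alive': True, 'legs': 4, 'likes_to_drink': 'milk'}
```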

Ambiguity and Pragmatic Analysis

One of the main differences between natural languages and formal languages such as C++ is that a sentence in a natural language can have more than one meaning. This is ambiguity: the fact that a sentence can be interpreted in different ways depending on who is speaking, the context in which it is spoken, and a number of other factors.

The following paragraphs describe the more common forms of ambiguity and look at ways in which a natural language processing system can make sensible decisions about how to disambiguate them.

Lexical ambiguity occurs when a word has more than one possible meaning. For example, a bat can be a flying mammal or a piece of sporting equipment. The word “set” is an interesting example because it can be used as a verb, a noun, an adjective, or an adverb. When only one analysis of the sentence is possible, a parser can often determine which part of speech is intended; in other cases, semantic disambiguation is needed to determine which meaning is intended.
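
The distinction between resolving the part of speech and resolving the sense can be sketched in a few lines. This example assumes a hypothetical mini-lexicon (`LEXICON`), a toy set of accepted tag sequences (`PATTERNS`) standing in for a real grammar, and a hypothetical sense inventory (`SENSES`); only taggings that match a pattern survive.

```python
from itertools import product

# Hypothetical mini-lexicon: each word maps to the parts of speech it can take.
# Only some uses of each word are listed.
LEXICON = {
    "the": {"Det"},
    "jane": {"Noun"},
    "set": {"Verb", "Noun", "Adj"},
    "table": {"Noun"},
    "bat": {"Noun", "Verb"},
    "flew": {"Verb"},
}

# Toy stand-in for a grammar: the tag sequences it accepts.
PATTERNS = {
    ("Det", "Noun", "Verb"),          # e.g. "the bat flew"
    ("Noun", "Verb", "Det", "Noun"),  # e.g. "Jane set the table"
}

# Hypothetical sense inventory for (word, part of speech) pairs.
SENSES = {
    ("bat", "Noun"): ["flying mammal", "piece of sporting equipment"],
}


def taggings(sentence):
    """Return every part-of-speech sequence for `sentence` that the grammar accepts."""
    words = sentence.lower().split()
    options = [sorted(LEXICON[w]) for w in words]
    return [tags for tags in product(*options) if tags in PATTERNS]


print(taggings("Jane set the table"))  # [('Noun', 'Verb', 'Det', 'Noun')]
print(taggings("The bat flew"))        # [('Det', 'Noun', 'Verb')]
print(SENSES[("bat", "Noun")])         # both noun senses remain
```

The toy grammar pins down “set” as a verb and “bat” as a noun, but both noun senses of “bat” remain, so semantic disambiguation is still needed.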

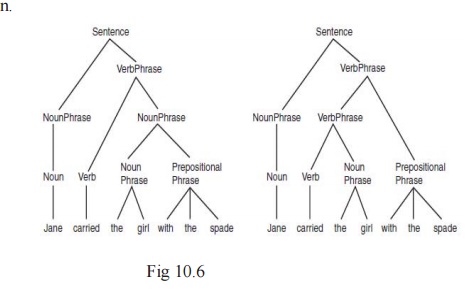

Syntactic ambiguity occurs when there is more than one possible parse of a sentence. The sentence “Jane carried the girl with the spade” could be interpreted in two different ways, as shown by the two parse trees in Figure 10.6. In the first parse tree, the prepositional phrase “with the spade” is attached to the noun phrase “the girl”, indicating that Jane carried the girl who had a spade. In the second parse tree, the prepositional phrase is attached to the verb phrase “carried the girl”, indicating that Jane somehow used the spade to carry the girl.
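
One way to make the two readings concrete is to count parses with the CYK algorithm. The grammar below is a hypothetical toy grammar in Chomsky normal form, written only for this example; its rules `NP -> NP PP` and `VP -> VP PP` provide the two attachment points described above.

```python
from collections import defaultdict

# Hypothetical toy grammar in Chomsky normal form: (parent, left child, right child).
BINARY_RULES = [
    ("S", "NP", "VP"),
    ("VP", "V", "NP"),
    ("VP", "VP", "PP"),  # prepositional phrase attached to the verb phrase
    ("NP", "NP", "PP"),  # prepositional phrase attached to the noun phrase
    ("NP", "Det", "N"),
    ("PP", "P", "NP"),
]
LEXICAL_RULES = {
    "jane": ["NP"],
    "carried": ["V"],
    "the": ["Det"],
    "girl": ["N"],
    "spade": ["N"],
    "with": ["P"],
}


def count_parses(sentence, start="S"):
    """Count distinct parse trees for `sentence` using CYK."""
    words = sentence.lower().split()
    n = len(words)
    # chart[(i, j)][A] = number of ways non-terminal A derives words[i:j]
    chart = defaultdict(lambda: defaultdict(int))
    for i, word in enumerate(words):
        for tag in LEXICAL_RULES.get(word, []):
            chart[(i, i + 1)][tag] += 1
    # Build longer spans from pairs of shorter, already-filled spans.
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            j = i + length
            for k in range(i + 1, j):
                left, right = chart[(i, k)], chart[(k, j)]
                for parent, b, c in BINARY_RULES:
                    if left[b] and right[c]:
                        chart[(i, j)][parent] += left[b] * right[c]
    return chart[(0, n)][start]


print(count_parses("Jane carried the girl with the spade"))  # 2
print(count_parses("Jane carried the girl"))                 # 1
```

Removing either attachment rule leaves exactly one parse, which shows that the ambiguity comes from the grammar allowing the prepositional phrase to attach in two places.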

Semantic ambiguity occurs when a sentence has more than one possible meaning, often as a result of a syntactic ambiguity. For example, the sentence “Jane carried the girl with the spade” in Figure 10.6 has two different parses, which correspond to two possible meanings. The significance of this becomes clearer for practical systems if we imagine a robot that receives vocal instructions from a human: before it can act on an instruction, it must decide which of the possible meanings was intended.

Referential ambiguity occurs when we use anaphoric expressions, such as pronouns, to refer to objects that have already been discussed. Anaphora occurs when a word or phrase is used to refer to something without naming it. The problem of ambiguity arises when it is not immediately clear which object is being referred to. For example, consider the following sentences:

John gave Bob the sandwich. He smiled.

It is not at all clear from this who smiled: it could have been John or Bob. In general, English speakers and writers avoid constructions such as this so as not to confuse their listeners or readers. However, the same kind of ambiguity can also occur where a human would have no difficulty resolving it, as in:

John gave the dog the sandwich. It wagged its tail.

In this case, a human listener would know very well that it was the dog that wagged its tail, and not the sandwich. Without specific world knowledge, however, a natural language processing system might not find it so obvious.
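
A common first step in resolving a pronoun is to filter the previously mentioned entities by grammatical agreement and then by world knowledge about what the predicate requires of its subject. The sketch below assumes hypothetical feature flags (`can_smile`, `has_tail`) and a hypothetical table of predicate constraints (`PREDICATE_REQUIRES`); real systems need far richer knowledge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    name: str
    gender: str      # "male", "female" or "neuter", used for pronoun agreement
    can_smile: bool  # hypothetical world-knowledge flags
    has_tail: bool


# Which grammatical gender each pronoun requires.
PRONOUN_GENDER = {"he": "male", "she": "female", "it": "neuter"}

# Hypothetical selectional restrictions: what each predicate requires of its subject.
PREDICATE_REQUIRES = {
    "smiled": lambda e: e.can_smile,
    "wagged its tail": lambda e: e.has_tail,
}


def resolve(pronoun, predicate, mentioned):
    """Return the names of the mentioned entities the pronoun could refer to."""
    gender = PRONOUN_GENDER[pronoun.lower()]
    agreeing = [e for e in mentioned if e.gender == gender]
    requirement = PREDICATE_REQUIRES[predicate]
    return [e.name for e in agreeing if requirement(e)]


john = Entity("John", "male", can_smile=True, has_tail=False)
bob = Entity("Bob", "male", can_smile=True, has_tail=False)
dog = Entity("the dog", "neuter", can_smile=False, has_tail=True)
sandwich = Entity("the sandwich", "neuter", can_smile=False, has_tail=False)

# "John gave Bob the sandwich. He smiled."
print(resolve("He", "smiled", [john, bob, sandwich]))           # ['John', 'Bob']
# "John gave the dog the sandwich. It wagged its tail."
print(resolve("It", "wagged its tail", [john, dog, sandwich]))  # ['the dog']
```

For “He smiled”, both John and Bob survive the filters, so the sentence stays ambiguous; for “It wagged its tail”, agreement rules out John and the knowledge that sandwiches have no tails leaves only the dog.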

A local ambiguity occurs when part of a sentence is ambiguous but the ambiguity is resolved once the whole sentence is examined. For example, in the sentence “There are longer rivers than the Thames”, the phrase “longer rivers” is ambiguous until we read the rest of the sentence, “than the Thames”.

Another cause of ambiguity in human language is vagueness. As we saw when we examined fuzzy logic, words such as “tall”, “high”, and “fast” are vague and do not have precise numeric meanings.
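
In fuzzy logic, a vague word such as “tall” can be modelled by a membership function that returns a degree between 0 and 1 instead of a yes/no answer. The linear ramp and its thresholds (160 cm and 190 cm) below are assumptions chosen for illustration; a realistic system would also need context, since a tall building and a tall person involve very different heights.

```python
def tall(height_cm, low=160.0, high=190.0):
    """Degree (0.0 to 1.0) to which a person of this height counts as tall.

    Uses a linear ramp between the hypothetical thresholds `low` and `high`.
    """
    if height_cm <= low:
        return 0.0
    if height_cm >= high:
        return 1.0
    return (height_cm - low) / (high - low)


for h in (150, 175, 195):
    print(h, tall(h))  # 150 0.0, then 175 0.5, then 195 1.0
```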

The process by which a natural language processing system determines which meaning is intended by an ambiguous utterance is known as disambiguation.

Disambiguation can be done in a number of ways. One of the most effective ways to overcome many forms of ambiguity is to use probability.

This can be done using prior probabilities or conditional probabilities. A prior probability might be used to tell the system that the word “bat” nearly always means a piece of sporting equipment.

A conditional probability would tell it that when the word “bat” is used by a sports fan, this is likely to be the case, but that when it is spoken by a naturalist, it is more likely to mean a winged mammal.
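
The difference between the two kinds of probability can be shown with counts. In this sketch the table `COUNTS` is invented data recording which sense of “bat” different speakers meant; the prior ignores who is speaking, while the conditional probability is computed only over the given speaker's uses.

```python
# Hypothetical counts of which sense of "bat" was meant, by kind of speaker.
COUNTS = {
    "sports fan": {"sporting equipment": 900, "winged mammal": 10},
    "naturalist": {"sporting equipment": 20, "winged mammal": 70},
}
SENSES = ["sporting equipment", "winged mammal"]


def prior(sense):
    """P(sense): how often the sense occurs, ignoring who is speaking."""
    total = sum(sum(by_sense.values()) for by_sense in COUNTS.values())
    return sum(by_sense[sense] for by_sense in COUNTS.values()) / total


def conditional(sense, speaker):
    """P(sense | speaker): how often the sense occurs for this kind of speaker."""
    by_sense = COUNTS[speaker]
    return by_sense[sense] / sum(by_sense.values())


def most_likely(speaker=None):
    """Pick the sense with the highest prior, or the highest conditional if the speaker is known."""
    if speaker is None:
        return max(SENSES, key=prior)
    return max(SENSES, key=lambda sense: conditional(sense, speaker))


print(round(prior("sporting equipment"), 2))  # 0.92
print(most_likely())                          # sporting equipment
print(most_likely("naturalist"))              # winged mammal
```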

Context is also an extremely important tool in disambiguation. Consider the following sentences:

I went into the cave. It was full of bats.

I looked in the locker. It was full of bats.

In each case, the second sentence is the same, but the context provided by the first sentence helps us to choose the correct meaning of the word “bat”.
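
A very simple way to use context is to score each sense by how many of its typically associated words appear nearby. The signature sets below are hypothetical, and this keyword-overlap heuristic is a simplification assumed for illustration, not a complete disambiguation method.

```python
# Hypothetical signature words associated with each sense of "bat".
SENSE_SIGNATURES = {
    "winged mammal": {"cave", "night", "wings", "fly", "roost"},
    "sporting equipment": {"locker", "cricket", "baseball", "ball", "team"},
}


def disambiguate_bat(context):
    """Choose the sense of "bat" whose signature overlaps most with the context."""
    words = set(context.lower().replace(".", " ").split())
    scores = {sense: len(signature & words) for sense, signature in SENSE_SIGNATURES.items()}
    # max() returns the first sense listed when scores are tied.
    return max(scores, key=scores.get)


print(disambiguate_bat("I went into the cave. It was full of bats."))    # winged mammal
print(disambiguate_bat("I looked in the locker. It was full of bats."))  # sporting equipment
```

If no signature word appears, every score is zero and this sketch simply returns the first sense listed, which is one reason to combine context with prior probabilities.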

Disambiguation thus requires a good world model, which contains knowledge about the world that can be used to determine the most likely meaning of a given word or sentence. The world model would help the system to understand that the sentence “Jane carried the girl with the spade” is unlikely to mean that Jane used the spade to carry the girl, because spades are usually used to carry things much smaller than a girl. The challenge, of course, is to encode this knowledge in a way that the system can use effectively and efficiently.
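
A world model can start as a few facts about typical sizes and what tools can carry. This sketch assumes hypothetical numbers (`TYPICAL_SIZE`, `CAN_CARRY_UP_TO`) in arbitrary units and uses them to choose between the two attachments of the prepositional phrase.

```python
# Hypothetical world knowledge, in arbitrary size units.
TYPICAL_SIZE = {"girl": 120, "soil": 5, "leaves": 3}
CAN_CARRY_UP_TO = {"spade": 10}


def preferred_attachment(obj, instrument):
    """Choose a parse for "X carried the OBJ with the INSTRUMENT".

    Returns "VP" if the instrument plausibly did the carrying (the phrase
    attaches to the verb phrase) and "NP" otherwise (the phrase attaches to
    the noun phrase: the object was with the instrument).
    """
    capacity = CAN_CARRY_UP_TO.get(instrument, 0)
    if TYPICAL_SIZE[obj] <= capacity:
        return "VP"
    return "NP"


print(preferred_attachment("girl", "spade"))  # NP: a girl is too big for a spade to carry
print(preferred_attachment("soil", "spade"))  # VP: the spade was used to carry the soil
```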

The world model needs to be as broad as the range of sentences the system is likely to hear. For example, a natural language processing system devoted to answering sports questions might not need to know how to distinguish the sporting bat from the winged mammal, but a system designed to answer any type of question would.
