Chapter: Biochemistry: Biochemistry and the Organization of Cells

Predicting Reactions

Let us

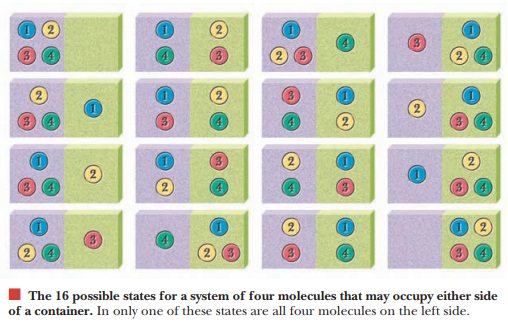

consider a very simple system to illustrate the concept of entropy. We place

four molecules in a con-tainer. There is an equal chance that each molecule

will be on the left or on the right side of the container. Mathematically

stated, the probability of finding a

given molecule on one side is 1/2. We can express any prob-ability as a

fraction ranging from 0 (impossible) to 1 (completely certain). We can see that

16 possible ways exist to arrange the four molecules in the container. In only

one of these will all four molecules lie on the left side, but six possible

arrangements exist with the four molecules evenly distributed between the two

sides. Aless ordered (more dispersed)

arrangement is more probable than a highly ordered arrangement. Entropy is

definedin terms of the number of possible arrangements of molecules.
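The counting in this example can be checked by brute force. The following is a minimal sketch in Python, not part of the original text; the names `N_MOLECULES`, `arrangements`, and `counts_by_left` are illustrative choices. It lists every left/right assignment of the four molecules and groups the assignments by how many molecules are on the left side.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

N_MOLECULES = 4  # number of molecules in the container, as in the text

# Each arrangement assigns every molecule to the left ("L") or right ("R") side.
arrangements = list(product("LR", repeat=N_MOLECULES))
total = len(arrangements)  # 2**4 = 16 arrangements

# Group the arrangements by how many molecules sit on the left side.
counts_by_left = Counter(arr.count("L") for arr in arrangements)

for n_left in range(N_MOLECULES, -1, -1):
    ways = counts_by_left[n_left]
    probability = Fraction(ways, total)
    print(f"{n_left} left, {N_MOLECULES - n_left} right: "
          f"{ways} ways, probability {probability}")
```

The loop reports 1 way for all four molecules on the left and 6 ways for the even two-and-two split, so the evenly distributed state is six times as probable as the fully ordered one.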

Boltzmann’s equation for entropy, S, is S = k ln W. In this equation, the term W represents the number of possible arrangements of molecules, ln is the logarithm to the base e, and k is the constant universally referred to as Boltzmann’s constant. It is equal to R/N, where R is the gas constant and N is Avogadro’s number (6.02 × 10²³), the number of molecules in a mole.
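As a rough numerical illustration of S = k ln W, the sketch below computes k from R/N and compares the entropy for two values of W taken from the four-molecule example. Two things here are assumptions rather than statements from the text: the value R = 8.314 J/(mol·K), and the choice to treat "all four on the left" as W = 1 and "evenly distributed" as W = 6. The function name `boltzmann_entropy` is likewise illustrative.

```python
import math

R = 8.314      # gas constant in J/(mol·K); value assumed, not given in the text
N_A = 6.02e23  # Avogadro's number, as quoted in the text

# Boltzmann's constant, k = R/N, in J/K (roughly 1.38e-23)
k = R / N_A


def boltzmann_entropy(w):
    """Return S = k ln W for W possible arrangements (W >= 1)."""
    return k * math.log(w)


# Assumed mapping from the four-molecule example:
S_ordered = boltzmann_entropy(1)  # all four molecules on the left, W = 1
S_even = boltzmann_entropy(6)     # two molecules on each side, W = 6

print(f"k = {k:.3e} J/K")
print(f"S (W = 1) = {S_ordered:.3e} J/K")
print(f"S (W = 6) = {S_even:.3e} J/K")
```

Since ln 1 = 0, the single fully ordered arrangement has zero entropy in this picture, while the state with more possible arrangements has a higher entropy.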
