Chapter: Cryptography and Network Security

Intrusion Detection


Inevitably, the best intrusion prevention system will fail. A system's second line of defense is intrusion detection, and this has been the focus of much research in recent years. This interest is motivated by a number of considerations, including the following:

· If an intrusion is detected quickly enough, the intruder can be identified and ejected from the system before any damage is done or any data are compromised.

· An effective intrusion detection system can serve as a deterrent, so acting to prevent intrusions.

· Intrusion detection enables the collection of information about intrusion techniques that can be used to strengthen the intrusion prevention facility.

Intrusion detection is based on the assumption that the behavior of the intruder differs from that of a legitimate user in ways that can be quantified.

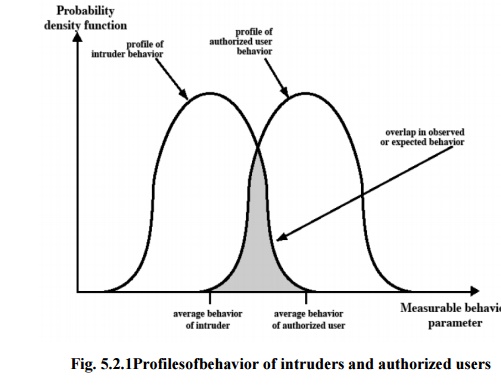

Figure 5.2.1 suggests, in very abstract terms, the nature of the task confronting the designer of an intrusion detection system. Although the typical behavior of an intruder differs from the typical behavior of an authorized user, there is an overlap in these behaviors. Thus, a loose interpretation of intruder behavior, which will catch more intruders, will also lead to a number of "false positives," or authorized users identified as intruders. On the other hand, an attempt to limit false positives by a tight interpretation of intruder behavior will lead to an increase in false negatives, or intruders not identified as intruders. Thus, there is an element of compromise and art in the practice of intrusion detection.

1 Approaches to Intrusion Detection

· Statistical anomaly detection: Involves the collection of data relating to the behavior of legitimate users over a period of time. Then statistical tests are applied to observed behavior to determine with a high level of confidence whether that behavior is not legitimate user behavior.

  o Threshold detection: This approach involves defining thresholds, independent of user, for the frequency of occurrence of various events.

  o Profile based: A profile of the activity of each user is developed and used to detect changes in the behavior of individual accounts.

· Rule-based detection: Involves an attempt to define a set of rules that can be used to decide that a given behavior is that of an intruder.

  o Anomaly detection: Rules are developed to detect deviation from previous usage patterns.

  o Penetration identification: An expert system approach that searches for suspicious behavior.

In terms of the types of attackers listed earlier, statistical anomaly detection is effective against masqueraders. On the other hand, such techniques may be unable to deal with misfeasors. For such attacks, rule-based approaches may be able to recognize events and sequences that, in context, reveal penetration. In practice, a system may exhibit a combination of both approaches to be effective against a broad range of attacks.

Audit Records

A fundamental tool for intrusion detection is the audit record. Some record of ongoing activity by users must be maintained as input to an intrusion detection system. Basically, two plans are used:

· Native audit records: Virtually all multiuser operating systems include accounting software that collects information on user activity. The advantage of using this information is that no additional collection software is needed. The disadvantage is that the native audit records may not contain the needed information or may not contain it in a convenient form.

· Detection-specific audit records: A collection facility can be implemented that generates audit records containing only that information required by the intrusion detection system. One advantage of such an approach is that it could be made vendor independent and ported to a variety of systems. The disadvantage is the extra overhead involved in having, in effect, two accounting packages running on a machine.

Each audit record contains the following fields:

·

Subject: Initiators

of actions. A subject is typically a terminal user but might also be a

o

process acting on behalf of users or groups of

users.

·

·

Object: Receptors

of actions. Examples include files, programs, messages, records, terminals, printers, and user- or

program-created structures

·

7. Resource-Usage:

A list of quantitative elements in which each element gives the amount used of some resource (e.g., number of

lines printed or displayed, number of records read

o

or written, processor time, I/O units used, session

elapsed time).

·

·

8. Time-Stamp: Unique time-and-date stamp

identifying when the action took place. Most user operations are made up

of a number of elementary actions. For example, a file copy involves the

execution of the user command, which includes doing access validation and

setting up the copy, plus the read from one file, plus the write to another

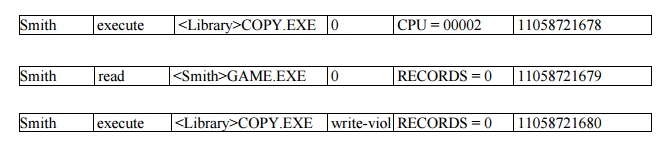

file. Consider the command

COPY GAME.EXE

TO <Library>GAME.EXE

issued by

Smith to copy an executable file GAME from the current directory to the

<Library> directory. The following audit records may be generated:

In this case, the copy is aborted because Smith does not have write permission to <Library>. The decomposition of a user operation into elementary actions has three advantages:

· Because objects are the protectable entities in a system, the use of elementary actions enables an audit of all behavior affecting an object. Thus, the system can detect attempted subversions of access controls.

· Single-object, single-action audit records simplify the model and the implementation.

· Because of the simple, uniform structure of the detection-specific audit records, it may be relatively easy to obtain this information or at least part of it by a straightforward mapping from existing native audit records to the detection-specific audit records.
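The decomposition of one user command into single-object, single-action records can be sketched in Python as below. This is a minimal illustration, assuming one field per audit-record attribute (subject, action, object, exception condition, resource usage, time stamp); the `AuditRecord` class, the hypothetical `decompose_copy` helper, the `<Smith>GAME.EXE` source path, and the resource figures and timestamps are all illustrative values, not the ones in the figure.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class AuditRecord:
    """One elementary action on one object (single-object, single-action record)."""
    subject: str                  # initiator of the action, e.g. a user
    action: str                   # operation performed, e.g. "execute", "read", "write"
    obj: str                      # receptor of the action, e.g. a file
    exception: Optional[str]      # exception condition raised on return, or None
    resource_usage: dict = field(default_factory=dict)  # e.g. {"CPU": 2}
    timestamp: int = 0            # unique time-and-date stamp

def decompose_copy(user: str, src: str, dst: str, can_write: bool, t0: int) -> list:
    """Hypothetical decomposition of 'COPY src TO dst' into elementary actions.

    Resource figures and timestamps are illustrative only.
    """
    records = [
        AuditRecord(user, "execute", "<Library>COPY.EXE", None, {"CPU": 2}, t0),
        AuditRecord(user, "read", src, None, {"RECORDS": 0}, t0 + 1),
    ]
    # The write is the action that fails when the user lacks write permission,
    # so only that record carries an exception condition.
    exception = None if can_write else "write-viol"
    records.append(AuditRecord(user, "write", dst, exception, {"RECORDS": 0}, t0 + 2))
    return records

records = decompose_copy("Smith", "<Smith>GAME.EXE", "<Library>GAME.EXE",
                         can_write=False, t0=1000)
for r in records:
    print(r.subject, r.action, r.obj, r.exception or "0")
```

Because each record names exactly one object, an analyzer can count exception conditions per subject or per object directly, without first parsing compound commands.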

1.1 Statistical Anomaly Detection

As was mentioned, statistical anomaly detection techniques fall into two broad categories: threshold detection and profile-based systems. Threshold detection involves counting the number of occurrences of a specific event type over an interval of time. If the count surpasses what is considered a reasonable number that one might expect to occur, then intrusion is assumed.

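Threshold analysis, by itself, is a crude and ineffective detector of even moderately sophisticated attacks. Both the threshold and the time interval must be determined, and because user behavior varies widely, a single fixed threshold is likely to generate either many false positives or many false negatives.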
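Threshold detection as described above amounts to counting one event type over a sliding time window. The following is a minimal sketch; the class name `ThresholdDetector` and the example parameters (3 events per 60 seconds) are hypothetical choices, not values from the text.

```python
from collections import deque

class ThresholdDetector:
    """Counts one event type in a sliding time window and alerts when the
    count exceeds a fixed, user-independent threshold."""

    def __init__(self, threshold: int, window_seconds: float):
        self.threshold = threshold
        self.window = window_seconds
        self.events = deque()  # timestamps of recent events, oldest first

    def observe(self, timestamp: float) -> bool:
        """Record one event; return True if the windowed count now exceeds the threshold."""
        self.events.append(timestamp)
        # Discard events that have fallen out of the time window.
        while self.events and self.events[0] <= timestamp - self.window:
            self.events.popleft()
        return len(self.events) > self.threshold

# Example: password failures, alert on more than 3 within 60 seconds.
detector = ThresholdDetector(threshold=3, window_seconds=60)
alerts = [detector.observe(t) for t in (0, 10, 20, 30)]
```

Both knobs, `threshold` and `window_seconds`, must be chosen up front, which is exactly where the false positive / false negative trade-off enters.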

1.2 Profile-Based Anomaly Detection

Profile-based anomaly detection focuses on characterizing the past behavior of individual users or related groups of users and then detecting significant deviations. A profile may consist of a set of parameters, so that deviation on just a single parameter may not be sufficient in itself to signal an alert.

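The foundation of this approach is an analysis of audit records. The audit records provide input to the intrusion detection function in two ways. First, the designer must decide on a number of quantitative metrics that can be used to measure user behavior; an analysis of audit records over a period of time can then be used to determine the activity profile of the average user, so the audit records serve to define typical behavior. Second, current audit records are the input used to detect intrusion: the intrusion detection model analyzes incoming audit records to determine their deviation from that typical behavior. Examples of metrics that are useful for profile-based intrusion detection are the following: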

· Counter: A nonnegative integer that may be incremented but not decremented until it is reset by management action. Typically, a count of certain event types is kept over a particular period of time. Examples include the number of logins by a single user during an hour, the number of times a given command is executed during a single user session, and the number of password failures during a minute.

· Gauge: A nonnegative integer that may be incremented or decremented. Typically, a gauge is used to measure the current value of some entity. Examples include the number of logical connections assigned to a user application and the number of outgoing messages queued for a user process.

· Interval timer: The length of time between two related events. An example is the length of time between successive logins to an account.

· Resource utilization: Quantity of resources consumed during a specified period. Examples include the number of pages printed during a user session and total time consumed by a program execution.
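The first three metric types can be modeled as small classes with exactly the operations the definitions allow. This is a sketch under our own naming (`Counter`, `Gauge`, `IntervalTimer` are hypothetical classes); clamping the gauge at zero is an assumed design choice to keep it nonnegative. Resource utilization is simply a per-period sum and is omitted.

```python
class Counter:
    """Nonnegative integer that only increases until reset by management action."""
    def __init__(self):
        self.value = 0

    def increment(self, n: int = 1) -> None:
        if n < 0:
            raise ValueError("a counter cannot be decremented")
        self.value += n

    def reset(self) -> None:
        self.value = 0


class Gauge:
    """Nonnegative integer that may go up or down, e.g. queued outgoing messages."""
    def __init__(self):
        self.value = 0

    def increment(self, n: int = 1) -> None:
        self.value += n

    def decrement(self, n: int = 1) -> None:
        # Assumption: clamp at zero so the gauge stays nonnegative.
        self.value = max(0, self.value - n)


class IntervalTimer:
    """Length of time between two related events, e.g. successive logins."""
    def __init__(self):
        self.last = None

    def mark(self, t: float):
        """Record an event at time t; return the interval since the previous one, or None."""
        interval = None if self.last is None else t - self.last
        self.last = t
        return interval
```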

Given these general metrics, various tests can be performed to determine whether current activity fits within acceptable limits, based on the following models:

· Mean and standard deviation

· Multivariate

· Markov process

· Time series

· Operational

The simplest statistical test is to measure the mean and standard deviation of a parameter over some historical period. This gives a reflection of the average behavior and its variability.
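A mean-and-standard-deviation test can be written in a few lines with Python's `statistics` module. This is a minimal sketch; the cutoff of `k = 3` standard deviations is an assumed value, not one prescribed by the text.

```python
import statistics

def is_anomalous(history: list, observation: float, k: float = 3.0) -> bool:
    """Flag an observation lying more than k standard deviations from the historical mean."""
    mean = statistics.mean(history)
    sd = statistics.stdev(history)  # sample standard deviation; needs at least 2 points
    if sd == 0:
        # No historical variability: any change at all is a deviation.
        return observation != mean
    return abs(observation - mean) > k * sd

# Hypothetical history: logins per day for one user.
logins_per_day = [10, 12, 11, 9, 10, 11, 12, 10]
```

Here a day with 11 logins fits the profile, while a day with 40 would be flagged.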

A multivariate model is based on correlations between two or more variables. Intruder behavior may be characterized with greater confidence by considering such correlations (for example, processor time and resource usage, or login frequency and session elapsed time).

A Markov process model is used to establish transition probabilities among various states. As an example, this model might be used to look at transitions between certain commands.
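A first-order Markov model over commands can be estimated by counting transitions in a historical command sequence; a new session is then checked for transitions that were rare or never seen. This is a sketch: the command names and the `min_prob` cutoff of 0.05 are hypothetical.

```python
from collections import Counter, defaultdict

def transition_probabilities(commands):
    """Estimate P(next command | current command) from a historical command sequence."""
    counts = defaultdict(Counter)
    for current, nxt in zip(commands, commands[1:]):
        counts[current][nxt] += 1
    return {
        current: {nxt: n / sum(followers.values()) for nxt, n in followers.items()}
        for current, followers in counts.items()
    }

def rare_transitions(probs, session, min_prob=0.05):
    """Return transitions in a new session whose learned probability is below min_prob."""
    flagged = []
    for current, nxt in zip(session, session[1:]):
        p = probs.get(current, {}).get(nxt, 0.0)  # unseen transitions get probability 0
        if p < min_prob:
            flagged.append((current, nxt, p))
    return flagged

history = ["ls", "cd", "ls", "cd", "ls", "vi", "make", "ls", "cd"]
probs = transition_probabilities(history)
```

For instance, a session running `rm` right after `ls` would be flagged, since that transition never occurs in the history.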

A time series model focuses on time intervals, looking for sequences of events that happen too rapidly or too slowly. A variety of statistical tests can be applied to characterize abnormal timing.
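As one example of such a test, "too rapidly" and "too slowly" can be defined by percentiles of the historical gaps between events. The sketch below assumes the 5th and 95th percentiles as limits (our choice) and uses `statistics.quantiles` with `method="inclusive"` so the limits stay within the observed range. The login timestamps are hypothetical.

```python
import statistics

def interval_bounds(timestamps, n=20):
    """Derive 'too fast' / 'too slow' limits from the 5th and 95th percentiles of past gaps."""
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    cuts = statistics.quantiles(gaps, n=n, method="inclusive")  # n - 1 cut points
    return cuts[0], cuts[-1]

def abnormal_gaps(timestamps, bounds):
    """Return the inter-event gaps that fall outside the historical limits."""
    low, high = bounds
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    return [g for g in gaps if not (low <= g <= high)]

# Hypothetical history: events roughly once a minute.
history = [0, 55, 115, 180, 238, 300, 361, 420, 483, 540, 600]
bounds = interval_bounds(history)
```

A new sequence with gaps of 60, 2, and 300 seconds would have the 2-second burst and the 300-second lull reported.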

Finally, an operational model is based on a judgment of what is considered abnormal, rather than an automated analysis of past audit records. Typically, fixed limits are defined and intrusion is suspected for an observation that is outside the limits.
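Because the operational model relies on judgment rather than learned history, it reduces to a table of fixed limits. In this sketch the metric names and limit values are hypothetical, standing in for whatever an administrator would choose.

```python
# Limits chosen by an administrator's judgment, not learned from audit history.
# The metric names and values are hypothetical examples.
OPERATIONAL_LIMITS = {
    "password_failures_per_minute": (0, 5),
    "pages_printed_per_session": (0, 500),
    "logins_per_hour": (0, 10),
}

def check_limits(observations: dict, limits=OPERATIONAL_LIMITS) -> list:
    """Return the names of metrics whose observed value lies outside its fixed limits."""
    violations = []
    for metric, value in observations.items():
        if metric in limits:
            low, high = limits[metric]
            if not (low <= value <= high):
                violations.append(metric)
    return violations
```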

1.3 Rule-Based Intrusion Detection

Rule-based techniques detect intrusion by observing events in the system and applying a set of rules that lead to a decision regarding whether a given pattern of activity is or is not suspicious.

Rule-based anomaly detection is similar in terms of its approach and strengths to statistical anomaly detection. With the rule-based approach, historical audit records are analyzed to identify usage patterns and to automatically generate rules that describe those patterns. Rules may represent past behavior patterns of users, programs, privileges, time slots, terminals, and so on. Current behavior is then observed, and each transaction is matched against the set of rules to determine if it conforms to any historically observed pattern of behavior.

As with statistical anomaly detection, rule-based anomaly detection does not require knowledge of security vulnerabilities within the system. Rather, the scheme is based on observing past behavior and, in effect, assuming that the future will be like the past.
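The generate-then-match cycle of rule-based anomaly detection can be sketched with exact-match patterns. This is a deliberate simplification: each distinct historical combination of user, program, privilege, time slot, and terminal becomes a rule, whereas practical systems generalize patterns more cleverly. The record field names and the 08:00 to 18:00 working-hours boundary are assumptions.

```python
def time_slot(hour: int) -> str:
    """Coarsen an hour of day into a time slot (the slot boundaries are an assumption)."""
    return "working" if 8 <= hour < 18 else "after-hours"

def pattern(record: dict) -> tuple:
    """Features a rule is built from: user, program, privilege, time slot, terminal."""
    return (record["user"], record["program"], record["privilege"],
            time_slot(record["hour"]), record["terminal"])

def generate_rules(history: list) -> set:
    """Each distinct historical pattern becomes a rule: 'this behavior is normal'."""
    return {pattern(r) for r in history}

def nonconforming(rules: set, current: list) -> list:
    """Transactions that conform to no historically observed pattern."""
    return [r for r in current if pattern(r) not in rules]

history = [
    {"user": "smith", "program": "vi", "privilege": "user", "hour": 10, "terminal": "tty1"},
    {"user": "smith", "program": "cc", "privilege": "user", "hour": 14, "terminal": "tty1"},
]
current = [
    {"user": "smith", "program": "vi", "privilege": "user", "hour": 9, "terminal": "tty1"},
    {"user": "smith", "program": "vi", "privilege": "root", "hour": 23, "terminal": "tty1"},
]
suspicious = nonconforming(generate_rules(history), current)
```

Note that no knowledge of vulnerabilities is encoded anywhere: only the second transaction is reported, purely because nothing like it appears in the history.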

Rule-based penetration identification takes a very different approach to intrusion detection, one based on expert system technology. The key feature of such systems is the use of rules for identifying known penetrations or penetrations that would exploit known weaknesses.

Example heuristics are the following:

o Users should not read files in other users' personal directories.

o Users must not write other users' files.

o Users who log in after hours often access the same files they used earlier.

o Users do not generally open disk devices directly but rely on higher-level operating system utilities.

o Users should not be logged in more than once to the same system.

o Users do not make copies of system programs.
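A few of these heuristics are stateless and can be written as predicates over single events, as in the sketch below. It assumes Unix-style paths, treats `/home/<user>/...` as that user's personal directory, and uses hypothetical action names and system-program directories; heuristics that need session state (such as multiple concurrent logins) are not encoded.

```python
from dataclasses import dataclass

@dataclass
class Event:
    user: str
    action: str   # hypothetical action names: "read", "write", "open_device", "copy"
    path: str

def owner_of(path: str):
    """Hypothetical helper: '/home/<user>/...' belongs to <user>; other paths return None."""
    parts = path.split("/")
    return parts[2] if len(parts) > 2 and parts[1] == "home" else None

# Each rule returns True when an event matches a known-penetration heuristic.
RULES = {
    "read in another user's personal directory":
        lambda e: e.action == "read" and owner_of(e.path) not in (None, e.user),
    "write to another user's file":
        lambda e: e.action == "write" and owner_of(e.path) not in (None, e.user),
    "direct disk device access":
        lambda e: e.action == "open_device" and e.path.startswith("/dev/"),
    "copy of a system program":
        lambda e: e.action == "copy" and e.path.startswith(("/bin/", "/sbin/", "/usr/bin/")),
}

def match_rules(event: Event) -> list:
    """Names of all heuristics the event violates."""
    return [name for name, rule in RULES.items() if rule(event)]
```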

2 The Base-Rate Fallacy

To be of practical use, an intrusion detection system should detect a substantial percentage of intrusions while keeping the false alarm rate at an acceptable level. If only a modest percentage of actual intrusions are detected, the system provides a false sense of security. On the other hand, if the system frequently triggers an alert when there is no intrusion (a false alarm), then either system managers will begin to ignore the alarms, or much time will be wasted analyzing the false alarms.

Unfortunately, because of the nature of the probabilities involved, it is very difficult to meet the standard of a high rate of detections with a low rate of false alarms. In general, if the actual number of intrusions is low compared to the number of legitimate uses of a system, then most alarms will be false alarms unless the test is extremely discriminating.
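Bayes' theorem makes the base-rate effect concrete. The function below computes the probability that an alarm corresponds to a real intrusion; the figures used with it (1 intrusion per 10,000 events, 99% detection rate) are hypothetical.

```python
def p_intrusion_given_alarm(base_rate: float, detection_rate: float,
                            false_alarm_rate: float) -> float:
    """Bayes' theorem: P(I|A) = P(A|I)P(I) / (P(A|I)P(I) + P(A|not I)P(not I))."""
    true_alarms = detection_rate * base_rate
    false_alarms = false_alarm_rate * (1 - base_rate)
    return true_alarms / (true_alarms + false_alarms)

# Hypothetical figures: 1 intrusion per 10,000 events, 99% of intrusions detected.
with_1pct_false_alarms = p_intrusion_given_alarm(1e-4, 0.99, 0.01)      # about 0.0098
with_001pct_false_alarms = p_intrusion_given_alarm(1e-4, 0.99, 1e-4)    # about 0.50
```

Even with a 1% false alarm rate, fewer than 1 alarm in 100 is a real intrusion; the false alarm rate has to fall to roughly the base rate itself before about half the alarms are genuine.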

3 Distributed Intrusion Detection

Until recently, work on intrusion detection systems focused on single-system stand-alone facilities. The typical organization, however, needs to defend a distributed collection of hosts supported by a LAN. Porras points out the following major issues in the design of a distributed intrusion detection system:

· A distributed intrusion detection system may need to deal with different audit record formats. In a heterogeneous environment, different systems will employ different native audit collection systems and, if using intrusion detection, may employ different formats for security-related audit records.

· One or more nodes in the network will serve as collection and analysis points for the data from the systems on the network. Thus, either raw audit data or summary data must be transmitted across the network. Therefore, there is a requirement to assure the integrity and confidentiality of these data.

· Either a centralized or decentralized architecture can be used. A centralized architecture has a single point of collection and analysis, which eases the correlation of incoming reports but creates a potential bottleneck and single point of failure; a decentralized architecture has more than one analysis center, and these must coordinate their activities and exchange information.
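For the integrity requirement on audit data sent across the network, a message authentication code is one standard tool. The sketch below uses HMAC-SHA256 from Python's standard `hmac` module and assumes a key already shared between agent and collector (key distribution is not shown). It covers integrity only; confidentiality would additionally require encryption, for example by carrying the messages over TLS.

```python
import hashlib
import hmac
import json
from typing import Optional

def seal(record: dict, key: bytes) -> dict:
    """Attach an HMAC-SHA256 tag so the collector can detect tampering in transit."""
    payload = json.dumps(record, sort_keys=True)
    tag = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}

def verify(message: dict, key: bytes) -> Optional[dict]:
    """Return the record if the tag checks out, else None."""
    expected = hmac.new(key, message["payload"].encode(), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking how many leading characters matched.
    if not hmac.compare_digest(expected, message["tag"]):
        return None
    return json.loads(message["payload"])

key = b"hypothetical-shared-secret"
message = seal({"user": "smith", "event": "login", "ok": False}, key)
```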

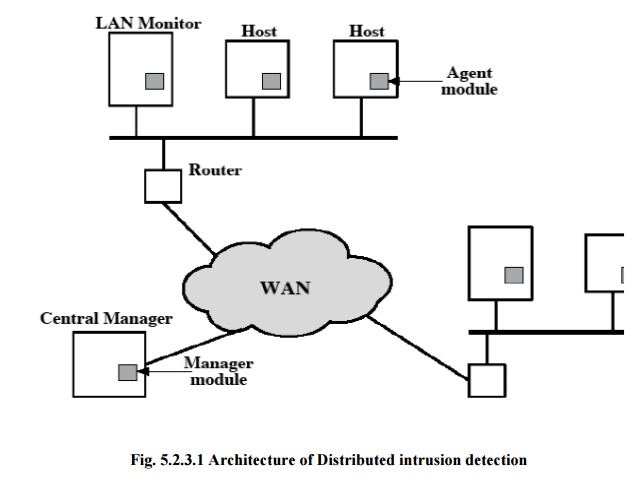

The figure above shows the overall architecture, which consists of three main components:

· Host agent module: An audit collection module operating as a background process on a monitored system. Its purpose is to collect data on security-related events on the host and transmit these to the central manager.

· LAN monitor agent module: Operates in the same fashion as a host agent module except that it analyzes LAN traffic and reports the results to the central manager.

· Central manager module: Receives reports from LAN monitor and host agents and processes and correlates these reports to detect intrusion.

The scheme is designed to be independent of any operating system or system auditing implementation.

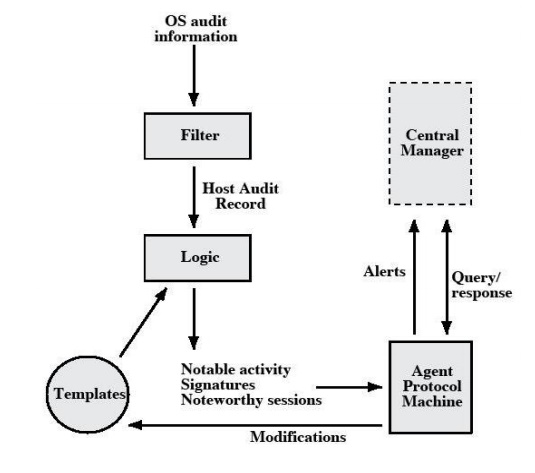

The agent modules work as follows:

· The agent captures each audit record produced by the native audit collection system.

· A filter is applied that retains only those records that are of security interest.

· These records are then reformatted into a standardized format referred to as the host audit record (HAR).

· Next, a template-driven logic module analyzes the records for suspicious activity.

  o At the lowest level, the agent scans for notable events that are of interest independent of any past events. Examples include failed file accesses, accessing system files, and changing a file's access control.

  o At the next higher level, the agent looks for sequences of events, such as known attack patterns (signatures).

  o Finally, the agent looks for anomalous behavior of an individual user based on a historical profile of that user, such as number of programs executed, number of files accessed, and the like.

· When suspicious activity is detected, an alert is sent to the central manager.

· The central manager includes an expert system that can draw inferences from received data.

· The manager may also query individual systems for copies of HARs to correlate with those from other agents.

· The LAN monitor agent also supplies information to the central manager.

· The LAN monitor agent audits host-host connections, services used, and volume of traffic.

· It searches for significant events, such as sudden changes in network load, the use of security-related services, and network activities such as rlogin.
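The host agent's pipeline (filter, reformat into a HAR, then three levels of analysis) can be sketched as a single class. Everything concrete here is an assumption for illustration: the `HAR` fields, the native record keys (`type`, `user`, `target`, `ok`), the filter set, the failed-login signature, treating `/etc/` as "system files", and a fixed per-user file limit standing in for a learned profile. Alerts are collected in a list rather than actually sent to a central manager.

```python
from dataclasses import dataclass

@dataclass
class HAR:
    """Hypothetical host audit record: the agent's standardized record format."""
    user: str
    event: str      # e.g. "open", "chmod", "login"
    target: str     # file path or account name
    success: bool

# Assumed filter criterion: native event types of security interest.
SECURITY_EVENTS = {"open", "chmod", "login"}

# Hypothetical signature: three failed logins followed by a successful one.
SIGNATURE = [("login", False), ("login", False), ("login", False), ("login", True)]

class HostAgent:
    def __init__(self, files_limit: int):
        self.files_limit = files_limit  # stands in for a learned per-user profile
        self.recent = {}                # user -> last few (event, success) pairs
        self.files_opened = {}          # user -> number of files opened so far
        self.alerts = []                # (level, HAR) pairs destined for the central manager

    def process(self, native: dict) -> None:
        # Filter: retain only records of security interest.
        if native["type"] not in SECURITY_EVENTS:
            return
        # Reformat into a HAR (the native field names are assumptions).
        har = HAR(native["user"], native["type"], native["target"], native["ok"])

        # Lowest level: notable events, independent of any past events.
        notable = (
            (har.event == "open" and not har.success)
            or har.event == "chmod"
            or har.target.startswith("/etc/")
        )
        if notable:
            self.alerts.append(("notable", har))

        # Next level: sequences of events matching a known signature.
        window = (self.recent.get(har.user, []) + [(har.event, har.success)])[-len(SIGNATURE):]
        self.recent[har.user] = window
        if window == SIGNATURE:
            self.alerts.append(("signature", har))

        # Highest level: deviation from the user's historical profile.
        if har.event == "open":
            count = self.files_opened.get(har.user, 0) + 1
            self.files_opened[har.user] = count
            if count == self.files_limit + 1:  # alert once, when the limit is first exceeded
                self.alerts.append(("profile", har))
```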

The architecture is quite general and flexible. It offers a foundation for a machine-independent approach that can expand from stand-alone intrusion detection to a system that is able to correlate activity from a number of sites and networks to detect suspicious activity that would otherwise remain undetected.

4 Honeypots

A relatively recent innovation in intrusion detection technology is the honeypot. Honeypots are decoy systems that are designed to lure a potential attacker away from critical systems. Honeypots are designed to:

· divert an attacker from accessing critical systems;

· collect information about the attacker's activity;

· encourage the attacker to stay on the system long enough for administrators to respond.

These systems are filled with fabricated information designed to appear valuable but that a legitimate user of the system wouldn't access. Thus, any access to the honeypot is suspect.

5 Intrusion Detection Exchange Format

To facilitate the development of distributed intrusion detection systems that can function across a wide range of platforms and environments, standards are needed to support interoperability. Such standards are the focus of the IETF Intrusion Detection Working Group.

The outputs of this working group include the following:

a. A requirements document, which describes the high-level functional requirements for communication between intrusion detection systems and with management systems, including the rationale for those requirements.

b. A common intrusion language specification, which describes data formats that satisfy the requirements.

c. A framework document, which identifies existing protocols best used for communication between intrusion detection systems and describes how the devised data formats relate to them.
