Chapter: Security in Computing : Designing Trusted Operating Systems

What Is a Trusted System?


Before we begin to examine a trusted operating system in detail, let us look more carefully at the terminology involved in understanding and describing trust. What would it take for us to consider something secure? The word secure reflects a dichotomy: Something is either secure or not secure. If secure, it should withstand all attacks, today, tomorrow, and a century from now. And if we claim that it is secure, you either accept our assertion (and buy and use it) or reject it (and either do not use it or use it but do not trust it). How does security differ from quality? If we claim that something is good, you are less interested in our claims and more interested in an objective appraisal of whether the thing meets your performance and functionality needs. From this perspective, security is only one facet of goodness or quality; you may choose to balance security with other characteristics (such as speed or user friendliness) to select a system that is best, given the choices you may have. In particular, the system you build or select may be pretty good, even though it may not be as secure as you would like it to be.

We say that software is trusted software if we know that the code has been rigorously developed and analyzed, giving us reason to trust that the code does what it is expected to do and nothing more. Typically, trusted code can be a foundation on which other, untrusted, code runs. That is, the untrusted system's quality depends, in part, on the trusted code; the trusted code establishes the baseline for security of the overall system. In particular, an operating system can be trusted software when there is a basis for trusting that it correctly controls the accesses of components or systems run from it. For example, the operating system might be expected to limit users' accesses to certain files.
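As a rough illustration of what limiting users' accesses to certain files might look like, the following Python sketch models a single access check that every request must pass through. The names `ACCESS_RULES`, `check_access`, and `open_file`, along with the sample users and path, are hypothetical and are not taken from any real operating system.

```python
# Hypothetical access rules: (user, file) -> set of permitted operations.
ACCESS_RULES = {
    ("alice", "/payroll/salaries.db"): {"read", "write"},
    ("bob", "/payroll/salaries.db"): {"read"},
}


def check_access(user: str, path: str, operation: str) -> bool:
    """Return True only if the rules explicitly grant the operation.

    Anything not listed is denied (default deny).
    """
    return operation in ACCESS_RULES.get((user, path), set())


def open_file(user: str, path: str, operation: str) -> str:
    """Mediate every request: consult the rules before touching the file."""
    if not check_access(user, path, operation):
        raise PermissionError(f"{user} may not {operation} {path}")
    # A real system would perform the operation here; this sketch only reports it.
    return f"{operation} granted to {user} on {path}"
```

The point of the sketch is the default-deny structure: a request succeeds only when a rule explicitly allows it, so an unknown user or an unlisted file is refused without any special-case code.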

To trust any program, we base our trust on rigorous analysis and testing, looking for certain key characteristics:

· Functional correctness. The program does what it is supposed to, and it works correctly.

· Enforcement of integrity. Even if presented with erroneous commands or commands from unauthorized users, the program maintains the correctness of the data with which it has contact.

· Limited privilege. The program is allowed to access secure data, but the access is minimized, and neither the access rights nor the data are passed along to other untrusted programs or back to an untrusted caller.

· Appropriate confidence level. The program has been examined and rated at a degree of trust appropriate for the kind of data and environment in which it is to be used.

Trusted software is often used as a safe way for general users to access sensitive data. Trusted programs are used to perform limited (safe) operations for users without allowing the users to have direct access to sensitive data.
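One way to picture a trusted program performing a limited operation is a password checker: callers may ask whether a password matches, but they never receive the stored data. The sketch below is an assumption-laden illustration, not a description of any particular system. The class `PasswordStore` and its methods are hypothetical, and Python's leading underscore is only a naming convention; in a real system the separation would be enforced by the operating system rather than by the language.

```python
import hashlib
import hmac
import os


class PasswordStore:
    """Hypothetical trusted component guarding sensitive password data."""

    def __init__(self) -> None:
        # Sensitive data: per-user (salt, hash) pairs, never returned to callers.
        self._records = {}

    @staticmethod
    def _digest(password: str, salt: bytes) -> bytes:
        # PBKDF2 from the standard library; the iteration count is illustrative.
        return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)

    def set_password(self, user: str, password: str) -> None:
        """Store a salted hash of the password, not the password itself."""
        salt = os.urandom(16)
        self._records[user] = (salt, self._digest(password, salt))

    def verify(self, user: str, password: str) -> bool:
        """The only question callers may ask: does this password match?"""
        record = self._records.get(user)
        if record is None:
            return False
        salt, stored = record
        # Constant-time comparison avoids leaking how many bytes matched.
        return hmac.compare_digest(stored, self._digest(password, salt))
```

The interface is deliberately narrow: `verify` returns only a yes-or-no answer, which corresponds to the limited (safe) operation described above, while the salts and hashes stay inside the trusted component.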

Security professionals prefer to speak of trusted instead of secure operating systems. A trusted system connotes one that meets the intended security requirements, is of high enough quality, and justifies the user's confidence in that quality. That is, trust is perceived by the system's receiver or user, not by its developer, designer, or manufacturer. As a user, you may not be able to evaluate that trust directly. You may trust the design, a professional evaluation, or the opinion of a valued colleague. But in the end, it is your responsibility to sanction the degree of trust you require.

It is important to realize

that there can be degrees of trust; unlike security, trust is not a dichotomy.

For example, you trust certain friends with deep secrets, but you trust others

only to give you the time of day. Trust is a characteristic that often grows

over time, in accordance with evidence and experience. For instance, banks

increase their trust in borrowers as the borrowers repay loans as expected;

borrowers with good trust (credit) records can borrow larger amounts. Finally,

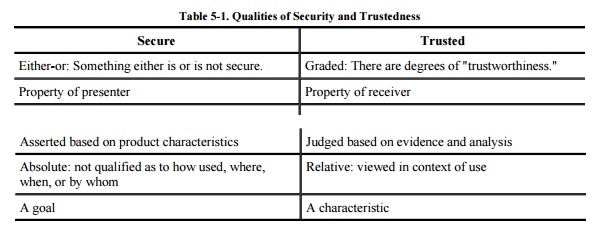

trust is earned, not claimed or conferred. The comparison in Table 5-1 highlights some of these distinctions.

The adjective trusted appears many times in this chapter, as in trusted process (a process that can affect system security, or a process whose incorrect or malicious execution is capable of violating system security policy), trusted product (an evaluated and approved product), trusted software (the software portion of a system that can be relied upon to enforce security policy), trusted computing base (the set of all protection mechanisms within a computing system, including hardware, firmware, and software, that together enforce a unified security policy over a product or system), or trusted system (a system that employs sufficient hardware and software integrity measures to allow its use for processing sensitive information). These definitions are paraphrased from [NIS91b]. Common to these definitions are the concepts of

· enforcement of security policy

· sufficiency of measures and mechanisms

· evaluation

In studying trusted operating systems, we examine closely what makes them trustworthy.
