Chapter: Security in Computing : Designing Trusted Operating Systems

Security Features of Trusted Operating Systems

Unlike regular operating systems, trusted

systems incorporate technology to address both features and assurance. The

design of a trusted system is delicate, involving selection of an appropriate

and consistent set of features together with an appropriate degree of assurance

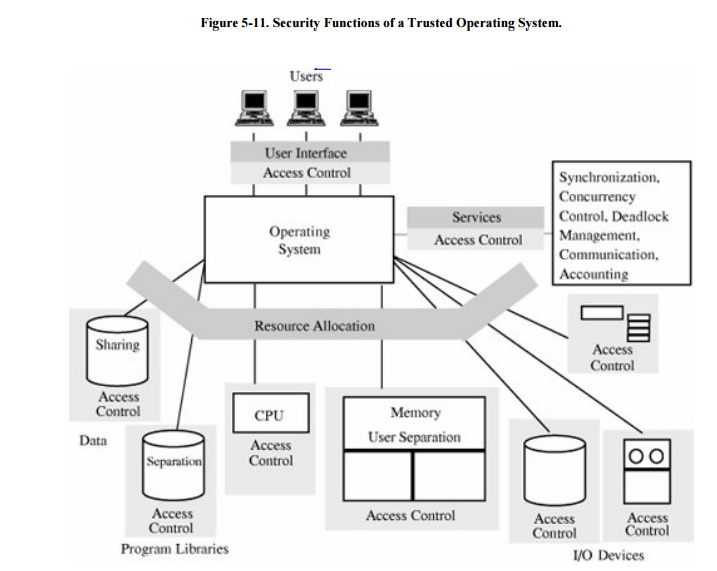

that the features have been assembled and implemented correctly. Figure 5-11 illustrates how a trusted operating

system differs from an ordinary one. Compare it with Figure 5-10. Notice how objects are accompanied or surrounded

by an access control mechanism, offering far more protection and separation

than does a conventional operating system. In addition, memory is separated by

user, and data and program libraries have controlled sharing and separation.

In this section, we consider

in more detail the key features of a trusted operating system, including

user identification and authentication

mandatory access control

discretionary access control

object reuse protection

complete mediation

trusted path

audit

audit log reduction

intrusion detection

We consider each of these

features in turn.

Identification and Authentication

Identification is at the root

of much of computer security. We must be able to tell who is requesting access

to an object, and we must be able to verify the subject's identity. As we see

shortly, most access control, whether mandatory or discretionary, is based on

accurate identification. As described in Chapter

4, identification involves two steps: finding out who the access

requester is and verifying that the requester is indeed who he/she/it claims to

be. That is, we want to establish an identity and then authenticate or verify

that identity. Trusted operating systems require secure identification of

individuals, and each individual must be uniquely identified.
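
The two steps described above, establishing an identity and then verifying it, can be sketched in a few lines. This is only an illustration under stated assumptions, not the mechanism of any particular trusted system: the in-memory table `USERS`, the helper names `register`, `identify`, and `authenticate`, and the choice of salted PBKDF2 with 100,000 iterations are all made up for the example.

```python
import hashlib
import hmac
import os

# Hypothetical in-memory registry: user ID -> (salt, password hash).
USERS = {}

ITERATIONS = 100_000  # assumed work factor, chosen only for this example


def _hash(password: str, salt: bytes) -> bytes:
    """Derive a salted hash so that passwords are never stored in the clear."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)


def register(user_id: str, password: str) -> None:
    """Enroll an individual; each individual must be uniquely identified."""
    if user_id in USERS:
        raise ValueError("user IDs must be unique")
    salt = os.urandom(16)
    USERS[user_id] = (salt, _hash(password, salt))


def identify(user_id: str) -> bool:
    """Step 1: find out who the access requester claims to be."""
    return user_id in USERS


def authenticate(user_id: str, password: str) -> bool:
    """Step 2: verify that the requester is who he, she, or it claims to be."""
    if not identify(user_id):
        return False
    salt, stored = USERS[user_id]
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(stored, _hash(password, salt))
```

A caller would first `register("ana", ...)` and later call `authenticate("ana", ...)`; an unknown user ID fails at the identification step before any password is checked.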

Mandatory and Discretionary Access Control

Mandatory access control (MAC) means that access control policy

decisions are made beyond the control of the individual owner of an object. A central authority determines

what information is to be accessible by whom, and the user cannot change access

rights. An example of MAC occurs in military security, where an individual data

owner does not decide who has a top-secret clearance; neither can the owner

change the classification of an object from top secret to secret.

By contrast, discretionary access control (DAC), as

its name implies, leaves a certain amount of access control to the discretion

of the object's owner or to anyone else who is authorized to control the object's

access. The owner can determine who should have access rights to an object and

what those rights should be. Commercial environments typically use DAC to allow

anyone in a designated group, and sometimes additional named individuals, to

change access. For example, a corporation might establish access controls so

that the accounting group can have access to personnel files. But the

corporation may also allow Ana and Jose to access those files, too, in their

roles as directors of the Inspector General's office. Typically, DAC access

rights can change dynamically. The owner of the accounting file may add Renee

and remove Walter from the list of allowed accessors, as business needs

dictate.

MAC and DAC can both be

applied to the same object. MAC has precedence over DAC, meaning that of all

those who are approved for MAC access, only those who also pass DAC will

actually be allowed to access the object. For example, a file may be classified

secret, meaning that only people cleared for secret access can potentially

access the file. But of those millions of people granted secret access by the

government, only people on project "deer park" or in the

"environmental" group or at location "Fort Hamilton" are

actually allowed access.
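
The precedence of MAC over DAC can be sketched as two checks that must both succeed. The sketch below is a simplification under stated assumptions: sensitivity levels are a simple linear ordering with no compartments, only read access is modeled, and the names `Subject`, `Obj`, `mac_permits`, `dac_permits`, and `may_read` are hypothetical.

```python
from dataclasses import dataclass, field

# Assumed linear ordering of sensitivity levels (no compartments).
LEVELS = {"unclassified": 0, "confidential": 1, "secret": 2, "top secret": 3}


@dataclass
class Subject:
    name: str
    clearance: str                      # assigned by the central authority
    groups: set = field(default_factory=set)


@dataclass
class Obj:
    name: str
    classification: str                 # MAC label; the owner cannot change it
    allowed_groups: set = field(default_factory=set)  # DAC list, set by the owner
    allowed_users: set = field(default_factory=set)   # DAC list, set by the owner


def mac_permits(subject: Subject, obj: Obj) -> bool:
    """Mandatory check: the clearance must be at least the classification."""
    return LEVELS[subject.clearance] >= LEVELS[obj.classification]


def dac_permits(subject: Subject, obj: Obj) -> bool:
    """Discretionary check: the owner must have listed the user or one of the user's groups."""
    return subject.name in obj.allowed_users or bool(subject.groups & obj.allowed_groups)


def may_read(subject: Subject, obj: Obj) -> bool:
    """MAC takes precedence: DAC is consulted only for subjects that pass MAC."""
    return mac_permits(subject, obj) and dac_permits(subject, obj)
```

With a file classified secret and restricted to the "deer park" group, a secret-cleared member of that group is admitted, a secret-cleared outsider fails the DAC check, and a group member with only a confidential clearance fails the MAC check.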

Object Reuse Protection

One way that a computing

system maintains its efficiency is to reuse objects. The operating system

controls resource allocation, and as a resource is freed for use by other users

or programs, the operating system permits the next user or program to access the

resource. But reusable objects must be carefully controlled, lest they create a

serious vulnerability. To see why, consider what happens when a new file is

created. Usually, space for the file comes from a pool of freed, previously

used space on a disk or other storage device. Released space is returned to the

pool "dirty," that is, still containing the data from the previous

user. Because most users would write to a file before trying to read from it,

the new user's data obliterate the previous owner's, so there is no

inappropriate disclosure of the previous user's information. However, a

malicious user may claim a large amount of disk space and then scavenge for

sensitive data. This kind of attack is called object reuse. The

problem is not limited to disk; it can occur with main memory, processor

registers and storage, other magnetic media (such as disks and tapes), or any other reusable storage medium.

To prevent object reuse leakage, operating

systems clear (that is, overwrite) all space to be reassigned before allowing

the next user to have access to it. Magnetic media are particularly vulnerable

to this threat. Very precise and expensive equipment can sometimes separate the

most recent data from the data previously recorded, from the data before that,

and so forth. This threat, called magnetic

remanence, is beyond the scope of this book. For more information, see [NCS91a]. In any case, the operating system must

take responsibility for "cleaning" the resource before permitting

access to it. (See Sidebar 5-4 for a

different kind of persistent data.)
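
The clearing step can be illustrated with a toy allocator. This is a minimal sketch, assuming fixed-size blocks held in memory; the class name `BlockPool` and its methods are hypothetical, and a real system would apply the same idea to disk blocks, memory pages, and registers.

```python
class BlockPool:
    """Toy pool of fixed-size storage blocks (hypothetical, for illustration only)."""

    def __init__(self, block_count: int, block_size: int) -> None:
        self.block_size = block_size
        self._free = [bytearray(block_size) for _ in range(block_count)]

    def allocate(self) -> bytearray:
        """Hand out a block, overwriting any residue from the previous user first."""
        if not self._free:
            raise MemoryError("no free blocks")
        block = self._free.pop()
        block[:] = bytes(self.block_size)  # clear (overwrite with zeros) before reassignment
        return block

    def release(self, block: bytearray) -> None:
        """Return a block to the pool "dirty"; it is cleared on the next allocation."""
        self._free.append(block)
```

Clearing at allocation time, rather than at release time, is a design choice made here for simplicity: it guarantees that no path hands out an uncleared block, at the cost of leaving residue in the free pool until the block is reused.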

Complete Mediation

For mandatory or

discretionary access control to be effective, all accesses must be controlled.

It is insufficient to control access only to files if an attacker can acquire

access through memory or an outside port or a network or a covert channel. The

design and implementation difficulty of a trusted operating system rises

significantly as more paths for access must be controlled. Highly trusted

operating systems perform complete mediation, meaning that all accesses are

checked.
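
One way to picture complete mediation is a single choke point through which every access must pass, with the policy consulted on each request rather than only on the first. The sketch below assumes a policy represented as a set of permitted (subject, object, mode) triples; the names `ReferenceMonitor` and `AccessDenied` are hypothetical, and a real system would also have to close the other paths mentioned above (memory, ports, networks, covert channels).

```python
class AccessDenied(Exception):
    """Raised when the policy does not permit a requested access."""


class ReferenceMonitor:
    """Toy choke point: objects are reachable only through access()."""

    def __init__(self, policy) -> None:
        # Assumed policy representation: permitted (subject, object, mode) triples.
        self._policy = set(policy)
        self._objects = {}
        self.checks = 0  # number of policy checks performed

    def store(self, name: str, value) -> None:
        self._objects[name] = value

    def revoke(self, subject: str, name: str, mode: str) -> None:
        self._policy.discard((subject, name, mode))

    def access(self, subject: str, name: str, mode: str):
        """Check the policy on every request; nothing is cached between calls."""
        self.checks += 1
        if (subject, name, mode) not in self._policy:
            raise AccessDenied(f"{subject} may not {mode} {name}")
        return self._objects[name]
```

Because the check is repeated on each call, a revocation takes effect on the very next access, even for a subject that was previously admitted.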

Trusted Path

One way for a malicious user to gain

inappropriate access is to "spoof" users, making them think they are

communicating with a legitimate security enforcement system when in fact their

keystrokes and commands are being intercepted and analyzed. For example, a

malicious spoofer may place a phony user ID and password system between the

user and the legitimate system. As the illegal system queries the user for

identification information, the spoofer captures the real user ID and password;

the spoofer can use these bona fide entry data to access the system later on,

probably with malicious intent. Thus, for critical operations such as setting a

password or changing access permissions, users want an unmistakable

communication, called a trusted path,

to ensure that they are supplying protected information only to a legitimate

receiver. On some trusted systems, the user invokes a trusted path by pressing

a unique key sequence that, by design, is intercepted directly by the security

enforcement software; on other trusted systems, security-relevant changes can

be made only at system startup, before any processes other than the security

enforcement code run.

Sidebar 5-4: Hidden, But Not Forgotten

When is something gone? When you press

the delete key, it goes away, right? Wrong.

By now you know that deleted files are

not really deleted; they are moved to the recycle bin. Deleted mail messages go

to the trash folder. And temporary Internet pages hang around for a few days

waiting for repeat interest. But you sort of expect keystrokes to disappear

with the delete key.

Microsoft Word saves all changes and

comments since a document was created. Suppose you and a colleague collaborate

on a document, you refer to someone else's work, and your colleague inserts the

comment "this research is rubbish." You concur, so you delete the

reference and your colleague's comment. Then you submit the paper to a journal

for review and, as luck would have it, your paper is sent to the author whose

work you disparaged. Then the author turns on change marking and finds not just

the deleted reference but the deletion of your colleague's comment. (See [BYE04].)

If you really wanted to remove that text, you should have used the Microsoft

Hidden Data Removal Tool. (Of course, inspecting the file with a binary editor

is the only way you can be sure the offending text is truly gone.)

The Adobe PDF document format is a

simpler format intended to provide a platform-independent way to display (and

print) documents. Some people convert a Word document to PDF to eliminate

hidden sensitive data. That does remove the change-tracking data; but it

preserves even invisible output. Some people create a white box to paste over

data to be hidden, for example, to cut out part of a map or to hide a profit

column in a table. When you print the file, the box hides your sensitive

information. But the PDF format preserves all layers in a document, so your

recipient can effectively peel off the white box to reveal the hidden content.

The NSA issued a report detailing steps to ensure that deletions are truly

deleted [NSA05].

Or if you

want to show that something was there and has been deleted, you can do that

with the Microsoft Redaction Tool, which, presumably, deletes the underlying

text and replaces it with a thick black line.

Accountability and Audit

A security-relevant action

may be as simple as an individual access to an object, such as a file, or it

may be as major as a change to the central access control database affecting

all subsequent accesses. Accountability usually entails maintaining a log of

security-relevant events that have occurred, listing each event and the person

responsible for the addition, deletion, or change. This audit log must obviously

be protected from outsiders, and every security-relevant event must be

recorded.
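
A log of this kind can be sketched as an append-only list of records, each naming the event and the party responsible. This is a minimal sketch, assuming an in-memory log; the names `AuditRecord` and `AuditLog` and the record fields are hypothetical, and protecting the log from outsiders (for example, by storing it where only the security enforcement code can write) is outside what the sketch shows.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)  # records cannot be modified once written
class AuditRecord:
    timestamp: float
    subject: str   # the person (or process) responsible
    action: str    # e.g. "add", "delete", "change", "read"
    obj: str       # the object affected


class AuditLog:
    """Toy append-only log: records can be added and read, never edited or removed."""

    def __init__(self) -> None:
        self._records = []

    def record(self, subject: str, action: str, obj: str) -> AuditRecord:
        rec = AuditRecord(time.time(), subject, action, obj)
        self._records.append(rec)
        return rec

    def records(self) -> tuple:
        """Return a read-only snapshot of the log."""
        return tuple(self._records)

    def by_subject(self, subject: str) -> list:
        """List every recorded event for which the given subject is responsible."""
        return [r for r in self._records if r.subject == subject]
```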

Audit Log Reduction

Theoretically, the general

notion of an audit log is appealing because it allows responsible parties to

evaluate all actions that affect all protected elements of the system. But in

practice an audit log may be too difficult to handle, owing to its volume and

the effort of analyzing it. To see why, consider what information would have to be collected and

analyzed. In the extreme (such as where the data involved can affect a

business' viability or a nation's security), we might argue that every

modification or even each character read from a file is potentially security

relevant; the modification could affect the integrity of data, or the single

character could divulge the only really sensitive part of an entire file. And

because the path of control through a program is affected by the data the

program processes, the sequence of individual instructions is also potentially

security relevant. If an audit record were to be created for every access to a

single character from a file and for every instruction executed, the audit log

would be enormous. (In fact, it would be impossible to audit every instruction,

because then the audit commands themselves would have to be audited. In turn,

these commands would be implemented by instructions that would have to be

audited, and so on forever.)

In most trusted systems, the

problem is simplified by auditing only the opening (first access) and

closing (last access) of files or similar objects. Similarly, objects such

as individual memory locations, hardware registers, and instructions are not

audited. Even with these restrictions, audit logs tend to be very large. Even a

simple word processor may open fifty or more support modules (separate files)

when it begins, it may create and delete a dozen or more temporary files during

execution, and it may open many more drivers to handle specific tasks such as

complex formatting or printing. Thus, one simple program can easily cause a hundred

files to be opened and closed, and complex systems can cause thousands of files

to be accessed in a relatively short time. On the other hand, some systems

continuously read from or update a single file. A bank teller may process

transactions against the general customer accounts file throughout the entire

day; what is significant is not that the teller accessed the accounts file, but

which entries in the file were accessed. Thus, audit at the level of file

opening and closing is in some cases too much data and in other cases not

enough to meet security needs.

A final difficulty is the

"needle in a haystack" phenomenon. Even if the audit data could be

limited to the right amount, typically many legitimate accesses and perhaps one

attack will occur. Finding the one attack access out of a thousand legitimate

accesses can be difficult. A corollary to this problem is the one of

determining who or what does the analysis. Does the system administrator sit

and analyze all data in the audit log? Or do the developers write a program to

analyze the data? If the latter, how can we automatically recognize a pattern

of unacceptable behavior? These issues are open questions being addressed not

only by security specialists but also by experts in artificial intelligence and

pattern recognition.

Sidebar 5-5 illustrates how

the volume of audit log data can get out of hand very quickly. Some trusted

systems perform audit reduction, using separate tools to reduce the

volume of the audit data. In this way, if an event occurs, all the data have

been recorded and can be consulted directly. However, for most analysis, the

reduced audit log is enough to review.
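
Audit reduction can be sketched as a separate pass that derives a smaller view while leaving the full log untouched. The sketch below assumes events are simple (subject, action, object) tuples and uses two reduction rules chosen only for illustration: collapsing repeated identical events into a count, and dropping events on objects the analyst has declared routine. The function name `reduce_log` and the parameter `ignore_objects` are hypothetical.

```python
from collections import Counter


def reduce_log(events, ignore_objects=frozenset()):
    """Summarize a full audit log without modifying it.

    events: iterable of (subject, action, obj) tuples.
    Returns a list of ((subject, action, obj), count) pairs in order of first appearance.
    """
    counts = Counter()
    for subject, action, obj in events:
        if obj in ignore_objects:
            continue  # routine object, omitted from the reduced view only
        counts[(subject, action, obj)] += 1
    return list(counts.items())
```

The full log remains available for direct consultation when an event needs investigation; only the reduced view is reviewed routinely.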

Intrusion Detection

Closely related to audit

reduction is the ability to detect security lapses, ideally while they occur.

As the State Department example in Sidebar 5-5 shows, there may well be too much

information in the audit log for a human to analyze, but the computer can help

correlate independent data. Intrusion

detection software builds patterns of normal system usage, triggering an

alarm any time the usage seems abnormal. After a decade of promising research

results in intrusion detection, products are now commercially available. Some

trusted operating systems include a primitive degree of intrusion detection

software. See Chapter 7 for a more

detailed description of intrusion detection systems.
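
The idea of building a pattern of normal usage and raising an alarm on deviation can be sketched with a simple statistical baseline. This is only a sketch under stated assumptions: usage is reduced to a single number per user (for example, files opened per day), "abnormal" means more than three standard deviations from that user's historical mean, and the class name `UsageProfile` is hypothetical. Commercial intrusion detection products use far richer models.

```python
from statistics import mean, pstdev


class UsageProfile:
    """Toy anomaly detector based on each user's historical activity counts."""

    def __init__(self, threshold: float = 3.0) -> None:
        self.threshold = threshold  # assumed alarm threshold, in standard deviations
        self._history = {}

    def train(self, user: str, counts) -> None:
        """Record observations of normal usage for a user."""
        self._history[user] = list(counts)

    def is_abnormal(self, user: str, observed: float) -> bool:
        """Return True if the observed usage deviates too far from the baseline."""
        history = self._history.get(user)
        if not history:
            return True  # assumption: with no baseline, treat the activity as abnormal
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            return observed != mu
        return abs(observed - mu) / sigma > self.threshold
```

A teller whose baseline is roughly a hundred file accesses a day would not trigger an alarm at 104 accesses, but would at 500.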

Although the problems are daunting, there have

been many successful implementations of trusted operating systems. In the

following section, we examine some of them. In particular, we consider three

properties: kernelized design (a result of least privilege and economy of

mechanism), isolation (the logical extension of least common mechanism), and

ring-structuring (an example of open design and complete mediation).

Sidebar 5-5: Theory vs. Practice: Audit Data Out of Control

In the 1980s, the U.S. State Department

was enhancing the security of the automated systems that handled diplomatic

correspondence among its embassies worldwide. One of the security requirements

for an operating system enhancement requested an audit log of every transaction

related to protected documents. The requirement included the condition that the

system administrator was to review the audit log daily, looking for signs of

malicious behavior.

In theory, this requirement was sensible,

since revealing the contents of protected documents could at least embarrass

the nation, even endanger it. But, in fact, the requirement was impractical.

The State Department ran a test system with five users, printing out the audit

log for ten minutes. At the end of the test period, the audit log generated a

stack of paper more than a foot high! Because the actual system involved

thousands of users working around the clock, the test demonstrated that it

would have been impossible for the system administrator to review the log, even

if that were all the system administrator had to do every day.

The State

Department went on to consider other options for detecting malicious behavior,

including audit log reduction and automated review of the log's contents.
