Chapter: Security in Computing : Designing Trusted Operating Systems

Layered Design

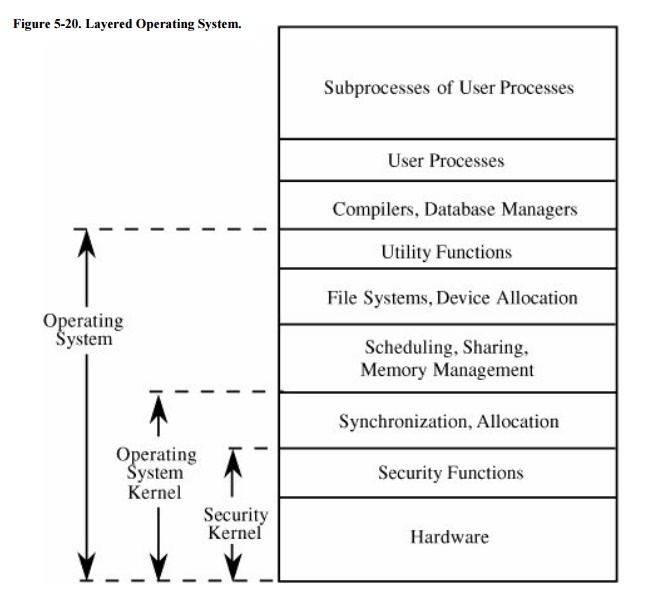

As described previously, a kernelized operating system consists of at least four levels: hardware, kernel, operating system, and user. Each of these layers can include sublayers. For example, in [SCH83b], the kernel has five distinct layers. At the user level, it is not uncommon to have quasi-system programs, such as database managers or graphical user interface shells, that constitute separate layers of security themselves.

Layered Trust

As we have seen earlier in this chapter (in Figure 5-15), the layered view of a secure operating system can be depicted as a series of concentric circles, with the most sensitive operations in the innermost layers. Then, the trustworthiness and access rights of a process can be judged by the process's proximity to the center: The more trusted processes are closer to the center. But we can also depict the trusted operating system in layers as a stack, with the security functions closest to the hardware. Such a system is shown in Figure 5-20.

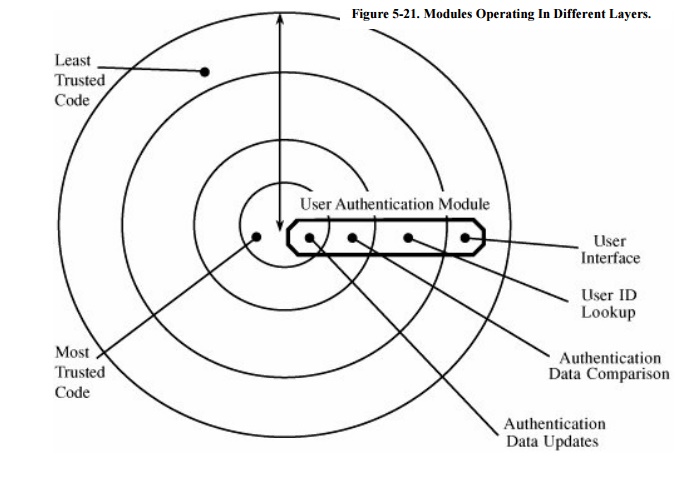
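The idea that trust follows proximity to the center can be made concrete with a small sketch. It assumes, purely for illustration, that each layer is given a ring number with 0 as the innermost and most trusted; the names `Process` and `may_perform` are hypothetical and do not come from any particular system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Process:
    name: str
    ring: int  # assumed convention: 0 is the innermost, most trusted layer


def may_perform(process: Process, required_ring: int) -> bool:
    """Allow an operation only if the process sits at or inside the required ring."""
    return process.ring <= required_ring


# Illustrative processes at opposite ends of the trust scale.
kernel_proc = Process("security_kernel", ring=0)
editor = Process("text_editor", ring=3)

print(may_perform(kernel_proc, required_ring=0))  # kernel may do kernel-level work
print(may_perform(editor, required_ring=0))       # user program may not
print(may_perform(editor, required_ring=3))       # but may do user-level work
```

A single integer comparison is enough here because the layers are totally ordered; a real system would attach further checks to each operation.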

In this design, some activities related to protection functions are performed outside the security kernel. For example, user authentication may include accessing a password table, challenging the user to supply a password, verifying the correctness of the password, and so forth. The disadvantage of performing all these operations inside the security kernel is that some of the operations (such as formatting the user-terminal interaction and searching for the user in a table of known users) do not warrant high security.

Alternatively, we can implement a single logical function in several different modules; we call this a layered design. Trustworthiness and access rights are the basis of the layering. In other words, a single function may be performed by a set of modules operating in different layers, as shown in Figure 5-21. The modules of each layer perform operations of a certain degree of sensitivity.

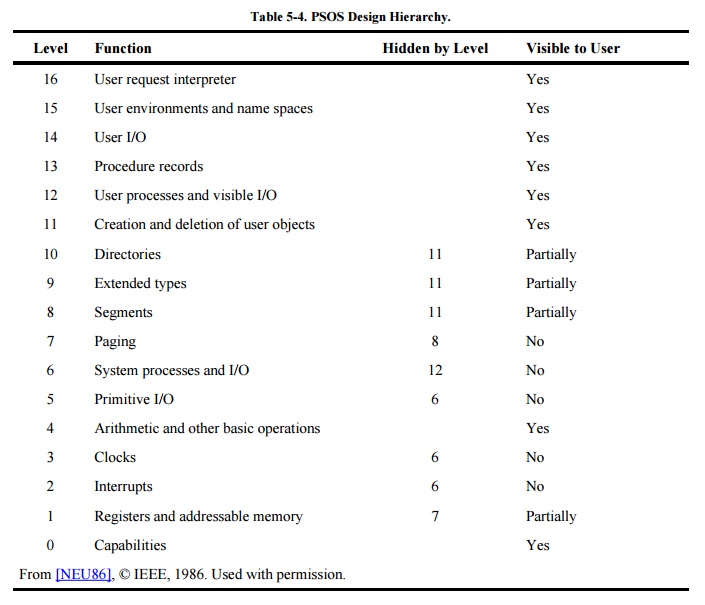
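As a rough sketch of one logical function spread across layers, the authentication example from the text might be split as follows. The three class names, the division of work among them, and the use of PBKDF2 for password hashing are all assumptions made for illustration; the source does not prescribe any of them.

```python
import hashlib
import hmac

ITERATIONS = 100_000  # illustrative work factor, not a recommendation


def hash_password(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)


class SecurityKernel:
    """Innermost layer: the only code that sees stored password hashes."""

    def __init__(self, secrets):
        self._secrets = secrets  # user_id -> (salt, password hash)

    def verify(self, user_id: int, password: str) -> bool:
        entry = self._secrets.get(user_id)
        if entry is None:
            return False
        salt, stored = entry
        return hmac.compare_digest(hash_password(password, salt), stored)


class OperatingSystemLayer:
    """Middle layer: searches the table of known users, then asks the kernel."""

    def __init__(self, kernel: SecurityKernel, known_users):
        self._kernel = kernel
        self._known_users = known_users  # user name -> user_id

    def authenticate(self, name: str, password: str) -> bool:
        user_id = self._known_users.get(name)
        return user_id is not None and self._kernel.verify(user_id, password)


class LoginInterface:
    """Outermost layer: formats the user-terminal interaction only."""

    def __init__(self, os_layer: OperatingSystemLayer):
        self._os = os_layer

    def login(self, name: str, password: str) -> str:
        name = name.strip()
        if self._os.authenticate(name, password):
            return f"Welcome, {name}"
        return "Login failed"


salt = b"example-salt"  # fixed only to keep the sketch reproducible
kernel = SecurityKernel({1: (salt, hash_password("s3cret", salt))})
ui = LoginInterface(OperatingSystemLayer(kernel, {"alice": 1}))
print(ui.login(" alice ", "s3cret"))
print(ui.login("alice", "wrong"))
```

Only `SecurityKernel.verify` handles password material; the lookup and formatting steps, which the text says do not warrant high security, live in the outer layers and reach the kernel through a single narrow call.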

Neumann [NEU86] describes the layered structure used for the Provably Secure Operating System (PSOS). As shown in Table 5-4, some lower-level layers present some or all of their functionality to higher levels, but each layer properly encapsulates those things below itself.

A layered approach is another way to achieve encapsulation, discussed in Chapter 3. Layering is recognized as a good operating system design. Each layer uses the more central layers as services, and each layer provides a certain level of functionality to the layers farther out. In this way, we can "peel off" each layer and still have a logically complete system with less functionality. Layering presents a good example of how to trade off and balance design characteristics.

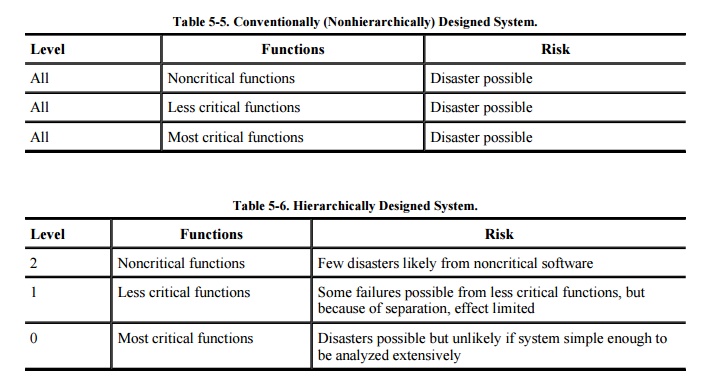

Another justification for layering is damage control. To see why, consider Neumann's [NEU86] two examples of risk, shown in Tables 5-5 and 5-6. In a conventional, nonhierarchically designed system (shown in Table 5-5), any problem, whether a hardware failure, software flaw, or unexpected condition, even in a supposedly non-security-relevant portion, can cause disaster because the effect of the problem is unbounded and because the system's design means that we cannot be confident that any given function has no (indirect) security effect. By contrast, as shown in Table 5-6, hierarchical structuring has two benefits:

Hierarchical structuring permits identification of the most critical parts, which can then be analyzed intensely for correctness, so the number of problems should be smaller.

Isolation limits effects of problems to the hierarchical levels at and above the point of the problem, so the effects of many problems should be confined.
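The containment argument can be illustrated with a toy model. It assumes the four levels named at the start of this section, ordered from innermost to outermost, and a hypothetical helper `affected_layers`; it models only which layers a fault can reach, not how badly each is harmed.

```python
LAYERS = ["hardware", "kernel", "operating system", "user"]  # innermost first


def affected_layers(layers, faulty, hierarchical):
    """Return the layers a fault in `faulty` could reach.

    In a hierarchical design the fault is confined to the faulty layer and
    the layers above it; without hierarchy its effect is treated as unbounded.
    """
    if not hierarchical:
        return list(layers)
    start = layers.index(faulty)
    return list(layers[start:])


print(affected_layers(LAYERS, "operating system", hierarchical=True))
print(affected_layers(LAYERS, "operating system", hierarchical=False))
```

Under these assumptions a fault in the operating system layer reaches only that layer and the user layer in the hierarchical case, while in the nonhierarchical case every layer must be considered at risk.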

These design properties (the kernel, separation, isolation, and hierarchical structure) have been the basis for many trustworthy system prototypes. They have stood the test of time as best design and implementation practices. (They are also being used in a different form of trusted operating system, as described in Sidebar 5-6.)

In the next section, we look at what gives us confidence in an operating system's security.

Sidebar 5-6: An Operating System for the Untrusting

The U.K. Regulation of Investigatory Powers Act (RIPA) was intended to broaden government surveillance capabilities, but privacy advocates worry that it can permit too much government eavesdropping.

Peter Fairbrother, a British mathematician, is programming a new operating system he calls M-o-o-t to keep the government at bay by carrying separation to the extreme. As described in The New Scientist [KNI02], Fairbrother's design has all sensitive data stored in encrypted form on servers outside the U.K. government's jurisdiction. Encrypted communications protect the file transfers from server to computer and back again. Each encryption key is used only once and isn't known by the user. Under RIPA, the government will have the power to require any user to produce the key for any message that user has encrypted. But if the user does not know the key, the user cannot surrender it.

Fairbrother admits that in the wrong hands M-o-o-t could benefit criminals, but he thinks the personal privacy benefits outweigh this harm.
