Chapter: Security in Computing : Designing Trusted Operating Systems

Kernelized Design

A kernel

is the part of an operating system that performs the lowest-level functions. In

standard operating system design, the kernel implements operations such as

synchronization, interprocess communication, message passing, and interrupt

handling. The kernel is also called a nucleus

or core. The notion of designing an

operating system around a kernel is described by Lampson and Sturgis [LAM76] and by Popek and Kline [POP78].

A security

kernel is responsible for enforcing the security mechanisms of the entire

operating system. The security kernel provides the security interfaces among

the hardware, operating system, and other parts of the computing system.

Typically, the operating system is designed so that the security kernel is

contained within the operating system kernel. Security kernels are discussed in

detail by Ames [AME83].

There are several good design reasons why

security functions may be isolated in a security kernel.

· Coverage. Every access to a protected object

must pass through the security kernel. In a system designed in this way, the

operating system can use the security kernel to ensure that every access is

checked.

· Separation. Isolating security mechanisms both

from the rest of the operating system and from the user space makes it easier

to protect those mechanisms from penetration by the operating system or the

users.

· Unity. All security functions are performed by

a single set of code, so it is easier to trace the cause of any problems that

arise with these functions.

· Modifiability. Changes to the security

mechanisms are easier to make and easier to test.

· Compactness. Because it performs only security

functions, the security kernel is likely to be relatively small.

· Verifiability. Being relatively small, the

security kernel can be analyzed rigorously. For example, formal methods can be

used to ensure that all security situations (such as states and state changes)

have been covered by the design.

Notice the similarity between these advantages

and the design goals of operating systems that we described earlier. These

characteristics also depend in many ways on modularity, as described in Chapter 3.

On the other hand,

implementing a security kernel may degrade system performance because the

kernel adds yet another layer of interface between user programs and operating

system resources. Moreover, the presence of a kernel does not guarantee that it

contains all security functions or that it has been implemented correctly. And

in some cases a security kernel can be quite large.

How do we balance these

positive and negative aspects of using a security kernel? The design and

usefulness of a security kernel depend somewhat on the overall approach to the

operating system's design. There are many design choices, each of which falls

into one of two types: Either the kernel is designed as an addition to the

operating system, or it is the basis of the entire operating system. Let us

look more closely at each design choice.

Reference Monitor

The most important part of a security kernel is

the reference monitor, the portion

that controls accesses to objects [AND72, LAM71]. A reference monitor is not

necessarily a single piece of code; rather, it is the collection of access

controls for devices, files, memory, interprocess communication, and other

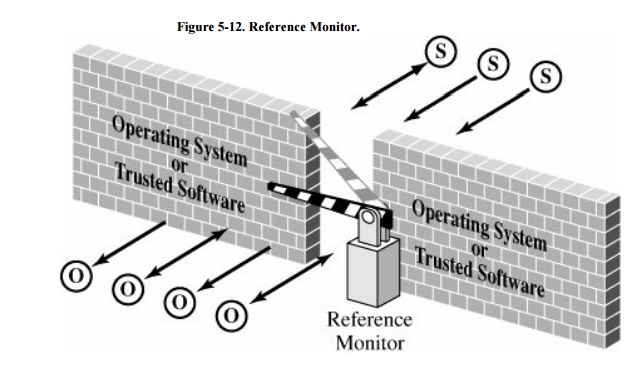

kinds of objects. As shown in Figure 5-12,

a reference monitor acts like a brick wall around the operating system or

trusted software.

A reference monitor must be

· tamperproof, that is, impossible to weaken or disable

· unbypassable, that is, always invoked when access to any object is required

· analyzable, that is, small enough to be subjected to analysis and testing, the completeness of which can be ensured

A reference monitor can

control access effectively only if it cannot be modified or circumvented by a

rogue process, and it is the single point through which all access requests

must pass. Furthermore, the reference monitor must function correctly if it is

to fulfill its crucial role in enforcing security. Because the likelihood of

correct behavior decreases as the complexity and size of a program increase,

the best assurance of correct policy enforcement is to build a small, simple,

understandable reference monitor.
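
The single checkpoint idea can be illustrated with a minimal Python sketch. It is not taken from any real operating system: the names `Request`, `ReferenceMonitor`, and the sample policy table are hypothetical, and the read-only mapping only stands in for the tamper protection that a real kernel would obtain from hardware support.

```python
from dataclasses import dataclass
from types import MappingProxyType


@dataclass(frozen=True)
class Request:
    """Hypothetical access request: who wants which right on which object."""
    subject: str
    obj: str
    right: str  # e.g. "read" or "write"


class ReferenceMonitor:
    """Hypothetical single checkpoint through which every request passes."""

    def __init__(self, policy):
        # Copy the policy into a read-only view so callers cannot alter it
        # through the monitor (a stand-in for real tamper protection).
        self._policy = MappingProxyType(
            {key: frozenset(rights) for key, rights in policy.items()}
        )

    def check(self, request):
        # Default deny: anything not explicitly granted is refused.
        allowed = self._policy.get((request.subject, request.obj), frozenset())
        return request.right in allowed


# Illustrative policy: alice may read payroll.db and nothing else.
monitor = ReferenceMonitor({("alice", "payroll.db"): {"read"}})
```

Keeping the decision logic to one short method reflects the analyzability requirement: the less code the check contains, the easier it is to examine every path through it.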

The reference monitor is not

the only security mechanism of a trusted operating system. Other parts of the

security suite include audit, identification, and authentication processing, as

well as the setting of enforcement parameters, such as who the allowable

subjects are and which objects they are allowed to access. These other security

parts interact with the reference monitor, receiving data from the reference

monitor or providing it with the data it needs to operate.
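
The interaction among these parts might look like the following Python sketch. Everything in it is an assumption made for illustration: the tables `CREDENTIALS` and `ACCESS_RULES`, the in-memory `AUDIT_LOG`, and the function names are hypothetical, and the unsalted SHA-256 digest is a simplification that a real system would replace with a salted, deliberately slow password hash.

```python
import hashlib
import hmac

# Hypothetical enforcement parameters: the allowable subjects and their rights.
CREDENTIALS = {"alice": hashlib.sha256(b"correct horse").hexdigest()}
ACCESS_RULES = {("alice", "report.txt"): {"read"}}
AUDIT_LOG = []


def authenticate(name, secret):
    """Identification and authentication: is this subject who it claims to be?"""
    expected = CREDENTIALS.get(name)
    if expected is None:
        return False
    supplied = hashlib.sha256(secret.encode()).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, supplied)


def audit(subject, obj, right, granted):
    """Audit routine: records every decision it is handed."""
    AUDIT_LOG.append(
        {"subject": subject, "object": obj, "right": right, "granted": granted}
    )


def reference_monitor(subject, obj, right):
    """Consults the enforcement parameters and reports the decision to audit."""
    granted = right in ACCESS_RULES.get((subject, obj), set())
    audit(subject, obj, right, granted)
    return granted


def request_access(name, secret, obj, right):
    """Entry point: authenticate first, then ask the reference monitor."""
    if not authenticate(name, secret):
        audit(name, obj, right, False)
        return False
    return reference_monitor(name, obj, right)
```

In this sketch the reference monitor receives data from the enforcement parameters and provides data to the audit routine, which mirrors the two directions of interaction described above.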

The reference monitor concept

has been used for many trusted operating systems and also for smaller pieces of

trusted software. The validity of this concept is well supported both in

research and in practice.

Trusted Computing Base

The trusted computing base,

or TCB, is the name we give to everything in the trusted operating system

necessary to enforce the security policy. Alternatively, we say that the TCB

consists of the parts of the trusted operating system on which we depend for

correct enforcement of policy. We can think of the TCB as a coherent whole in

the following way. Suppose you divide a trusted operating system into the parts

that are in the TCB and those that are not, and you allow the most skillful

malicious programmers to write all the non-TCB parts. Since the TCB handles all

the security, there is nothing the malicious non-TCB parts can do to impair the

correct security policy enforcement of the TCB. This definition gives you a

sense that the TCB forms the fortress-like shell that protects whatever in the

system needs protection. But the analogy also clarifies the meaning of trusted

in trusted operating system: Our trust in the security of the whole system

depends on the TCB.

It is easy to see that it is

essential for the TCB to be both correct and complete. Thus, to understand how

to design a good TCB, we focus on the division between the TCB and non-TCB

elements of the operating system and spend our effort on ensuring the

correctness of the TCB.

TCB Functions

Just what constitutes the

TCB? We can answer this question by listing system elements on which security

enforcement could depend:

· hardware, including processors, memory, registers, and I/O devices

· some notion of processes, so that we can separate and protect security-critical processes

· primitive files, such as the security access control database and identification/authentication data

· protected memory, so that the reference monitor can be protected against tampering

· some interprocess communication, so that different parts of the TCB can pass data to and activate other parts. For example, the reference monitor can invoke and pass data securely to the audit routine.

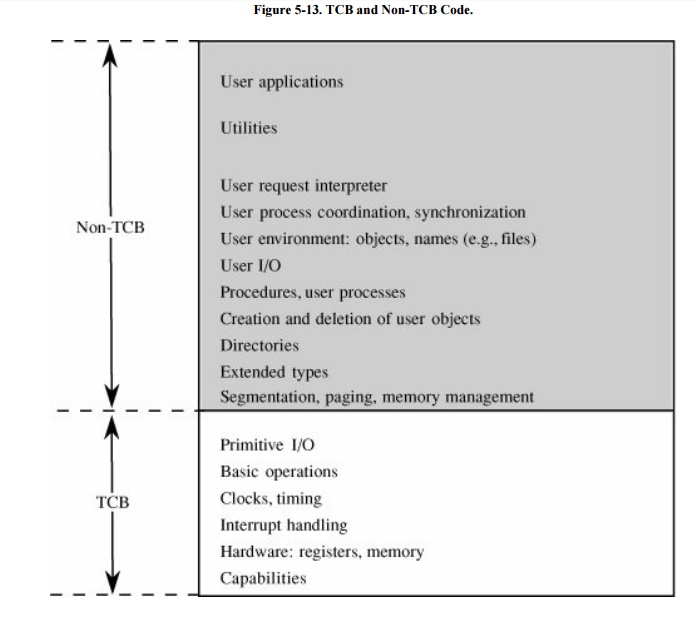

It may seem as if this list encompasses most of

the operating system, but in fact the TCB is only a small subset. For example,

although the TCB requires access to files of enforcement data, it does not need

an entire file structure of hierarchical directories, virtual devices, indexed

files, and multidevice files. Thus, it might contain a primitive file manager

to handle only the small, simple files needed for the TCB. The more complex

file manager to provide externally visible files could be outside the TCB. Figure 5-13 shows a typical division into TCB and

non-TCB sections.

The TCB, which must maintain

the secrecy and integrity of each domain, monitors four basic interactions.

· Process activation. In a multiprogramming environment, activation and deactivation of processes occur frequently. Changing from one process to another requires a complete change of registers, relocation maps, file access lists, process status information, and other pointers, much of which is security-sensitive information.

· Execution domain switching. Processes running in one domain often invoke processes in other domains to obtain more sensitive data or services.

· Memory protection. Because each domain includes code and data stored in memory, the TCB must monitor memory references to ensure secrecy and integrity for each domain.

· I/O operation. In some systems, software is involved with each character transferred in an I/O operation. This software connects a user program in the outermost domain to an I/O device in the innermost (hardware) domain. Thus, I/O operations can cross all domains.
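
As one illustration, the memory protection interaction can be sketched in Python as a bounds-and-rights check on each memory reference. The `Segment` type, the `DOMAIN_MEMORY` table, and the addresses in it are hypothetical values chosen for the example; real systems perform this check in hardware, using relocation maps or page tables that the TCB maintains.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """Hypothetical memory region belonging to one domain."""
    base: int
    length: int
    rights: frozenset


# Hypothetical per-domain memory maps (addresses are illustrative only).
DOMAIN_MEMORY = {
    "user": [Segment(0x1000, 0x1000, frozenset({"read", "write"}))],
    "os": [Segment(0x8000, 0x2000, frozenset({"read", "write", "execute"}))],
}


def memory_reference_allowed(domain, address, right):
    """Allow a reference only inside a segment of the caller's own domain."""
    for segment in DOMAIN_MEMORY.get(domain, []):
        if segment.base <= address < segment.base + segment.length:
            return right in segment.rights
    # Outside every segment of this domain: deny by default.
    return False
```

A reference from the user domain to an address inside the operating system's segment is refused because that address does not fall within any segment listed for the user domain.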

TCB Design

The division of the operating

system into TCB and non-TCB aspects is convenient for designers and developers

because it means that all security-relevant code is located in one (logical)

part. But the distinction is more than just logical. To ensure that the

security enforcement cannot be affected by non-TCB code, TCB code must run in

some protected state that distinguishes it. Thus, the structuring into TCB and

non-TCB must be done consciously. However, once this structuring has been done,

code outside the TCB can be changed at will, without affecting the TCB's

ability to enforce security. This ability to change helps developers because it

means that major sections of the operating system (utilities, device drivers,

user interface managers, and the like) can be revised or replaced any time; only

the TCB code must be controlled more carefully. Finally, for anyone evaluating

the security of a trusted operating system, a division into TCB and non-TCB

simplifies evaluation substantially because non-TCB code need not be

considered.

TCB Implementation

Security-related activities

are likely to be performed in different places. Security is potentially related

to every memory access, every I/O operation, every file or program access,

every initiation or termination of a user, and every interprocess

communication. In modular operating systems, these separate activities can be

handled in independent modules. Each of these separate modules, then, has both

security-related and other functions.

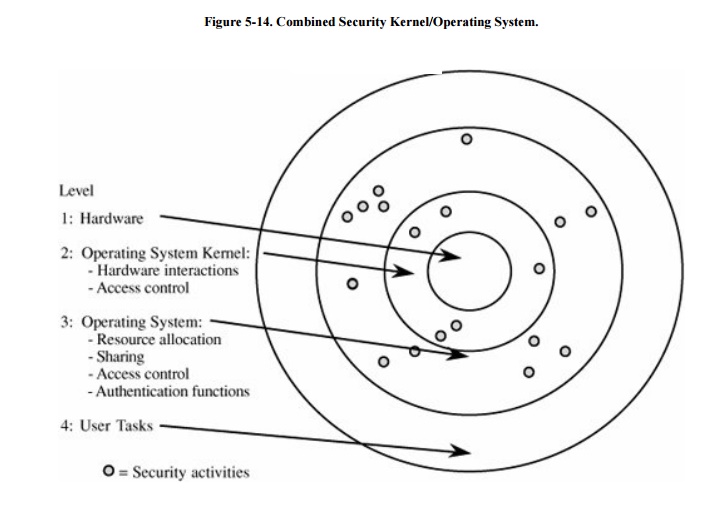

Collecting all security functions into the TCB

may destroy the modularity of an existing operating system. A unified TCB may

also be too large to be analyzed easily. Nevertheless, a designer may decide to

separate the security functions of an existing operating system, creating a

security kernel. This form of kernel is depicted in Figure

5-14.

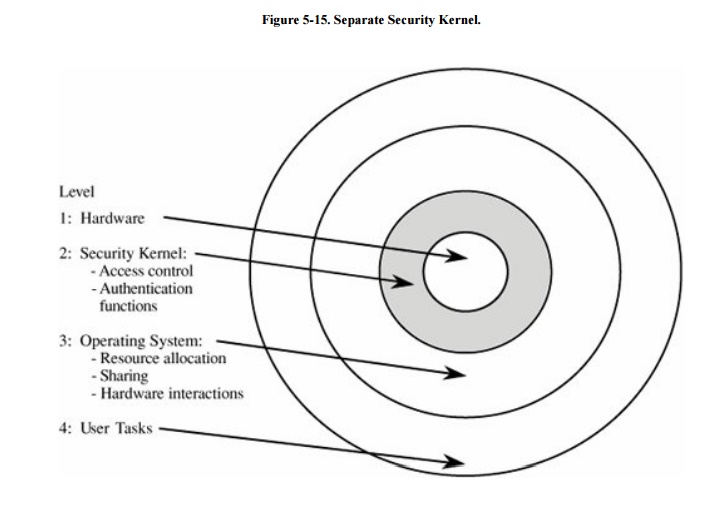

A more sensible approach is

to design the security kernel first and then design the operating system around

it. This technique was used by Honeywell in the design of a prototype for its

secure operating system, Scomp. That system contained only twenty modules to

perform the primitive security functions, and it consisted of fewer than 1,000

lines of higher-level-language source code. Once the actual security kernel of

Scomp was built, its functions grew to contain approximately 10,000 lines of

code.

In a security-based design, the security kernel forms an interface

layer, just atop system hardware. The security kernel monitors all operating

system hardware accesses and performs all protection functions. The security

kernel, which relies on support from hardware, allows the operating system

itself to handle most functions not related to security. In this way, the

security kernel can be small and efficient. As a byproduct of this

partitioning, computing systems have at least three execution domains: security

kernel, operating system, and user. See Figure 5-15.
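
The three execution domains can be modeled, under some simplifying assumptions, as ordered privilege levels. In the Python sketch below, the numbering (lower means more privileged), the `REQUIRED_DOMAIN` table, and the operation names are all hypothetical; the text does not prescribe how a particular system encodes its domains.

```python
from enum import IntEnum


class Domain(IntEnum):
    """Execution domains, numbered so that lower means more privileged."""
    SECURITY_KERNEL = 0
    OPERATING_SYSTEM = 1
    USER = 2


# Hypothetical table: the least privileged domain that may perform each
# operation directly.
REQUIRED_DOMAIN = {
    "set_access_rule": Domain.SECURITY_KERNEL,
    "schedule_process": Domain.OPERATING_SYSTEM,
    "compute": Domain.USER,
}


def may_perform(caller, operation):
    """True if the caller's domain is at least as privileged as required."""
    required = REQUIRED_DOMAIN.get(operation)
    if required is None:
        return False  # unknown operations are denied by default
    return caller <= required
```

Under this model, only code running in the security kernel domain can change protection data, while the operating system handles functions such as scheduling that are not related to security.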
