Chapter: Psychology: Personality

Key Social-Cognitive Concepts

Social-cognitive theorists differ in focus and emphasis, but across theorists, three concepts play a crucial role. These are control, attributional style (which refers to how we typically explain the things that happen in our lives), and self-control. We take up each of these in turn.

CONTROL

There is a great deal of evidence that people desire control over the circumstances of their lives and benefit from feeling that they have such control. A widely cited illustration involves elderly people in a nursing home. Patients on one floor of the nursing home were given small houseplants to care for, and they were also asked to choose the times at which they wanted to participate in some of the nursing-home activities (e.g., visiting friends, watching television, planning social events). Patients on another floor were also given plants, but with the understanding that the staff would tend them. They participated in the same activities as the first group of patients, but at times chosen by the staff. The results were clear-cut. According to both the nurses’ and the patients’ own reports, the patients who tended their own houseplants and scheduled their own activities were more active and felt better than the patients who lacked this control, a difference that was still apparent a year later (Langer & Rodin, 1976; Rodin & Langer, 1977; Figure 15.31).

Having control means being able to make choices, so if having control is a good thing, then having choices would seem to be a good thing too, and the more the better. This line of reasoning is certainly consistent with the way various goods and services are marketed, with both retail stores and large corporations offering us dozens of options whenever we order a latte, buy a new cell phone, select an insurance plan, or go on a vacation. However, in a series of studies, Sheena Iyengar and Mark Lepper (2000) found that there is such a thing as too much choice. One of these studies was conducted in a gourmet food store, where the researchers set up displays of fancy jams that customers could taste. Some displays offered 6 kinds of jam to taste; others offered 24. Although people flocked to the larger display, it turned out that people who had seen the smaller display were actually 10 times as likely to purchase a jam they had tasted as people who had seen the larger display. Reviewing this and related studies, Barry Schwartz (2004) concluded that although having some choice (and hence control) is extremely important, having too much choice leads to greater stress and anxiety rather than greater pleasure. These findings indicate that there can be too much of an apparently good thing. They also suggest that, under some circumstances, other considerations (such as managing the anxiety occasioned by having too many choices) may lead us to prefer less rather than more control (see also Shah & Wolford, 2007).

ATTRIBUTIONAL STYLE

Control beliefs are usually forward-looking: Which jam will I buy? When will I visit my friends? A different category of beliefs, in contrast, is oriented toward the past and, more specifically, concerns people’s explanations for the events they have experienced.

In general, people tend to offer dispositional attributions for their own successes (“I did well on the test because I’m smart”) but situational attributions for their failures (“My company is losing money because of the downturn in the economy”; “I lost the match because the sun was in my eyes”). This pattern is obviously self-serving, and it has been documented in many Western cultures (Bradley, 1978). In most studies, participants perform various tasks (ranging from alleged measures of motor skills to tests of social sensitivity) and then receive fake feedback on whether they have achieved some criterion of success (see, for example, Luginbuhl, Crowe, & Kahan, 1975; D. T. Miller, 1976; Sicoly & Ross, 1977; M. L. Snyder, Stephan, & Rosenfield, 1976; Stevens & Jones, 1976). The overall pattern of results is consistent: by and large, participants attribute their successes to internal factors (they are good at such tasks, and they worked hard) and their failures to external factors (the task was too difficult, and they were unlucky).

The same pattern is evident outside the laboratory. One study analyzed the comments of football and baseball players following important games, as published in newspapers. Of the statements made by the winners, 80% were internal attributions: “Our team was great,” “Our star player did it all,” and so on. In contrast, only 53% of the losers’ statements were internal attributions. The losers often explained the outcomes by referring to external, situational factors: “I think we hit the ball all right. But I think we’re unlucky.”

These attributions are offered, of course, after an event: after the team has won or lost, or after the business has succeeded or failed. But a related pattern arises before an event, allowing people to protect themselves against failure and disappointment. One such strategy is known as self-handicapping, in which one arranges an obstacle to one’s own performance. This way, if failure occurs, it will be attributed to the obstacle and not to one’s own limitations (Higgins, Snyder, & Berglas, 1990; Jones & Berglas, 1978). Thus, if Julie is afraid of failing next week’s biology exam, she might spend more time than usual watching television. Then, if she fails the exam, the obvious interpretation will be that she did not study hard enough, rather than that she is stupid.

Not surprisingly, all these forms of self-protection are less likely in collectivistic cultures, that is, among people who are not motivated to view themselves as different from and better than others. But the cultural pattern has some subtlety. A recent analysis of the self-serving bias found that Japanese participants and Pacific Islanders showed no self-serving bias, Indians displayed a moderate bias, and Chinese and Koreans showed large self-serving biases. It is not clear why these differences arose, and explaining them is a fruitful area for future research. In the meantime, though, this analysis reminds us of the important point that there is a great deal of variation within collectivistic, interdependent cultures.

Even within a given culture, there are notable differences in attributional style, the way a person typically explains the things that happen in his or her life (Figure 15.32). This style can be measured by a specially constructed attributional-style questionnaire (ASQ). In the ASQ, a participant is asked to imagine himself in a number of situations (e.g., failing a test) and to indicate what would have caused those events if they had happened to him (Dykema, Bergbower, Doctora, & Peterson, 1996; C. Peterson & Park, 1998; C. Peterson et al., 1982). His responses on the ASQ reveal how he explains his failure. He may think he did not study enough (an internal cause) or that the teacher misled him about what to study (an external cause). He may think he is generally stupid (a global explanation) or is stupid on just that test material (a specific explanation). Finally, he may believe he is always bound to fail (a stable explanation) or that with a little extra studying he can recover nicely (an unstable explanation).

Differences in attributional style matter (Peterson & Park, 2007). More specifically, greater use of external, specific, and unstable attributions for failure predicts better outcomes in domains ranging from insurance sales (Seligman & Schulman, 1986) to competitive sports (Seligman, Nolen-Hoeksema, Thornton, & Thornton, 1990). Importantly, variation in attributional style has also been shown to predict the onset of some forms of psychopathology, such as whether a person is likely to suffer from depression. Specifically, being prone to depression is correlated with a particular attributional style: a tendency to attribute unfortunate events to causes that are internal, global, and stable. Thus, a person who is prone to depression is likely to attribute such events to something within herself that applies to many other situations and will continue indefinitely (G. M. Buchanan & Seligman, 1995; C. Peterson & Seligman, 1984; C. Peterson & Vaidya, 2001; Seligman & Gillham, 2000).

SELF-CONTROL

So far we have emphasized each person’s control over his life circumstances, but just as important for social-cognitive theorists is the degree of control each person has over himself and his own actions. People surely differ in this regard, and these differences in self-control are visible in various ways (Figure 15.33). People differ in their ability to do what they know they should, whether the issue is being polite to an obnoxious supervisor at work or staying focused despite the distraction of a talkative roommate. They also differ in whether they can avoid doing what they want to do but should not, for example, by declining to join friends for a movie the night before an exam. People also differ in their capacity to do things they dislike in order to get what they want eventually, for example, studying extra hard now in order to do well later in job or graduate school applications.

Examples of self-control (and lack thereof) abound in everyday life. Self-control is manifested whenever we get out of bed in the morning because we know we should, even though we are still quite sleepy. It is also at stake when we pass up an extra helping of dessert while dieting (or don’t), or quit smoking (or don’t). In each case, the ability to control oneself is tied to what people often call willpower, and, according to popular wisdom, some people have more willpower than others. But do they really? That is, is “having a lot of willpower” a trait that is consistent over time and across situations?

Walter Mischel (1974, 1984) and his associates (Mischel, Shoda, & Rodriguez, 1992) studied this issue in children. The participants in his studies were between four and five years of age and were shown two snacks, one preferable to the other (e.g., two marshmallows or pretzels versus one). To obtain the snack they preferred, they had to wait about 15 minutes. If they did not want to wait, or grew tired of waiting, they immediately received the less desirable treat but had to forgo the more desirable one.

Whether the children could manage the wait was powerfully influenced by what the children did and thought about while they waited. If they looked at the marshmallow or, worse, thought about eating it, they usually succumbed and grabbed the lesser reward. But they were able to wait for the preferred snack if they found (or were shown) some way of distracting their attention from it, for example, by thinking of something fun, such as Mommy pushing them on a swing. They could also wait for the preferred snack if they thought about the snack in some way other than eating it, for example, by focusing on the pretzels’ shape and color rather than on their crunchiness and taste. By mentally transforming the desired object, the children managed to delay gratification and thereby obtain the larger reward (Mischel, 1984; Mischel & Baker, 1975; Mischel & Mischel, 1983; Mischel & Moore, 1980; Rodriguez, Mischel, & Shoda, 1989).

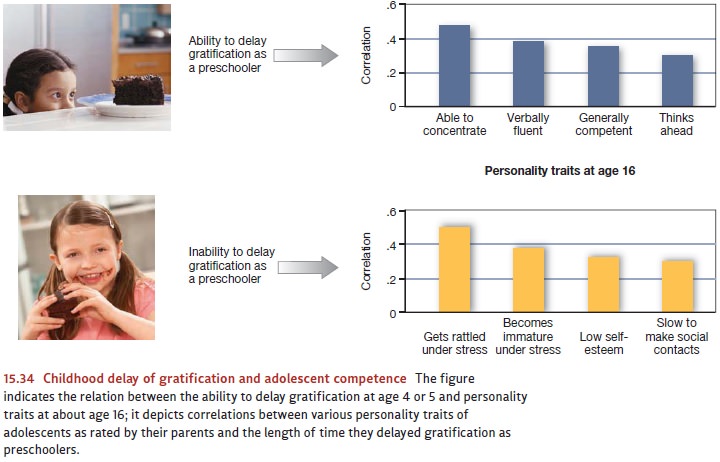

These various findings show that whether a child delays gratification depends in part on how she construes the situation. But it apparently also depends on some qualities of the child herself. The best evidence comes from follow-up studies that show remarkable correlations between children’s ability to delay gratification at age 4 (e.g., the ability to wait for the two pretzels) and some of their personality characteristics a decade or more later (Figure 15.34). Being able to tolerate lengthy delays of gratification in early childhood predicts both academic and social competence in adolescence (as rated by the child’s parents) and general coping ability. When compared to the children who could not delay gratification, those who could were judged (as young teenagers) to be more articulate, attentive, self-reliant, able to plan and think ahead, academically competent, and resilient under stress (Eigsti et al., 2006; Mischel, Shoda, & Peake, 1988; Shoda, Mischel, & Peake, 1990).

Why should a 4-year-old’s ability to wait 15 minutes to get two pretzels rather than one predict such important qualities as academic and social competence 10 years later? The answer probably lies in the fact that the cognitive processes that underlie this deceptively simple task in childhood are the same ones needed for success in adolescence and adulthood. After all, success in school often requires that short-term goals (e.g., partying during the week) be subordinated to long-term purposes (e.g., getting good grades). The same holds for social relations, because someone who gives in to every momentary impulse will be hard-pressed to keep friends, sustain commitments, or participate in team sports. In both academic and social domains, reaching any long-term goal inevitably means renouncing lesser goals that beckon in the interim.

If there is some general capacity for delaying gratification, useful for child and adult alike, where does it originate? As we saw, intelligence has a heritable component, and the executive control processes that support delaying gratification may also have a heritable component. It seems likely, however, that there is also a large learned component. Children may acquire certain cognitive skills (e.g., self-distraction, reevaluating rewards, and sustaining attention to distant goals) that they continue to apply and improve upon as they get older. However they originate, these attention-diverting strategies appear to emerge in the first two years of life and can be seen in the child’s attachment behavior with the mother. In one study, toddlers were observed during a brief separation from their mothers, using a variant of the Strange Situation task discussed earlier. Some of the toddlers showed immediate distress, while others distracted themselves with other activities. Those who engaged in self-distraction as toddlers were able to delay gratification longer when they reached the age of 5 (Sethi, Mischel, Aber, Shoda, & Rodriguez, 2000).

But what if one didn’t develop these valuable self-regulatory capacities and skills by early childhood? Is one simply out of luck? Proponents of a social-cognitive perspective would argue not. They point to studies in which self-regulatory ability was enhanced by simple changes in how individuals construed the situation in which they found themselves. In one study, for example, Magen and Gross (2007) asked participants to solve simple math problems. What made this task difficult was that a hilarious comedy routine was playing loudly on a television just beside the monitor on which the math problems were presented. Participants were instructed to do their best, and they understood that they could win a prize if they had a particularly good math score. Despite this motivation, participants peeked at the television show, even though doing so hurt their performance on the test. Then, halfway through the math test, one group of test-takers was simply reminded to do as well as they could on the second half. The second group got the same reminder, but in addition, they were told that they might find it helpful to think of the math test as a test of their willpower. Because these participants valued their willpower highly, this provided an extra incentive for them to stay focused on their task. This simple reconstrual of the math test as a test of their willpower led them to peek less often during the second half of the test than did the participants in the other instructional group.
