Definition, Characteristic | Statistical Inference - Estimation | 12th Business Maths and Statistics : Chapter 8 : Sampling Techniques and Statistical Inference

Estimation

It is possible to draw valid conclusions about population parameters from the sampling distribution.

Estimation is used to infer the value of an unknown population parameter, such as the population mean or standard deviation, from a suitable statistic computed from a sample drawn from that population.

Estimation:

Definition 8.4

The method of obtaining the most likely value of a population parameter using a statistic is called estimation.

Estimator:

Definition 8.5

Any sample statistic that is used to estimate an unknown population parameter is called an estimator; i.e., an estimator is a sample statistic used to estimate a population parameter.

Estimate:

Definition 8.6

When we observe a specific numerical value of our estimator, we call that value an estimate. In other words, an estimate is a specific observed value of a statistic.
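To make the distinction concrete, here is a minimal Python sketch (not taken from the text): the estimators are functions of the sample, here the sample mean and the sample standard deviation (the latter assumed to use the divisor n - 1, as `statistics.stdev` does), and the estimates are the numbers they return for one observed sample. The function names and the sample values are hypothetical.

```python
import statistics

def sample_mean(sample):
    """Estimator of the population mean: the sample mean x-bar."""
    return sum(sample) / len(sample)

def sample_sd(sample):
    """Estimator of the population standard deviation (divisor n - 1)."""
    return statistics.stdev(sample)

# Hypothetical observed sample; the values are illustrative only.
observed = [12, 15, 11, 14, 13]

# Applying each estimator (a rule) to this sample yields an estimate (a number).
mean_estimate = sample_mean(observed)
sd_estimate = sample_sd(observed)
print(f"estimate of mean = {mean_estimate}, estimate of SD = {sd_estimate:.4f}")
```

The same two functions applied to a different sample would give different estimates, while the estimators themselves stay the same.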

Characteristics of a good estimator

A good estimator must possess the following characteristics:

(i) Unbiasedness

(ii) Consistency

(iii) Efficiency

(iv) Sufficiency.

i. Unbiasedness: An

estimator Tn =T(x1 , x2 ,...., xn

) is said to be an unbiased estimator of γ (θ ) if E (Tn ) = γ (θ) , for all θ ε θ (parameter space),

(i.e)An estimator is said to be unbiased if its expected value is equal to the

population parameter. Example: E (![]() ) = μ

) = μ

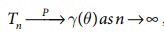
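The definition can be checked exactly on a small example. The sketch below assumes a hypothetical population of four values and samples of size $n = 3$ drawn with replacement, so all $4^3 = 64$ ordered samples are equally likely and $E(T)$ is simply the average of $T$ over them. It confirms $E(\bar{x}) = \mu$ and, as a contrast, shows that the sample variance with divisor $n$ is biased (its expectation is $(n-1)\sigma^2/n$), while the divisor $n - 1$ gives an unbiased estimator of $\sigma^2$. Exact fractions are used so that no rounding hides the result.

```python
from fractions import Fraction
from itertools import product

# Hypothetical small population (illustrative values only).
population = [Fraction(v) for v in (2, 4, 6, 9)]
N = len(population)
mu = sum(population) / N                                  # population mean
sigma2 = sum((v - mu) ** 2 for v in population) / N       # population variance

def sample_mean(xs):
    return sum(xs) / len(xs)

def var_divisor_n(xs):
    m = sample_mean(xs)
    return sum((x - m) ** 2 for x in xs) / len(xs)

def var_divisor_n_minus_1(xs):
    m = sample_mean(xs)
    return sum((x - m) ** 2 for x in xs) / (len(xs) - 1)

n = 3
# Every ordered sample of size n drawn with replacement is equally likely,
# so E(T) is the plain average of T over all N**n samples.
samples = list(product(population, repeat=n))

def expectation(estimator):
    return sum(estimator(s) for s in samples) / len(samples)

print("mu =", mu, "  E(x_bar) =", expectation(sample_mean))
print("sigma^2 =", sigma2,
      "  E(divisor n) =", expectation(var_divisor_n),
      "  E(divisor n-1) =", expectation(var_divisor_n_minus_1))
```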

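ii. Consistency: An estimator $T_n = T(x_1, x_2, \ldots, x_n)$ is said to be a consistent estimator of $\gamma(\theta)$ if $T_n$ converges to $\gamma(\theta)$ in probability, i.e., $\lim_{n \to \infty} P\left(|T_n - \gamma(\theta)| < \varepsilon\right) = 1$ for every $\varepsilon > 0$ and for all $\theta \in \Theta$.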
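Consistency can be illustrated exactly for one assumed case: a Bernoulli population with $p = 1/2$, where the sample mean is the sample proportion $X/n$ and $X$ follows a binomial distribution. The hypothetical helper `prob_outside` computes $P(|X/n - p| > \varepsilon)$ with exact fractions for $\varepsilon = 0.05$; as $n$ grows this probability should shrink towards 0, which is what convergence in probability requires.

```python
from fractions import Fraction
from math import comb

def prob_outside(n, p, eps):
    """P(|X/n - p| > eps) for X ~ Binomial(n, p), computed exactly.

    X/n is the sample proportion, i.e. the sample mean of n Bernoulli(p) values.
    """
    total = Fraction(0)
    for k in range(n + 1):
        if abs(Fraction(k, n) - p) > eps:
            total += comb(n, k) * p**k * (1 - p) ** (n - k)
    return total

p = Fraction(1, 2)      # assumed true parameter
eps = Fraction(1, 20)   # tolerance epsilon = 0.05

for n in (10, 100, 1000):
    print(n, float(prob_outside(n, p, eps)))
```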

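iii. Efficiency: If $T_1$ is the most efficient estimator of a parameter, with variance $V_1$, and $T_2$ is any other estimator of the same parameter, with variance $V_2$, then the efficiency $E$ of $T_2$ is defined as $E = V_1 / V_2$. Since the most efficient estimator has the smallest variance, $V_1 \le V_2$, so $E$ cannot exceed unity.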
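A rough Monte Carlo sketch of the efficiency formula, assuming a normal population, for which the sample mean is the most efficient estimator of $\mu$ (playing the role of $T_1$) and the sample median serves as $T_2$. The sample size, number of repetitions and seed are arbitrary choices, and the variances are simulated rather than exact, so the computed $E$ is only approximate; for large $n$ it should be near the known limiting value $2/\pi \approx 0.64$.

```python
import random
import statistics

def efficiency(v1, v2):
    """E = V1 / V2, where V1 is the variance of the most efficient estimator."""
    return v1 / v2

def simulated_variances(n=51, reps=4000, mu=0.0, sigma=1.0, seed=1):
    """Monte Carlo variances of the sample mean and the sample median
    for samples of size n from an assumed Normal(mu, sigma) population."""
    rng = random.Random(seed)
    means, medians = [], []
    for _ in range(reps):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        means.append(statistics.fmean(sample))
        medians.append(statistics.median(sample))
    return statistics.pvariance(means), statistics.pvariance(medians)

v_mean, v_median = simulated_variances()
e = efficiency(v_mean, v_median)
print(f"V1 (mean) = {v_mean:.5f}, V2 (median) = {v_median:.5f}, E = {e:.3f}")
```

Because the median ignores the magnitudes of most observations, its variance is larger, so $E < 1$ as the definition requires.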

iv. Sufficiency: If $T = t(x_1, x_2, \ldots, x_n)$ is an estimator of a parameter $\theta$, based on a sample $x_1, x_2, \ldots, x_n$ of size $n$ from a population with density $f(x, \theta)$, such that the conditional distribution of $x_1, x_2, \ldots, x_n$ given $T$ is independent of $\theta$, then $T$ is a sufficient estimator for $\theta$.
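A standard example, given here as a sketch under stated assumptions: for independent Bernoulli($p$) observations, $T = x_1 + x_2 + \cdots + x_n$ is sufficient for $p$. The code below (helper names are hypothetical) enumerates every 0/1 sample of size $n = 4$ with $T = 2$ and computes its conditional probability given $T$ for several values of $p$. Each conditional probability equals $1/\binom{4}{2} = 1/6$ whatever $p$ is, which is exactly the condition in the definition.

```python
from fractions import Fraction
from itertools import product

def joint_prob(xs, p):
    """P(X1 = x1, ..., Xn = xn) for independent Bernoulli(p) observations."""
    prob = Fraction(1)
    for x in xs:
        prob *= p if x == 1 else 1 - p
    return prob

def conditional_given_T(n, t, p):
    """Conditional distribution of (X1, ..., Xn) given T = sum(Xi) = t."""
    samples = [xs for xs in product((0, 1), repeat=n) if sum(xs) == t]
    p_t = sum(joint_prob(xs, p) for xs in samples)   # P(T = t)
    return {xs: joint_prob(xs, p) / p_t for xs in samples}

n, t = 4, 2
for p in (Fraction(1, 5), Fraction(1, 2), Fraction(9, 10)):
    # The set of distinct conditional probabilities does not depend on p.
    print(p, sorted(set(conditional_given_T(n, t, p).values())))
```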
