Chapter: Computer Networks : Application Layer

Multimedia

Recent advances in technology have changed our use of audio and video. In the past, we listened to an audio broadcast through a radio and watched a video program broadcast through a TV. We used the telephone network to interactively communicate with another party. But times have changed. People want to use the Internet not only for text and image communications, but also for audio and video services.

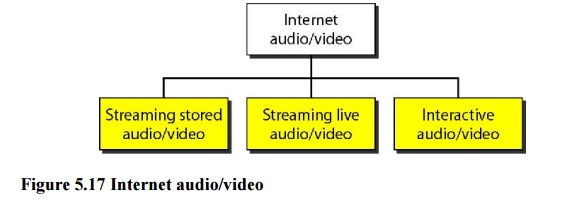

We can divide audio and video services into three broad categories:

1. Streaming stored audio/video: the files are compressed and stored on a server, and a client downloads the files through the Internet.
2. Streaming live audio/video: a user listens to and watches audio and video broadcast through the Internet.
3. Interactive audio/video: people use the Internet to interactively communicate with one another.

1. Digitizing Audio and Video

Before audio or video signals can be sent on the Internet, they need to be digitized.

Digitizing Audio

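When sound is fed into a microphone, an electronic analog signal is generated that represents the sound amplitude as a function of time. This signal is called an analog audio signal. An analog signal, such as audio, can be digitized to produce a digital signal. According to the Nyquist theorem, if the highest frequency of the signal is f, we need to sample the signal 2f times per second. For example, voice is sampled at 8,000 samples per second with 8 bits per sample, which gives a 64-kbps digital signal; music is sampled at 44,100 samples per second with 16 bits per sample, which gives 705.6 kbps for monaural and 1.411 Mbps for stereo. There are other methods for digitizing an audio signal, but the principle is the same.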
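The arithmetic behind digitizing audio can be checked with a short sketch. The function names below are hypothetical; the sketch assumes plain PCM (samples per second × bits per sample × channels), telephone voice band-limited to about 4 kHz and sampled at 8,000 samples/s with 8 bits, and CD-quality music at 44,100 samples/s with 16 bits. It is an illustration of the formulas, not a description of any particular codec.

```python
def nyquist_sampling_rate(max_frequency_hz: float) -> float:
    """Minimum samples per second for a signal whose highest frequency is f (2f)."""
    return 2 * max_frequency_hz


def pcm_bit_rate(samples_per_second: int, bits_per_sample: int, channels: int = 1) -> int:
    """Bit rate (bits per second) of an uncompressed PCM audio stream."""
    return samples_per_second * bits_per_sample * channels


if __name__ == "__main__":
    # Assumed: voice limited to 4 kHz, so Nyquist gives 8,000 samples/s.
    print(nyquist_sampling_rate(4_000))
    # Telephone-quality voice: 8,000 samples/s at 8 bits each.
    print("voice:       ", pcm_bit_rate(8_000, 8), "bps")
    # CD-quality music: 44,100 samples/s at 16 bits, mono and stereo.
    print("music mono:  ", pcm_bit_rate(44_100, 16), "bps")
    print("music stereo:", pcm_bit_rate(44_100, 16, channels=2), "bps")
```

The stereo figure, 1,411,200 bps, is the 1.411-Mbps rate that the compression discussion relies on.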

Digitizing Video

A video consists of a sequence of frames. If the frames are displayed on the screen fast enough, we get an impression of motion, because our eyes cannot distinguish the rapidly flashing frames as individual ones. There is no standard number of frames per second; 25 frames per second is common (the PAL television rate), while North American television uses about 30. However, to avoid a condition known as flickering, a frame needs to be refreshed. The TV industry repaints each frame twice. At 25 frames per second, this means 50 frames need to be sent, or, if there is memory at the sender site, 25 frames with each frame repainted from the memory.
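To see why raw video cannot travel over the Internet uncompressed, the sketch below multiplies out the bits per second for a given frame size, color depth, and frame rate. The helper name and the example resolution (1024 × 768 pixels at 24 bits per pixel) are assumptions chosen for illustration; the 25 frames per second, each repainted twice, follow the text.

```python
def raw_video_bit_rate(width: int, height: int, bits_per_pixel: int,
                       frames_per_second: int, repaints: int = 1) -> int:
    """Bits per second needed to send uncompressed video.

    `repaints` is how many times each frame is transmitted: 2 when every
    frame is repainted from the sender instead of from receiver memory.
    """
    bits_per_frame = width * height * bits_per_pixel
    return bits_per_frame * frames_per_second * repaints


if __name__ == "__main__":
    # Assumed example: 1024 x 768 color frames, 24 bits per pixel, 25 fps, sent twice.
    rate = raw_video_bit_rate(1024, 768, 24, 25, repaints=2)
    print(f"{rate} bps = {rate / 1e6:.0f} Mbps")  # 943718400 bps = 944 Mbps
```

Even this modest resolution needs roughly 944 Mbps, which is why the next section turns to compression.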

2. Audio and Video Compression

Sending audio or video over the Internet requires compression.

Audio Compression

Audio compression can be used for speech or music. For speech, we need to compress a 64-kbps digitized signal; for music, we need to compress a 1.411-Mbps signal. Two categories of techniques are used for audio compression: predictive encoding and perceptual encoding.

a. Predictive Encoding

In predictive encoding, the differences between the samples are encoded instead of encoding all the sampled values. Because consecutive speech samples are usually close in value, the differences are small numbers that need fewer bits. This type of compression is normally used for speech. Several standards have been defined, such as GSM (13 kbps), G.729 (8 kbps), and G.723.1 (6.3 or 5.3 kbps).
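A minimal sketch of the idea, assuming the simplest possible predictor (each sample is predicted to equal the previous one) and no quantization, so the scheme is lossless. The function names and sample values are hypothetical; standardized speech codecs such as GSM or G.729 use far more elaborate predictors and lossy quantization.

```python
from typing import List


def delta_encode(samples: List[int]) -> List[int]:
    """Replace each sample by its difference from the previous sample.

    The first sample is predicted as 0, so it is stored unchanged.
    """
    encoded = []
    previous = 0
    for sample in samples:
        encoded.append(sample - previous)  # prediction error
        previous = sample
    return encoded


def delta_decode(differences: List[int]) -> List[int]:
    """Rebuild the samples by accumulating the differences."""
    samples = []
    previous = 0
    for diff in differences:
        previous += diff
        samples.append(previous)
    return samples


if __name__ == "__main__":
    speech = [100, 102, 105, 107, 106, 104, 101]  # made-up sample values
    deltas = delta_encode(speech)
    print(deltas)  # [100, 2, 3, 2, -1, -2, -3]
    assert delta_decode(deltas) == speech
```

After the first value, every difference fits in a few bits, while the original samples need their full width.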

b. Perceptual Encoding: MP3

The most common compression technique used to create CD-quality audio is based on perceptual encoding. Perceptual encoding exploits the limits of human hearing: sounds that the ear cannot perceive, such as a quiet tone masked by a louder tone at a nearby frequency, are not encoded. As we mentioned before, this type of audio needs at least 1.411 Mbps, which cannot be sent over the Internet without compression. MP3 (MPEG audio layer 3), a part of the MPEG standard (discussed in the video compression section), uses this technique.
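The sketch below only quantifies how much a perceptual encoder has to shrink CD-quality stereo; it does not implement perceptual encoding itself. The MP3 bit rates in the loop (96, 128, and 192 kbps) are assumed example values, and the helper name is hypothetical.

```python
CD_STEREO_BPS = 44_100 * 16 * 2  # 1,411,200 bps of uncompressed CD-quality stereo


def compression_ratio(original_bps: int, compressed_bps: int) -> float:
    """How many times smaller the compressed stream is than the original."""
    return original_bps / compressed_bps


if __name__ == "__main__":
    # Assumed example MP3 bit rates; encoders support many others.
    for mp3_bps in (96_000, 128_000, 192_000):
        ratio = compression_ratio(CD_STEREO_BPS, mp3_bps)
        print(f"{mp3_bps // 1000} kbps MP3: about {ratio:.1f}:1")
```

At 128 kbps, for instance, the encoder must discard roughly ten out of every eleven bits, which is only possible because it drops information the ear would not notice.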

Video Compression

As we mentioned before, video is composed of multiple frames, and each frame is one image. We can compress video by first compressing images. Two standards are prevalent in the market: Joint Photographic Experts Group (JPEG) is used to compress images, and Moving Picture Experts Group (MPEG) is used to compress video.

a. Image Compression: JPEG

If the picture is not in color (gray scale), each pixel can be represented by an 8-bit integer (256 levels). If the picture is in color, each pixel can be represented by 24 bits (3 x 8 bits), with each 8 bits representing red, green, or blue (RGB). To simplify the discussion, we concentrate on a gray scale picture.
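For a gray scale picture, baseline JPEG divides the image into 8 x 8 blocks and transforms each block with the discrete cosine transform (DCT) before quantization and entropy coding. The sketch below computes only the DCT step for a single block in plain Python, using the normalization from the JPEG standard; the names are illustrative, and the level shift, quantization, and coding steps are omitted. A uniform block shows why the transform helps: all of its energy lands in the single (0, 0) coefficient, while the other 63 coefficients are (numerically) zero and compress very well.

```python
import math
from typing import List

Block = List[List[float]]
N = 8  # JPEG works on 8 x 8 blocks


def dct_8x8(block: Block) -> Block:
    """2-D DCT-II of an 8 x 8 block with JPEG normalization.

    Baseline JPEG first level-shifts 8-bit samples by subtracting 128;
    that step is left to the caller here.
    """
    def c(k: int) -> float:
        return 1 / math.sqrt(2) if k == 0 else 1.0

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            total = 0.0
            for x in range(N):
                for y in range(N):
                    total += (block[x][y]
                              * math.cos((2 * x + 1) * u * math.pi / 16)
                              * math.cos((2 * y + 1) * v * math.pi / 16))
            out[u][v] = 0.25 * c(u) * c(v) * total
    return out


if __name__ == "__main__":
    flat = [[10.0] * N for _ in range(N)]  # a uniform gray block
    coeffs = dct_8x8(flat)
    print(round(coeffs[0][0], 6))  # 80.0: all energy in the DC coefficient
    ac = [abs(coeffs[u][v]) for u in range(N) for v in range(N) if (u, v) != (0, 0)]
    print(max(ac) < 1e-9)          # True: every other coefficient is ~0
```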

b. Video Compression: MPEG

The Moving Picture Experts Group (MPEG) method is used to compress video. In principle, a motion picture is a rapid flow of a set of frames, where each frame is an image. In other words, a frame is a spatial combination of pixels, and a video is a temporal combination of frames that are sent one after another. Compressing video, then, means spatially compressing each frame and temporally compressing a set of frames.
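Temporal compression relies on consecutive frames usually being nearly identical. The toy sketch below, with hypothetical function names and frames modeled as flat lists of gray scale pixel values of equal length, sends the first frame whole and then only the pixels that changed since the previous frame. It illustrates the principle only: real MPEG encoders mix independently coded I-frames with predicted P- and B-frames and use motion compensation, none of which is modeled here.

```python
from typing import List, Tuple

Frame = List[int]          # gray scale pixels, row by row
Change = Tuple[int, int]   # (pixel index, new value)


def encode_frames(frames: List[Frame]) -> Tuple[Frame, List[List[Change]]]:
    """Keep the first frame whole; for later frames keep only changed pixels."""
    first = list(frames[0])
    updates = []
    previous = first
    for frame in frames[1:]:
        changes = [(i, new) for i, (old, new) in enumerate(zip(previous, frame))
                   if old != new]
        updates.append(changes)
        previous = frame
    return first, updates


def decode_frames(first: Frame, updates: List[List[Change]]) -> List[Frame]:
    """Rebuild every frame by applying each list of changes to the previous frame."""
    frames = [list(first)]
    for changes in updates:
        frame = list(frames[-1])
        for index, value in changes:
            frame[index] = value
        frames.append(frame)
    return frames


if __name__ == "__main__":
    video = [
        [0, 0, 0, 0, 0, 0, 0, 0],
        [0, 9, 0, 0, 0, 0, 0, 0],  # a bright pixel appears
        [0, 0, 9, 0, 0, 0, 0, 0],  # ...and moves one step right
    ]
    first, updates = encode_frames(video)
    print(updates)  # [[(1, 9)], [(1, 0), (2, 9)]]
    assert decode_frames(first, updates) == video
```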

3. Streaming Live Audio/Video

Streaming live audio/video is similar to the broadcasting of audio and video by radio and TV stations. Instead of broadcasting to the air, the stations broadcast through the Internet. There are several similarities between streaming stored audio/video and streaming live audio/video: both are sensitive to delay, and neither can accept retransmission. However, there is a difference. In the first application, the communication is unicast and on-demand; in the second, it is multicast and live. Live streaming is therefore better suited to the multicast services of IP and to protocols such as UDP and RTP.
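RTP supplies the timing and ordering information that a delay-sensitive stream needs when lost packets are not retransmitted. As a sketch, the functions below (their names and the example values are hypothetical) pack and unpack the 12-byte fixed RTP header defined in RFC 3550 with Python's `struct` module: version 2, a marker bit, a 7-bit payload type, a 16-bit sequence number that lets a receiver detect loss, a 32-bit timestamp for playback timing, and a 32-bit SSRC identifying the source. Padding, header extensions, and CSRC lists are left out, and payload type 0 (PCMU) is used only as an example.

```python
import struct

RTP_VERSION = 2


def rtp_header(payload_type: int, sequence: int, timestamp: int, ssrc: int,
               marker: bool = False) -> bytes:
    """Pack a 12-byte RTP fixed header (no padding, extension, or CSRCs)."""
    first_byte = RTP_VERSION << 6                      # V=2, P=0, X=0, CC=0
    second_byte = (int(marker) << 7) | (payload_type & 0x7F)
    return struct.pack(
        "!BBHII",                                      # network byte order
        first_byte,
        second_byte,
        sequence & 0xFFFF,                             # 16-bit counter wraps around
        timestamp & 0xFFFFFFFF,
        ssrc & 0xFFFFFFFF,
    )


def parse_rtp_header(data: bytes) -> dict:
    """Unpack the fields written by rtp_header()."""
    b0, b1, seq, ts, ssrc = struct.unpack("!BBHII", data[:12])
    return {
        "version": b0 >> 6,
        "marker": bool(b1 >> 7),
        "payload_type": b1 & 0x7F,
        "sequence": seq,
        "timestamp": ts,
        "ssrc": ssrc,
    }


if __name__ == "__main__":
    # Assumed: 20 ms of 8-kHz audio per packet, so the timestamp advances by 160.
    for n in range(3):
        header = rtp_header(payload_type=0, sequence=1000 + n,
                            timestamp=160 * n, ssrc=0x1234ABCD)
        print(header.hex(), parse_rtp_header(header))
```

In a real sender this header would be prepended to each media payload and sent in a UDP datagram, possibly to a multicast group.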

4. Real-Time Interactive Audio/Video

In real-time interactive audio/video, people communicate with one another in real time. Internet telephony, or voice over IP (VoIP), is an example of this type of application. Video conferencing is another example; it allows people to communicate visually and orally.
