Chapter: Compilers : Principles, Techniques, & Tools : Run-Time Environments

Introduction to Garbage Collection

Introduction to Garbage

Collection

1 Design Goals for Garbage

Collectors

2 Reachability

3 Reference Counting Garbage

Collectors

4 Exercises for Section 7.5

Data that cannot be referenced is generally known as garbage. Many high-level programming

languages remove the burden of manual memory management from the programmer by

offering automatic garbage collection, which deallo-cates unreachable data.

Garbage collection dates back to the initial implemen-tation of Lisp in 1958.

Other significant languages that offer garbage collection include Java, Perl,

ML, Modula-3, Prolog, and Smalltalk.

In this section, we introduce many of the concepts of garbage

collection. The notion of an object being "reachable" is perhaps

intuitive, but we need to be precise; the exact rules are discussed in Section

7.5.2. We also discuss, in Section 7.5.3, a simple, but imperfect, method of

automatic garbage collection: reference counting, which is based on the idea

that once a program has lost all references to an object, it simply cannot and

so will not reference the storage.

Section 7.6 covers trace-based collectors, which are algorithms that

discover all the objects that are still useful, and then turn all the other

chunks of the heap into free space.

1. Design Goals for Garbage

Collectors

Garbage collection is the reclamation of chunks of storage holding

objects that can no longer be accessed by a program. We need to assume that

objects have a type that can be determined by the garbage collector at run

time. From the type information, we can tell how large the object is and which

components of the object contain references (pointers) to other objects. We

also assume that references to objects are always to the address of the

beginning of the object, never pointers to places within the object. Thus, all

references to an object have the same value and can be identified easily.

A user program, which we shall refer to as the mutator, modifies the col-lection of objects in the heap. The

mutator creates objects by acquiring space from the memory manager, and the

mutator may introduce and drop references to existing objects. Objects become

garbage when the mutator program cannot "reach" them, in the sense

made precise in Section 7.5.2. The garbage collector finds these unreachable

objects and reclaims their space by handing them to the memory manager, which

keeps track of the free space.

A Basic Requirement: T y p e

Safety

Not all languages are good candidates for automatic garbage collection.

For a garbage collector to work, it must be able to tell whether any given data

element or component of a data element is, or could be used as, a pointer to a

chunk of allocated memory space. A language in which the type of any data

component can be determined is said to be type

safe. There are type-safe languages like ML, for which we can determine

types at compile time. There are other type-safe languages, like Java, whose

types cannot be determined at compile time, but can be determined at run time.

The latter are called dynamically typed

languages. If a language is neither statically nor dynamically type safe, then

it is said to be unsafe.

Unsafe languages, which unfortunately include some of the most

impor-tant languages such as C and C + + , are bad candidates for automatic

garbage collection. In unsafe languages, memory addresses can be manipulated

arbi-trarily: arbitrary arithmetic operations can be applied to pointers to

create new pointers, and arbitrary integers can be cast as pointers. Thus a

program theoretically could refer to any location in memory at any time.

Consequently, no memory location can be considered to be inaccessible, and no

storage can ever be reclaimed safely.

In practice, most C and C + + programs do not generate pointers

arbitrarily, and a theoretically unsound garbage collector that works well

empirically has been developed and used. We shall discuss conservative garbage

collection for C and C + + in Section 7.8.3.

Performance Metrics

Garbage collection is often so expensive that, although it was invented

decades ago and absolutely prevents memory leaks, it has yet to be adopted by

many mainstream programming languages. Many different approaches have been

pro-posed over the years, and there is not one clearly best garbage-collection

algo-rithm. Before exploring the options, let us first enumerate the

performance metrics that must be considered when designing a garbage collector.

• Overall Execution Time. Garbage collection can be very slow. It is impor-tant that it not

significantly increase the total run time of an application. Since the garbage

collector necessarily must touch a lot of data, its perfor-mance is determined

greatly by how it leverages the memory subsystem.

• Space Usage. It is important that garbage collection avoid fragmentation and make the best use of the available

memory.

Pause Time. Simple garbage collectors are

notorious for causing pro-grams — the mutators — to pause suddenly for an

extremely long time, as garbage collection kicks in without warning. Thus,

besides minimiz-ing the overall execution time, it is desirable that the

maximum pause time be minimized. As an important special case, real-time

applications require certain computations to be completed within a time limit.

We must either suppress garbage collection while performing real-time tasks, or

restrict maximum pause time. Thus, garbage collection is seldom used in

real-time applications.

Program Locality. We cannot evaluate the speed of a

garbage collector solely by its

running time. The garbage collector controls the placement of data and thus

influences the data locality of the mutator program. It can improve a mutator's

temporal locality by freeing up space and reusing it; it can improve the

mutator's spatial locality by relocating data used together in the same cache

or pages.

Some of these design goals conflict with one another, and tradeoffs must

be made carefully by considering how programs typically behave. Also objects of

different characteristics may favor different treatments, requiring a collector

to use different techniques for different kinds of objects.

For example, the number of objects allocated is dominated by small

objects, so allocation of small objects must not incur a large overhead. On the

other hand, consider garbage collectors that relocate reachable objects. Relocation

is expensive when dealing with large objects, but less so with small objects.

As another example, in general, the longer we wait to collect garbage in

a trace-based collector, the larger the fraction of objects that can be

collected. The reason is that objects often "die young," so if we

wait a while, many of the newly allocated objects will become unreachable. Such

a collector thus costs less on the average, per unreachable object collected.

On the other hand, infrequent collection increases a program's memory usage,

decreases its data locality, and increases the length of the pauses.

In contrast, a reference-counting collector, by introducing a constant

over-head to many of the mutator's operations, can slow down the overall

execution of a program significantly. On the other hand, reference counting

does not cre-ate long pauses, and it is memory efficient, because it finds

garbage as soon as it is produced (with the exception of certain cyclic

structures discussed in Section 7.5.3).

Language design can also affect the characteristics of memory usage.

Some languages encourage a programming style that generates a lot of garbage.

For example, programs in functional or almost functional programming languages

create more objects to avoid mutating existing objects. In Java, all objects,

other than base types like integers and references, are allocated on the heap

and not the stack, even if their lifetimes are confined to that of one function

invocation. This design frees the programmer from worrying about the lifetimes

of variables, at the expense of generating more garbage. Compiler optimizations

have been developed to analyze the lifetimes of variables and allocate them on

the stack whenever possible.

2. Reachability

We refer to all the data that can be accessed directly by a program,

without having to dereference any pointer, as the root set For example, in Java

the root set of a program consists of all the static field members and all the

variables on its stack. A program obviously can reach any member of its root

set at any time. Recursively, any object with a reference that is stored in the

field members or array elements of any reachable object is itself reachable.

Reachability becomes a bit more complex when the program has been

op-timized by the compiler. First, a compiler may keep reference variables in

registers. These references must also be considered part of the root set.

Sec-ond, even though in a type-safe language programmers do not get to

manipulate memory addresses directly, a compiler often does so for the sake of

speeding up the code. Thus, registers in compiled code may point to the middle

of an object or an array, or they may contain a value to which an offset will

be applied to compute a legal address. Here are some things an optimizing

compiler can do to enable the garbage collector to find the correct root set:

• The compiler can restrict the invocation of garbage collection to only

certain code points in the program, when no "hidden" references

exist.

• The compiler can write out information that the garbage collector can

use to recover all the references, such as specifying which registers contain

references, or how to compute the base address of an object that is given an

internal address.

The compiler can assure that

there is a reference to the base address of all reachable objects whenever the

garbage collector may be invoked.

The set of reachable objects changes as a program executes. It grows as

new objects get created and shrinks as objects become unreachable. It is important

to remember that once an object becomes unreachable, it cannot become

reach-able again. There are four basic operations that a mutator performs to

change the set of reachable objects:

• Object Allocations. These are performed by the memory manager, which returns a reference to each newly allocated chunk of memory. This

oper-ation adds members to the set of reachable objects.

• Parameter Passing and Return

Values. References to

objects are passed from the actual input parameter to the

corresponding formal parameter, and from the returned result back to the

callee. Objects pointed to by these

references remain reachable.

• Reference Assignments.

Assignments of the form u = v, where u

and v are references, have two effects. First, u is now a reference to the object referred to by v. As long as u is reachable, the object it refers to is surely reachable.

Second, the original reference in u

is lost. If this reference is the last to some reachable object, then that

object becomes unreachable. Any time an object becomes unreachable, all objects

that are reachable only through references contained in that object also become

unreachable.

• Procedure Returns. As a procedure exits, the frame holding its local variables is popped off the stack. If the frame holds the only

reachable reference to any object, that object becomes unreachable. Again, if

the now unreachable objects hold the only references to other objects, they too

become unreachable, and so on.

In summary, new objects are introduced through object allocations.

Param-eter passing and assignments can propagate reachability; assignments and

ends of procedures can terminate reachability. As an object becomes

unreachable, it can cause more objects to become unreachable.

There are two basic ways to find unreachable objects. Either we catch

the transitions as reachable objects turn unreachable, or we periodically

locate all the reachable objects and then infer that all the other objects are

unreachable. Reference counting, introduced

in Section 7.4.5, is a well-known approximation to the first approach. We

maintain a count of the references to an object, as the mutator performs

actions that may change the reachability set. When the count goes to zero, the

object becomes unreachable. We discuss this approach in more detail in Section

7.5.3.

The second approach computes reachability by tracing all the references

transitively. A trace-based garbage

collector starts by labeling ("marking") all objects in the root set

as "reachable," examines iteratively all the references in reachable

objects to find more reachable objects, and labels them as such. This approach

must trace all the references before it can determine any object to be

unreachable. But once the reachable set is computed, it can find many unreachable

objects all at once and locate a good deal of free storage at the same time.

Because all the references must be analyzed at the same time, we have an option

to relocate the reachable objects and thereby reduce fragmentation. There are

many different trace-based algorithms, and we discuss the options in Sections

7.6 and 7.7.1.

3. Reference Counting Garbage

Collectors

We now consider a simple, although imperfect, garbage collector, based

on reference counting, which identifies garbage as an object changes from being

reachable to unreachable; the object can be deleted when its count drops to

zero. With a reference-counting garbage collector, every object must have a

field for the reference count. Reference counts can be maintained as follows:

1. Object Allocation. The reference count of the new object is set to 1.

2. Parameter Passing. The reference count of each object passed into a procedure is incremented.

3. Reference Assignments. For

statement u = v, where u and v are refer-ences, the reference count

of the object referred to by v goes

up by one, and the count for the old object referred to by u goes down by one.

Procedure Returns. As a procedure exits, all the

references held by the local

variables of that procedure activation record must also be decre-mented. If

several local variables hold references to the same object, that object's count

must be decremented once for each such reference.

Transitive Loss of

Reachability. Whenever the reference count of an object becomes zero, we must also

decrement the count of each object pointed to by a reference within the object.

Reference counting has two main disadvantages: it cannot collect

unreach-able, cyclic data structures, and it is expensive. Cyclic data

structures are quite plausible; data structures often point back to their

parent nodes, or point to each other as cross references.

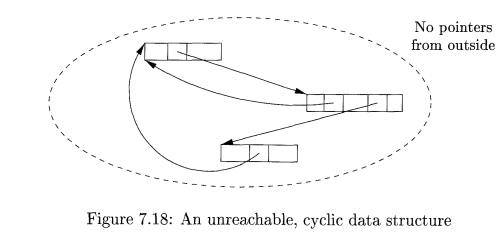

Example 7 . 1 1 : Figure 7.18 shows three objects with references among

them, but no references from anywhere else. If none of these objects is part of

the root set, then they are all garbage, but their reference counts are each

greater than 0. Such a situation is tantamount to a memory leak if we use

reference counting for garbage collection, since then this garbage and any

structures like it are never deallocated.

The overhead of reference counting is high because additional operations

are introduced with each reference assignment, and at procedure entries and

exits. This overhead is proportional to the amount of computation in the

program, and not just to the number of objects in the system. Of particular

concern are the updates made to references in the root set of a program. The

concept of deferred reference counting has been proposed as

a means to eliminate the overhead associated

with updating the reference counts due to local stack accesses. That is,

reference counts do not include references from the root set of the program. An

object is not considered to be garbage until the entire root set is scanned and

no references to the object are found.

The advantage of reference counting, on the other hand, is that garbage

collection is performed in an incremental

fashion. Even though the total overhead can be large, the operations are spread

throughout the mutator's computation.

Although removing one reference may render a large number of objects

un-reachable, the operation of recursively modifying reference counts can

easily be deferred and performed piecemeal across time. Thus, reference

counting is par-ticularly attractive algorithm when timing deadlines must be

met, as well as for interactive applications where long, sudden pauses are

unacceptable. Another advantage is that garbage is collected immediately,

keeping space usage low.

4. Exercises for Section 7.5

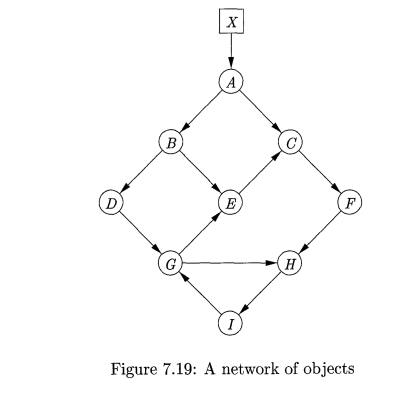

Exercise 7.5.1 : What happens to the reference counts of the

objects in Fig.

if:

The pointer from A to B

is deleted.

The pointer from X to A

is deleted.

The node C is deleted.

Exercise 7 . 5 . 2 : What

happens to reference counts when the pointer from A to D in Fig. 7.20 is

deleted?

Related Topics