Chapter: Operating Systems : Process Scheduling and Synchronization

Threads

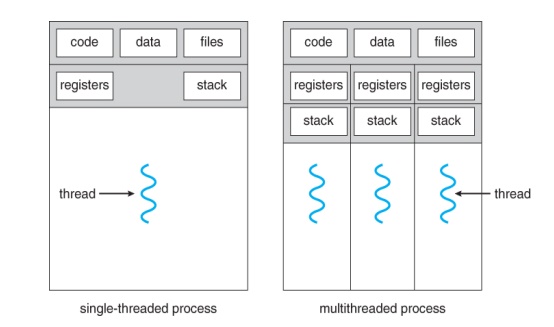

- A thread is the basic unit of CPU utilization.
- It is sometimes called a lightweight process.
- It consists of a thread ID, a program counter, a register set, and a stack.
- It shares its code section, data section, and other operating-system resources, such as open files and signals, with the other threads belonging to the same process.
- A traditional (heavyweight) process has a single thread of control.
- If a process has multiple threads of control, it can perform more than one task at a time.
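As a minimal sketch, assuming Python's standard `threading` module (in CPython each `threading.Thread` runs on its own OS thread), the program below starts three threads in one process. Each thread works with its own local variables, but all of them append to the same list, which lives in memory shared by the whole process. The lock serializes those appends. The names `worker` and `shared_results` are hypothetical.

```python
import threading

# Illustrative sketch: `worker` and `shared_results` are hypothetical names.
shared_results = []        # one list in the process's memory, visible to every thread
lock = threading.Lock()    # serializes updates to the shared list

def worker(n):
    # `local_total` belongs to this thread's own call of worker(), not to the others
    local_total = sum(range(n))
    with lock:
        shared_results.append((threading.get_ident(), local_total))

threads = [threading.Thread(target=worker, args=(n,)) for n in (10, 100, 1000)]
for t in threads:
    t.start()              # each thread begins running worker() concurrently
for t in threads:
    t.join()               # wait until every thread has finished

print(sorted(total for _, total in shared_results))  # [45, 4950, 499500]
```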

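1. Benefits of multithreaded programming

- Responsiveness: an interactive program can keep responding to the user while one of its threads is blocked or busy with a long operation.
- Resource sharing: threads share the memory and resources of the process they belong to.
- Economy: creating threads and switching between them is cheaper than creating and switching processes.
- Utilization of multiprocessor (MP) architectures: the threads of one process can run in parallel on different processors.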

2. User threads and kernel threads

2.1. User threads

- Supported above the kernel and implemented by a thread library at the user level.
- Thread creation, management, and scheduling are done in user space.
- Fast to create and manage, because no kernel intervention is required.
- When a user thread performs a blocking system call, it causes the entire process to block, even if other threads are available to run within the application.
- Examples: POSIX Pthreads, Mach C-threads, and Solaris 2 UI-threads. (Pthreads is a specification and may be provided either as a user-level or as a kernel-level library.)
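The idea that a thread library, not the kernel, creates and schedules user threads can be sketched in Python with generators standing in for user-level threads. This is an illustrative assumption: a real thread library saves a register set and a stack for each thread. The hypothetical `run` function is a round-robin scheduler that runs entirely in user space on a single kernel thread. When thread A makes a blocking call (`time.sleep` here), the scheduler cannot switch to B, so B's next step is delayed as well.

```python
import time
from collections import deque

# Illustrative sketch: generators stand in for user-level threads (hypothetical names).

def user_thread(name, steps, log, block_at=None):
    """A 'user-level thread': each `yield` hands control back to the scheduler."""
    for i in range(steps):
        if i == block_at:
            time.sleep(0.2)   # blocking call: stalls the one kernel thread running everything
        log.append((name, i, time.monotonic()))
        yield

def run(threads):
    """Round-robin scheduler that lives entirely in user space."""
    ready = deque(threads)
    while ready:
        t = ready.popleft()
        try:
            next(t)           # run t until its next yield
            ready.append(t)   # still alive: put it at the back of the queue
        except StopIteration:
            pass              # t has finished; drop it

log = []
run([user_thread("A", 3, log, block_at=1), user_thread("B", 3, log)])
print([(name, i) for name, i, _ in log])
# [('A', 0), ('B', 0), ('A', 1), ('B', 1), ('A', 2), ('B', 2)]
gap = log[3][2] - log[1][2]   # time between B's step 0 and B's step 1
print(f"B waited {gap:.2f} s")
```

B never blocks itself, yet the gap between its first and second steps is roughly the 0.2 seconds A spent in its blocking call.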

2.2. Kernel threads

- Supported directly by the OS.
- Thread creation, management, and scheduling are done in kernel space.
- Slower to create and manage than user threads, because these operations are carried out by the kernel.
- When a kernel thread performs a blocking system call, the kernel can schedule another thread in the application for execution.
- Examples: Windows NT, Windows 2000, Solaris 2, BeOS, and Tru64 UNIX support kernel threads.
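For contrast, CPython's `threading.Thread` is backed by a kernel thread, so a blocking call suspends only the calling thread. In this minimal sketch (the function names are hypothetical), `sleeper` blocks in `time.sleep` while `worker` keeps running and finishes first.

```python
import threading
import time

# Illustrative sketch: `sleeper` and `worker` are hypothetical names.
finished = []              # order in which the threads complete
lock = threading.Lock()

def sleeper():
    time.sleep(0.2)        # blocking call: the kernel suspends only this thread
    with lock:
        finished.append("sleeper")

def worker():
    _ = sum(range(10_000)) # short CPU work that proceeds while sleeper is blocked
    with lock:
        finished.append("worker")

threads = [threading.Thread(target=sleeper), threading.Thread(target=worker)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(finished)            # ['worker', 'sleeper']
```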


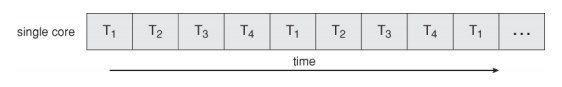

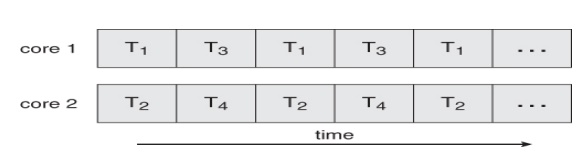

3. Multicore Programming

A recent trend in computer architecture is to produce chips with multiple cores, or CPUs, on a single chip.

A multithreaded application running on a traditional single-core chip has to interleave its threads. On a multicore chip, however, the threads can be spread across the available cores, allowing true parallel processing.

Figure: Concurrent execution on a single-core system.
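The contrast between interleaving on one core and parallel execution on several cores can be illustrated with a toy round-robin simulation. The hypothetical `schedule` function assumes every thread is always runnable and that, in each time slot, every core runs one thread; it returns, for each slot, the thread running on each core.

```python
# Toy simulation: `schedule` is a hypothetical helper, not an OS API.

def schedule(threads, cores, slots):
    """Round-robin assignment of always-runnable threads to cores.

    Returns one list per time slot, giving the thread run by each core in that slot.
    """
    cores = min(cores, len(threads))   # a thread cannot run on two cores at once
    timeline, nxt = [], 0
    for _ in range(slots):
        running = []
        for _ in range(cores):
            running.append(threads[nxt % len(threads)])
            nxt += 1
        timeline.append(running)
    return timeline

threads = ["T1", "T2", "T3", "T4"]
print(schedule(threads, cores=1, slots=4))
# [['T1'], ['T2'], ['T3'], ['T4']]  -> interleaved: each thread gets 1 slot in 4
print(schedule(threads, cores=2, slots=4))
# [['T1', 'T2'], ['T3', 'T4'], ['T1', 'T2'], ['T3', 'T4']]  -> two threads at once
```

With two cores, core 0 runs T1, T3, T1, T3 and core 1 runs T2, T4, T2, T4, so each thread makes twice as much progress in the same four slots.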

- For operating systems, multicore chips require new scheduling algorithms to make better use of the multiple cores available.
- As multithreading becomes more pervasive and more important (thousands instead of tens of threads), CPUs have been developed to support more simultaneous threads per core in hardware.

3.1 Programming Challenges

For application programmers, multicore chips present new challenges in five areas:

1. Identifying tasks - Examining applications to find activities that can be performed concurrently.
2. Balance - Finding tasks to run concurrently that provide equal value; that is, not wasting a thread on trivial tasks.
3. Data splitting - Dividing the data so that the threads do not interfere with one another.
4. Data dependency - If one task depends on the results of another, the tasks must be synchronized to ensure that the data is accessed in the proper order.
5. Testing and debugging - Inherently more difficult in parallel processing situations, because race conditions become much more complex and harder to identify.
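The data-dependency challenge can be sketched with a `threading.Event` from Python's standard library. The consumer thread is started first but waits on the event, so it cannot read the producer's result until the producer has written it and called `set()`. The names `producer`, `consumer`, and `result` are hypothetical.

```python
import threading

# Illustrative sketch: result, ready, producer, and consumer are hypothetical names.
result = {}                  # written by the producer, read by the consumer
ready = threading.Event()    # set once result["data"] is available

def producer():
    result["data"] = [x * x for x in range(5)]   # task A: compute the data
    ready.set()                                   # signal that the data is ready

def consumer(out):
    ready.wait()                                  # task B: block until task A is done
    out.append(sum(result["data"]))

out = []
threads = [threading.Thread(target=consumer, args=(out,)),   # started first on purpose
           threading.Thread(target=producer)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(out)   # [30]
```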

3.2 Types of Parallelism

In theory, there are two different ways to parallelize a workload:

1. Data parallelism divides the data among multiple cores (threads) and performs the same task on each subset of the data. For example, a large image can be divided into pieces, and the same digital image processing can be applied to each piece on a different core.
2. Task parallelism divides the different tasks to be performed among the different cores and performs them simultaneously.
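Both kinds of parallelism can be sketched with Python's `concurrent.futures.ThreadPoolExecutor`. The hypothetical `split` helper also touches on the balance and data-splitting challenges: it cuts the data into chunks whose sizes differ by at most one. One caveat: in CPython the global interpreter lock keeps pure-Python, CPU-bound threads from running on several cores at once, so this shows the structure of the decomposition rather than a speedup; `ProcessPoolExecutor` offers the same interface for true multicore execution.

```python
from concurrent.futures import ThreadPoolExecutor

def split(data, parts):
    """Hypothetical helper: split data into `parts` chunks whose sizes differ by at most one."""
    k, r = divmod(len(data), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + k + (1 if i < r else 0)
        chunks.append(data[start:end])
        start = end
    return chunks

data = list(range(1, 101))

with ThreadPoolExecutor(max_workers=4) as pool:
    # Data parallelism: the SAME operation (sum) on different subsets of the data.
    partial_sums = list(pool.map(sum, split(data, 4)))
    # Task parallelism: DIFFERENT operations on the data, submitted at the same time.
    futures = {name: pool.submit(fn, data)
               for name, fn in [("min", min), ("max", max), ("len", len)]}
    stats = {name: f.result() for name, f in futures.items()}

print(partial_sums, sum(partial_sums))  # [325, 950, 1575, 2200] 5050
print(stats)                            # {'min': 1, 'max': 100, 'len': 100}
```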

In practice, programs are rarely divided solely by one of these approaches; most use some hybrid combination of the two.

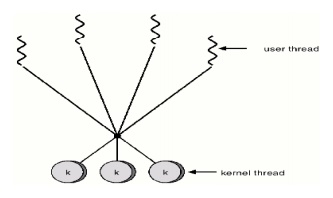

4. Multithreading models

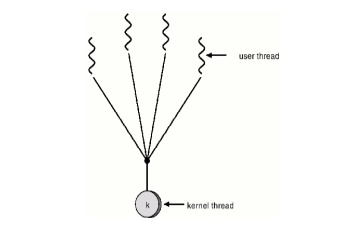

1. Many-to-One

2. One-to-One

3. Many-to-Many

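1. Many-to-One:

- Many user-level threads are mapped to a single kernel thread.
- Used on systems that do not support kernel threads.
- Because there is only one kernel thread, a blocking call by any thread blocks the entire process, and the threads cannot run in parallel on a multiprocessor.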

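2. One-to-One:

- Each user-level thread maps to a kernel thread.
- Another thread can run when one thread makes a blocking call, and threads can run in parallel on multiprocessors; the drawback is that creating a user thread requires creating the corresponding kernel thread.

Examples:
- Windows 95/98/NT/2000
- OS/2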
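CPython's `threading` module follows the one-to-one model on common platforms: each `threading.Thread` is backed by its own native kernel thread. A minimal sketch, assuming Python 3.8 or later for `threading.get_native_id()`: a barrier keeps all four threads alive at the same moment, so the kernel-assigned thread IDs they report must all differ. The names `record` and `native_ids` are hypothetical.

```python
import threading

# Illustrative sketch: `record` and `native_ids` are hypothetical names.
N = 4
barrier = threading.Barrier(N)   # keeps all N threads alive at the same moment
native_ids = []
lock = threading.Lock()

def record():
    with lock:
        native_ids.append(threading.get_native_id())   # ID assigned by the kernel
    barrier.wait()               # do not exit until every thread has recorded its ID

threads = [threading.Thread(target=record) for _ in range(N)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(len(set(native_ids)))      # 4: one kernel thread per threading.Thread
```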

3. Many-to-Many Model:

- Allows many user-level threads to be mapped to many kernel threads.
- Allows the operating system to create a sufficient number of kernel threads.

Examples:
- Solaris 2
- Windows NT/2000
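A toy version of the many-to-many model, again using generators as stand-ins for user-level threads (an illustrative assumption): six user tasks are multiplexed over two kernel threads that share one ready queue, so a task may run on either kernel thread from one step to the next. The names `user_task`, `kernel_worker`, `M_TASKS`, and `N_KERNEL` are hypothetical.

```python
import queue
import threading

# Illustrative sketch: all names below are hypothetical.
M_TASKS, N_KERNEL = 6, 2       # M user-level tasks over N kernel threads
log = []                       # (task name, step, native ID of the kernel thread)
log_lock = threading.Lock()

def user_task(name, steps):
    """A user-level task; each `yield` is a point where it may be rescheduled."""
    for i in range(steps):
        with log_lock:
            log.append((name, i, threading.get_native_id()))
        yield

ready = queue.Queue()          # shared ready queue of runnable user tasks
remaining = [M_TASKS]          # unfinished tasks (a list so workers can update it)
remaining_lock = threading.Lock()

def kernel_worker():
    """One kernel thread: repeatedly takes any ready task and runs one step of it."""
    while True:
        task = ready.get()
        if task is None:       # sentinel: every user task has finished
            return
        try:
            next(task)
            ready.put(task)    # still runnable: any kernel thread may pick it up next
        except StopIteration:
            with remaining_lock:
                remaining[0] -= 1
                if remaining[0] == 0:
                    for _ in range(N_KERNEL):
                        ready.put(None)   # wake every kernel thread so it can exit

for m in range(M_TASKS):
    ready.put(user_task(f"U{m}", 3))
workers = [threading.Thread(target=kernel_worker) for _ in range(N_KERNEL)]
for w in workers:
    w.start()
for w in workers:
    w.join()

print(len(log))                                     # 18 (6 tasks x 3 steps)
print(len({kid for _, _, kid in log}) <= N_KERNEL)  # True
```

Unlike the many-to-one sketch for user threads, a task here is not tied to one kernel thread; whichever kernel thread dequeues it next runs its next step.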
