Chapter: Software Testing : Levels of Testing

System test - The different types

When integration tests are completed, a software system has been assembled and its major subsystems have been tested. At this point the developers/testers begin to test it as a whole. System test planning should begin at the requirements phase with the development of a master test plan and requirements-based (black box) tests. System test planning is a complicated task. There are many components of the plan that need to be prepared, such as test approaches, costs, schedules, test cases, and test procedures. All of these are examined and discussed in Chapter 7.

System testing itself requires a large amount of resources. The goal is to ensure that the system performs according to its requirements. System test evaluates both functional behavior and quality requirements such as reliability, usability, performance, and security. This phase of testing is especially useful for detecting external hardware and software interface defects, for example, those causing race conditions, deadlocks, problems with interrupts and exception handling, and ineffective memory usage. After system test the software will be turned over to users for evaluation during acceptance test or alpha/beta test. The organization will want to be sure that the quality of the software has been measured and evaluated before users/clients are invited to use the system. In fact, system test serves as a good rehearsal scenario for acceptance test.

Because

system test often requires many resources, special laboratory equipment, and

long test times, it is usually performed by a team of testers. The best

scenario is for the team to be part of an independent testing group. The team

must do their best to find any weak areas in the software; therefore, it is

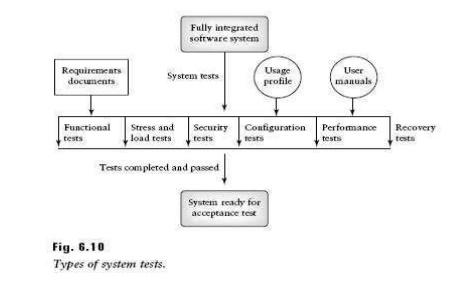

best that no developers are directly involved. There are several types of

system tests as shown on Figure 6.10. The types are as follows:

• Functional

testing

• Performance

testing

• Stress

testing

• Configuration

testing

• Security

testing

• Recovery

testing

Functional Testing

System functional tests have a great deal of overlap with acceptance tests. Very often the same test sets can apply to both. Both are demonstrations of the system's functionality. Functional tests at the system level are used to ensure that the behavior of the system adheres to the requirements specification. All functional requirements for the system must be achievable by the system. For example, if a personal finance system is required to allow users to set up accounts, add, modify, and delete entries in the accounts, and print reports, the function-based system and acceptance tests must ensure that the system can perform these tasks. Clients and users will expect this at acceptance test time. Functional tests are black box in nature. The focus is on the inputs and proper outputs for each function. Improper and illegal inputs must also be handled by the system, and system behavior under these circumstances must be observed. All functions must be tested. In fact, the tests should focus on the following goals.

• All types or classes of legal inputs must be accepted by the software.
• All classes of illegal inputs must be rejected (however, the system should remain available).
• All possible classes of system output must be exercised and examined.
• All effective system states and state transitions must be exercised and examined.
• All functions must be exercised.
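
As a minimal sketch of these goals, assume a hypothetical `Account` class for the personal finance example. The class, its methods, and the rule that amounts must be positive numbers are illustrative assumptions, not part of any specified system. The black-box checks below exercise a legal input class, several illegal input classes, availability after a rejection, and one state transition.

```python
class Account:
    """Hypothetical account for the personal finance example."""

    def __init__(self, name):
        self.name = name
        self.entries = {}      # entry id -> amount
        self._next_id = 1

    def add_entry(self, amount):
        # Assumed rule: amounts must be positive numbers.
        if isinstance(amount, bool) or not isinstance(amount, (int, float)):
            raise ValueError("amount must be a number")
        if amount <= 0:
            raise ValueError("amount must be positive")
        entry_id = self._next_id
        self.entries[entry_id] = amount
        self._next_id += 1
        return entry_id

    def delete_entry(self, entry_id):
        if entry_id not in self.entries:
            raise KeyError(entry_id)
        del self.entries[entry_id]


def run_functional_checks():
    """Return a dict mapping each check name to True (pass) or False."""
    results = {}
    account = Account("checking")

    # Legal input class: a positive amount is accepted.
    first = account.add_entry(25.0)
    results["legal_accepted"] = account.entries[first] == 25.0

    # Illegal input classes: non-positive and non-numeric amounts are rejected.
    for label, bad in [("zero", 0), ("negative", -5), ("text", "ten")]:
        try:
            account.add_entry(bad)
            results[f"illegal_{label}_rejected"] = False
        except ValueError:
            results[f"illegal_{label}_rejected"] = True

    # The system remains available after rejecting illegal input.
    second = account.add_entry(10)
    results["still_available"] = second in account.entries

    # State transition: a deleted entry is no longer present.
    account.delete_entry(first)
    results["delete_transition"] = first not in account.entries
    return results
```

Each check touches only inputs and observable outputs, which keeps the tests black box; a real suite would derive the input classes from the requirements specification rather than from the code.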

Performance Testing

An examination of a requirements document shows that there are two major types of requirements:

1. Functional requirements. Users describe what functions the software should perform. We test for compliance with these requirements at the system level with the functional-based system tests.
2. Quality requirements. These are nonfunctional in nature but describe quality levels expected for the software. One example of a quality requirement is performance level. The users may have objectives for the software system in terms of memory use, response time, throughput, and delays.

The goal of system performance tests is to see if the software meets the performance requirements. Testers also learn from performance test whether there are any hardware or software factors that affect the system's performance. Performance testing allows testers to tune the system; that is, to optimize the allocation of system resources. For example, testers may find that they need to reallocate memory pools, or to modify the priority level of certain system operations. Testers may also be able to project the system's future performance levels. This is useful for planning subsequent releases.

Performance objectives must be articulated clearly by the users/clients in the requirements documents, and be stated clearly in the system test plan. The objectives must be quantified. For example, a requirement that the system return a response to a query in "a reasonable amount of time" is not an acceptable requirement; the time requirement must be specified in a quantitative way. Results of performance tests are quantifiable. At the end of the tests the tester will know, for example, the number of CPU cycles used, the actual response time in seconds (minutes, etc.), and the actual number of transactions processed per time period. These can be evaluated with respect to requirements objectives.
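
The comparison of measured results against quantified objectives can be sketched as follows. This is an illustration under stated assumptions: `sample_query` is a stand-in for a real system query, and the threshold values passed to `meets_requirement` would come from the requirements document, not from this code.

```python
import time


def measure_performance(operation, runs=100):
    """Call `operation` repeatedly and return quantified results."""
    durations = []
    start = time.perf_counter()
    for _ in range(runs):
        began = time.perf_counter()
        operation()
        durations.append(time.perf_counter() - began)
    elapsed = time.perf_counter() - start
    return {
        "runs": runs,
        "max_response_s": max(durations),
        "mean_response_s": sum(durations) / runs,
        # Guard against a zero reading on a coarse clock.
        "throughput_per_s": runs / elapsed if elapsed > 0 else float("inf"),
    }


def meets_requirement(report, max_response_s, min_throughput_per_s):
    """Compare measured values with the quantified objectives."""
    return (report["max_response_s"] <= max_response_s
            and report["throughput_per_s"] >= min_throughput_per_s)


def sample_query():
    """Stand-in for a real system query (purely illustrative)."""
    return sum(range(1000))
```

A tester might call `measure_performance(sample_query, runs=50)` and pass the report to `meets_requirement` with the limits stated in the test plan. Measurements taken this way vary with the machine and its load, so real performance tests are normally run on controlled laboratory equipment.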

Stress Testing

When a system is tested with a load that causes it to allocate its resources in maximum amounts, this is called stress testing. For example, if an operating system is required to handle 10 interrupts/second and the load causes 20 interrupts/second, the system is being stressed. The goal of stress test is to try to break the system, that is, to find the circumstances under which it will crash. This is sometimes called "breaking the system." An everyday analogy can be found in the case where a suitcase being tested for strength and endurance is stomped on by a multiton elephant!

Stress testing is important because it can reveal defects in real-time and other types of systems, as well as weak areas where poor design could cause unavailability of service. For example, system prioritization orders may not be correct, transaction processing may be poorly designed and waste memory space, and timing sequences may not be appropriate for the required tasks. This is particularly important for real-time systems where unpredictable events may occur, resulting in input loads that exceed those described in the requirements documents. Stress testing often uncovers race conditions, deadlocks, depletion of resources in unusual or unplanned patterns, and upsets in normal operation of the software system.

System limits and threshold values are exercised. Hardware and software interactions are stretched to the limit. All of these conditions are likely to reveal defects and design flaws which may not be revealed under normal testing conditions. Stress testing is supported by many of the resources used for performance test, as shown in Figure 6.11. This includes the load generator. The testers set the load generator parameters so that load levels cause stress to the system. For example, in the case of a telecommunication system, the arrival rate of calls, the length of the calls, the number of misdials, as well as other system parameters should all be at stress levels. As in the case of performance test, special equipment and laboratory space may be needed for the stress tests. Examples are hardware or software probes and event loggers. The tests may need to run for several days. Planners must ensure resources are available for the long time periods required. The reader should note that stress tests should also be conducted at the integration level and, if applicable, at the unit level, to detect stress-related defects as early as possible in the testing process. This is especially critical in cases where redesign is needed.
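
The effect of setting load generator parameters above the required level can be illustrated with a toy simulation. This is only a sketch: `run_load` and its parameters are hypothetical, the model advances in whole seconds, and the queue capacity of 50 is an assumed figure. Only the 10 and 20 events/second rates come from the interrupt example given earlier.

```python
def run_load(arrival_rate, service_rate, queue_capacity, seconds):
    """Simulate a system second by second and report what happened.

    arrival_rate   -- events generated per second by the load generator
    service_rate   -- events the system can handle per second
    queue_capacity -- events that can wait before new ones are dropped
    """
    queued = 0
    handled = 0
    dropped = 0
    peak_queue = 0
    for _ in range(seconds):
        # New events arrive; anything beyond the queue capacity is lost.
        queued += arrival_rate
        if queued > queue_capacity:
            dropped += queued - queue_capacity
            queued = queue_capacity
        peak_queue = max(peak_queue, queued)
        # The system services as many waiting events as it can.
        served = min(queued, service_rate)
        queued -= served
        handled += served
    return {"handled": handled, "dropped": dropped, "peak_queue": peak_queue}


# Nominal load matches the requirement; stress load doubles it.
nominal = run_load(arrival_rate=10, service_rate=10, queue_capacity=50, seconds=60)
stress = run_load(arrival_rate=20, service_rate=10, queue_capacity=50, seconds=60)
```

Under the nominal load nothing is dropped, while under the doubled load the queue fills within a few seconds and events begin to be lost. A real stress test would observe the actual system with probes and event loggers rather than a model, but the same comparison of nominal and stressed runs applies.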

Stress testing is important from the user/client point of view. When a system operates correctly under conditions of stress, clients have confidence that the software can perform as required. Beizer suggests that devices used for monitoring stress situations provide users/clients with visible and tangible evidence that the system is being stressed.

Configuration Testing

Typical software systems interact with hardware devices such as disc drives, tape drives, and printers. Many software systems also interact with multiple CPUs, some of which are redundant. Software that controls real-time processes, or embedded software, also interfaces with devices, but these are very specialized hardware items such as missile launchers and nuclear power device sensors. In many cases, users require that devices be interchangeable, removable, or reconfigurable. For example, a printer of type X should be substitutable for a printer of type Y, CPU A should be removable from a system composed of several other CPUs, and sensor A should be replaceable with sensor B. Very often the software will have a set of commands, or menus, that allows users to make these configuration changes. Configuration testing allows developers/testers to evaluate system performance and availability when hardware exchanges and reconfigurations occur. Configuration testing also requires many resources, including the multiple hardware devices used for the tests. If a system does not have specific requirements for device configuration changes, then large-scale configuration testing is not essential.

According to Beizer, configuration testing has the following objectives:

• Show that all the configuration changing commands and menus work properly.
• Show that all interchangeable devices are really interchangeable, and that they each enter the proper states for the specified conditions.
• Show that the system's performance level is maintained when devices are interchanged, or when they fail.

Several types of operations should be performed during configuration test. Some sample operations for testers are:

(i) rotate and permute the positions of devices to ensure physical/logical device permutations work for each device (e.g., if there are two printers A and B, exchange their positions);
(ii) induce malfunctions in each device, to see if the system properly handles the malfunction;
(iii) induce multiple device malfunctions to see how the system reacts.

These operations will help to reveal problems (defects) relating to hardware/software interactions when hardware exchanges and reconfigurations occur. Testers observe the consequences of these operations and determine whether the system can recover gracefully, particularly in the case of a malfunction.
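
The three sample operations can be sketched against a small stand-in model. Everything here is hypothetical: `Printer`, `PrintSystem`, the slot-order failover policy, and the `working` flag used to induce a malfunction are assumptions made for illustration, since real configuration tests run against physical devices.

```python
class Printer:
    """Hypothetical device; clear `working` to induce a malfunction."""

    def __init__(self, name):
        self.name = name
        self.working = True

    def print_page(self, text):
        if not self.working:
            raise OSError(f"{self.name} malfunction")
        return f"{self.name}:{text}"


class PrintSystem:
    """Hypothetical system that tries devices in slot order."""

    def __init__(self, devices):
        self.slots = list(devices)

    def swap(self, i, j):
        self.slots[i], self.slots[j] = self.slots[j], self.slots[i]

    def print_page(self, text):
        for device in self.slots:
            try:
                return device.print_page(text)
            except OSError:
                continue          # fall through to the next device
        raise RuntimeError("no working printer")


def run_configuration_checks():
    """Return a dict mapping each check name to True (pass) or False."""
    results = {}
    a, b = Printer("A"), Printer("B")
    system = PrintSystem([a, b])
    results["default_order"] = system.print_page("x") == "A:x"

    # (i) Exchange the positions of printers A and B.
    system.swap(0, 1)
    results["exchanged_positions"] = system.print_page("x") == "B:x"

    # (ii) Induce a malfunction in one device.
    b.working = False
    results["single_malfunction_handled"] = system.print_page("x") == "A:x"

    # (iii) Induce multiple malfunctions; the failure must be reported cleanly.
    a.working = False
    try:
        system.print_page("x")
        results["multiple_malfunction_reported"] = False
    except RuntimeError:
        results["multiple_malfunction_reported"] = True
    return results
```

The final check treats a clear error report as graceful behavior when no device remains; what counts as graceful recovery in a given system depends on its requirements.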

Security Testing

Designing and testing software systems to ensure that they are safe and secure is a big issue facing software developers and test specialists. Recently, safety and security issues have taken on additional importance due to the proliferation of commercial applications for use on the Internet. If Internet users believe that their personal information is not secure and is available to those with intent to do harm, the future of e-commerce is in peril! Security testing evaluates system characteristics that relate to the availability, integrity, and confidentiality of system data and services. Users/clients should be encouraged to make sure their security needs are clearly known at requirements time, so that security issues can be addressed by designers and testers. Computer software and data can be compromised by:

(i) criminals intent on doing damage, stealing data and information, causing denial of service, invading privacy;
(ii) errors on the part of honest developers/maintainers who modify, destroy, or compromise data because of misinformation, misunderstandings, and/or lack of knowledge.

Both criminal behavior and errors that do damage can be perpetrated by those inside and outside of an organization. Attacks can be random or systematic. Damage can be done through various means such as:

(i) viruses;
(ii) trojan horses;
(iii) trap doors;
(iv) illicit channels.

The effects of security breaches could be extensive and can cause:

(i) loss of information;
(ii) corruption of information;
(iii) misinformation;
(iv) privacy violations;
(v) denial of service.

Physical, psychological, and economic harm to persons or property can result from security breaches. Developers try to ensure the security of their systems through use of protection mechanisms such as passwords, encryption, virus checkers, and the detection and elimination of trap doors. Developers should realize that protection from unwanted entry and other security-oriented matters must be addressed at design time. A simple case in point relates to the characteristics of a password. Designers need answers to the following: What is the minimum and maximum allowed length for the password? Can it be pure alphabetical or must it be a mixture of alphabetical and other characters? Can it be a dictionary word? Is the password permanent, or does it expire periodically? Users can specify their needs in this area in the requirements document. A password checker can enforce any rules the designers deem necessary to meet security requirements.

Password checking and examples of other areas to focus on during security testing are described below.

Password Checking. Test the password checker to ensure that users will select a password that meets the conditions described in the password checker specification. Equivalence class partitioning and boundary value analysis based on the rules and conditions that specify a valid password can be used to design the tests.

Legal and Illegal Entry with Passwords. Test for legal and illegal system/data access via legal and illegal passwords.

Password Expiration. If it is decided that passwords will expire after a certain time period, tests should be designed to ensure the expiration period is properly supported and that users can enter a new and appropriate password.

Encryption. Design test cases to evaluate the correctness of both encryption and decryption algorithms for systems where data/messages are encoded.

Browsing. Evaluate browsing privileges to ensure that unauthorized browsing does not occur. Testers should attempt to browse illegally and observe system responses. They should determine what types of private information can be inferred by both legal and illegal browsing.

Trap Doors. Identify any unprotected entries into the system that may allow access through unexpected channels (trap doors). Design tests that attempt to gain illegal entry and observe results. Testers will need the support of designers and developers for this task. In many cases an external "tiger team," as described below, is hired to attempt such a break-in.

Viruses. Design tests to ensure that system virus checkers prevent or curtail entry of viruses into the system. Testers may attempt to infect the system with various viruses and observe the system response. If a virus does penetrate the system, testers will want to determine what has been damaged and to what extent.
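
To make the password checking item above concrete, the sketch below designs test cases by equivalence class partitioning and boundary value analysis. The rules are assumptions chosen for illustration (length between 8 and 16 characters, and a mixture of letters and other characters); a real checker would take its rules from the password checker specification.

```python
MIN_LEN, MAX_LEN = 8, 16   # assumed limits, for illustration only


def is_valid_password(candidate):
    """Hypothetical checker: length in range, letters mixed with other characters."""
    if not MIN_LEN <= len(candidate) <= MAX_LEN:
        return False
    has_letter = any(ch.isalpha() for ch in candidate)
    has_other = any(not ch.isalpha() for ch in candidate)
    return has_letter and has_other


# (description, input, expected result)
CASES = [
    # Boundary values around the minimum and maximum lengths.
    ("one below minimum length", "abc123!", False),
    ("exactly minimum length", "abc1234!", True),
    ("exactly maximum length", "a1" * 8, True),
    ("one above maximum length", "a1" * 8 + "x", False),
    # Equivalence classes for character composition.
    ("letters only", "abcdefghij", False),
    ("no letters", "1234567890", False),
    ("letters mixed with others", "pass-word-42", True),
]


def run_cases():
    """Return the descriptions of all cases whose result differs from expected."""
    return [name for name, value, expected in CASES
            if is_valid_password(value) != expected]
```

An empty list from `run_cases()` means every class and boundary behaved as expected. Further classes, such as dictionary words or expired passwords, would be added in the same table-driven way if the specification calls for them.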

Even with the backing of the best intents of the designers, developers/testers can never be sure that a software system is totally secure even after extensive security testing. If security is an especially important issue, as in the case of network software, then the best approach, if resources permit, is to hire a so-called "tiger team," which is an outside group of penetration experts who attempt to breach the system security. Although a testing group in the organization can be involved in testing for security breaches, the tiger team can attack the problem from a different point of view. Before the tiger team starts its work the system should be thoroughly tested at all levels. The testing team should also try to identify any trap doors and other vulnerable points. Even with the use of a tiger team there is never any guarantee that the software is totally secure.

Recovery Testing

Recovery testing subjects a system to losses of resources in order to determine if it can recover properly from these losses. This type of testing is especially important for transaction systems, for example, on-line banking software. A test scenario might be to emulate loss of a device during a transaction. Tests would determine if the system could return to a well-known state, and that no transactions have been compromised. Systems with automated recovery are designed for this purpose. They usually have multiple CPUs and/or multiple instances of devices, and mechanisms to detect the failure of a device. They also have a so-called "checkpoint" system that meticulously records transactions and system states periodically so that these are preserved in case of failure. This information allows the system to return to a known state after the failure. The recovery testers must ensure that the device monitoring system and the checkpoint software are working properly.

Beizer advises that testers focus on the following areas during recovery testing:

1. Restart. The current system state and transaction states are discarded. The most recent checkpoint record is retrieved and the system initialized to the states in the checkpoint record. Testers must ensure that all transactions have been reconstructed correctly and that all devices are in the proper state. The system should then be able to begin to process new transactions.
2. Switchover. The ability of the system to switch to a new processor must be tested. Switchover is the result of a command or a detection of a faulty processor by a monitor.

In each of these testing situations all transactions and processes must be carefully examined to detect:

(i) loss of transactions;
(ii) merging of transactions;
(iii) incorrect transactions;
(iv) an unnecessary duplication of a transaction.

A good way to expose such problems is to perform recovery testing under a stressful load. Transaction inaccuracies and system crashes are likely to occur with the result that defects and design flaws will be revealed.
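
A restart check of the kind described above can be sketched with a small model. The `TransactionSystem` class is hypothetical, and the recovery scheme it uses (restore the last checkpoint, then replay a durable log of later transactions) is one common approach assumed here for illustration; the text does not prescribe a particular mechanism.

```python
import copy


class TransactionSystem:
    """Hypothetical system with a durable log and periodic checkpoints."""

    def __init__(self):
        self.balances = {}
        self.log = []                 # durable record of every transaction
        self.checkpoint = ({}, 0)     # (saved balances, log position)

    def apply(self, account, amount):
        self.log.append((account, amount))
        self.balances[account] = self.balances.get(account, 0) + amount

    def take_checkpoint(self):
        self.checkpoint = (copy.deepcopy(self.balances), len(self.log))

    def crash(self):
        self.balances = None          # in-memory state is lost

    def restart(self):
        saved, position = self.checkpoint
        self.balances = copy.deepcopy(saved)
        # Replay only the transactions logged after the checkpoint.
        for account, amount in self.log[position:]:
            self.balances[account] = self.balances.get(account, 0) + amount


def recovery_check():
    """Return True if the state after restart equals the state before the crash."""
    system = TransactionSystem()
    system.apply("alice", 100)
    system.take_checkpoint()
    system.apply("alice", -30)
    system.apply("bob", 50)
    expected = dict(system.balances)

    system.crash()
    system.restart()
    # A lost or duplicated transaction would make the balances differ.
    return system.balances == expected
```

Comparing the full state before the crash with the state after restart catches lost, duplicated, and incorrect transactions in one assertion. Running the same check while a load generator is active would combine recovery and stress testing as suggested above.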
