Chapter: Modern Analytical Chemistry: Quality Assurance

Evaluating Quality Assurance Data: Prescriptive Approach

Evaluating Quality Assurance Data

In the previous section we described several internal methods of quality assessment that provide quantitative estimates of the systematic and random errors present in an analytical system. Now we turn our attention to how this numerical information is incorporated into the written directives of a complete quality assurance program. Two approaches to developing quality assurance programs have been described [9]: a prescriptive approach, in which an exact method of quality assessment is prescribed; and a performance-based approach, in which any form of quality assessment is acceptable, provided that an acceptable level of statistical control can be demonstrated.

Prescriptive Approach

With a prescriptive approach to quality assessment, duplicate samples, blanks, standards, and spike recoveries are measured following a specific protocol. The result for each analysis is then compared with a single predetermined limit. If this limit is exceeded, an appropriate corrective action is taken. Prescriptive approaches to quality assurance are common for programs and laboratories subject to federal regulation. For example, the Food and Drug Administration (FDA) specifies quality assurance practices that must be followed by laboratories analyzing products regulated by the FDA.
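As a minimal sketch of what "compared with a single predetermined limit" can look like in practice, the Python snippet below computes a percent spike recovery as 100 × (spiked result − unspiked result) / amount added and checks it against fixed limits. The function names, the concentrations, and the 85–115% window are illustrative assumptions, not values taken from any regulation.

```python
def percent_recovery(spiked_result, unspiked_result, amount_added):
    """Percent recovery of a spike: 100 * (spiked - unspiked) / amount added."""
    if amount_added <= 0:
        raise ValueError("amount_added must be positive")
    return 100.0 * (spiked_result - unspiked_result) / amount_added


def within_limits(value, lower, upper):
    """True if value lies inside the predetermined limits (inclusive)."""
    return lower <= value <= upper


# Hypothetical data: all three concentrations share the same units.
recovery = percent_recovery(spiked_result=14.6, unspiked_result=5.0,
                            amount_added=10.0)

# Hypothetical limits; a real protocol prescribes its own.
action = ("accept" if within_limits(recovery, 85.0, 115.0)
          else "take corrective action")
```

Under a prescriptive protocol the limits are fixed in advance by the governing document, so the laboratory's only decision is whether the measured value falls inside them.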

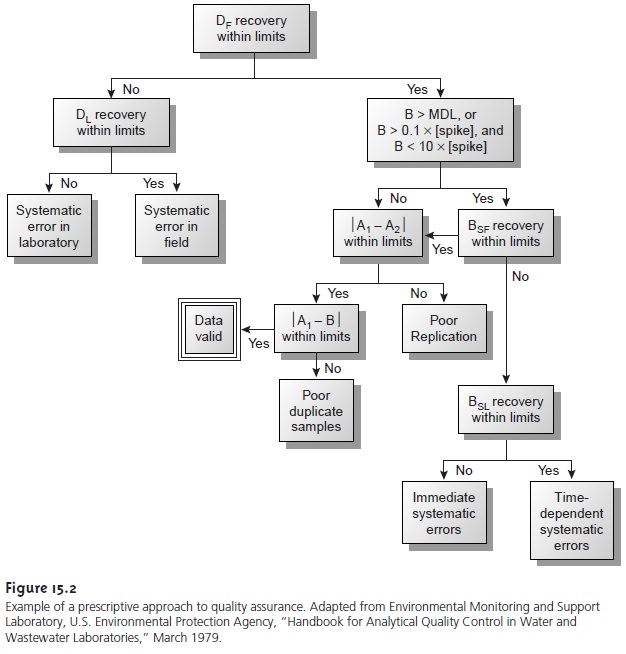

A good example of a prescriptive approach to quality assessment is the protocol outlined in Figure 15.2, published by the Environmental Protection Agency (EPA) for laboratories involved in monitoring studies of water and wastewater [10]. Independent samples A and B are collected simultaneously at the sample site. Sample A is split into two equal-volume samples, which are labeled A1 and A2. Sample B is also split into two equal-volume samples, one of which, BSF, is spiked with a known amount of analyte. A field blank, DF, also is spiked with the same amount of analyte. All five samples (A1, A2, B, BSF, and DF) are preserved if necessary and transported to the laboratory for analysis.

The first sample to be analyzed is the field blank. If its spike recovery is unacceptable, indicating that a systematic error is present, then a laboratory method blank, DL, is prepared and analyzed. If the spike recovery for the method blank is also unsatisfactory, then the systematic error originated in the laboratory. An acceptable spike recovery for the method blank, however, indicates that the systematic error occurred in the field or during transport to the laboratory. Systematic errors in the laboratory can be corrected, and the analysis continued. Any systematic errors occurring in the field, however, cast uncertainty on the quality of the samples, making it necessary to collect new samples.
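The field-blank logic described above can be sketched as a small decision function. This is an illustrative reading of the text, not code from the EPA protocol; the function name, the boolean inputs, and the returned strings are all assumptions.

```python
def diagnose_field_blank(field_blank_ok, method_blank_ok=None):
    """Return the next action after analyzing the spiked field blank, DF.

    field_blank_ok  -- True if the spike recovery for DF is acceptable
    method_blank_ok -- True or False for the laboratory method blank, DL,
                       or None if DL has not been analyzed yet
    """
    if field_blank_ok:
        return "proceed to sample B"
    if method_blank_ok is None:
        return "prepare and analyze method blank DL"
    if method_blank_ok:
        # DL recovers the spike but DF does not, so the error arose
        # in the field or during transport.
        return "field or transport error: collect new samples"
    # Both blanks fail, so the error originated in the laboratory.
    return "laboratory error: correct it and continue the analysis"
```

For example, `diagnose_field_blank(False, True)` reports a field or transport error, the one outcome that forces new samples to be collected.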

If the field blank is satisfactory, then sample B is analyzed. If the result for B is above the method’s detection limit, or if it is within the range of 0.1 to 10 times the amount of analyte spiked into BSF, then a spike recovery for BSF is determined.

An unacceptable spike recovery for BSF indicates the presence of a systematic error involving the sample. To determine the source of the systematic error, a laboratory spike, BSL, is prepared using sample B and analyzed. If the spike recovery for BSL is acceptable, then the systematic error requires a long time to have a noticeable effect on the spike recovery. One possible explanation is that the analyte has not been properly preserved or has been held beyond the acceptable holding time. An unacceptable spike recovery for BSL suggests an immediate systematic error, such as that due to the influence of the sample’s matrix. In either case, the systematic errors are fatal and must be corrected before the sample is reanalyzed.
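The comparison of the field spike, BSF, with the laboratory spike, BSL, follows the same pattern and can be sketched as below. The function name and the returned descriptions are assumptions made for illustration; the protocol itself specifies only the decisions, not any code.

```python
def diagnose_sample_spike(bsf_ok, bsl_ok=None):
    """Classify a systematic error involving the sample.

    bsf_ok -- True if the spike recovery for the field spike BSF is acceptable
    bsl_ok -- True or False for the laboratory spike BSL,
              or None if BSL has not been analyzed yet
    """
    if bsf_ok:
        return "proceed to duplicates A1 and A2"
    if bsl_ok is None:
        return "prepare and analyze laboratory spike BSL"
    if bsl_ok:
        # A fresh spike is recovered, so the error needs time to develop.
        return "time-dependent error (e.g. preservation or holding time)"
    # Even a fresh spike is not recovered.
    return "immediate error (e.g. matrix effect)"
```

Both failure outcomes are fatal in the sense used above: the cause must be corrected before the sample is reanalyzed.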

If the spike recovery for BSF is acceptable, or if the result for sample B is below the method’s detection limit or outside the range of 0.1 to 10 times the amount of analyte spiked in BSF, then the duplicate samples A1 and A2 are analyzed. The results for A1 and A2 are discarded if the difference between their values is excessive. If the difference between the results for A1 and A2 is within the accepted limits, then the results for samples A1 and B are compared. Since samples collected from the same sampling site at the same time should be identical in composition, the results are discarded if the difference between their values is unsatisfactory, and accepted if the difference is satisfactory.
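The two duplicate comparisons can be sketched as follows. The text does not say how "difference" is measured or what the limits are, so this sketch assumes an absolute difference and takes both limits as caller-supplied parameters; a real protocol might prescribe a relative difference instead.

```python
def evaluate_duplicates(a1, a2, b, max_split_diff, max_site_diff):
    """Apply the A1/A2 check and then the A1/B check.

    max_split_diff -- hypothetical limit on |A1 - A2| (splits of one sample)
    max_site_diff  -- hypothetical limit on |A1 - B| (independent samples)
    """
    if abs(a1 - a2) > max_split_diff:
        return "discard: A1 and A2 disagree"
    if abs(a1 - b) > max_site_diff:
        return "discard: A1 and B disagree"
    return "accept"
```

The order matters: A1 is compared with B only after the split duplicates agree, because a poor A1/A2 result already invalidates A1 as a basis for comparison.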

This protocol requires four to five evaluations of quality assessment data before the result for a single sample can be accepted, a process that must be repeated for each analyte and for each sample.

Other prescriptive protocols are equally demanding. For example, Figure 15.3 shows a portion of the quality assurance protocol used for the graphite furnace atomic absorption analysis of trace metals in aqueous solutions. This protocol involves the analysis of an initial calibration verification standard and an initial calibration blank, followed by the analysis of samples in groups of ten. Each group of samples is preceded and followed by continuing calibration verification (CCV) and continuing calibration blank (CCB) quality assessment samples. Results for each group of ten samples can be accepted only if both sets of CCV and CCB quality assessment samples are acceptable.
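The bracketing rule can be sketched in a few lines of Python. The sketch assumes that the CCV/CCB pair closing one group also serves as the opening pair for the next group, and that each check point has already been reduced to a single pass/fail value; the function name and data layout are hypothetical.

```python
def accepted_samples(samples, qc_checks, group_size=10):
    """Return the samples whose bracketing CCV/CCB checks both passed.

    samples   -- results in the order they were analyzed
    qc_checks -- qc_checks[0] precedes the first group; qc_checks[k + 1]
                 follows group k. Each entry is True only if both the
                 CCV and the CCB at that point were acceptable.
    """
    groups = [samples[i:i + group_size]
              for i in range(0, len(samples), group_size)]
    if len(qc_checks) != len(groups) + 1:
        raise ValueError("need one QC check before and after every group")

    accepted = []
    for k, group in enumerate(groups):
        # A group is kept only if the checks on both sides passed.
        if qc_checks[k] and qc_checks[k + 1]:
            accepted.extend(group)
    return accepted


# Hypothetical run: 25 samples, with the third check point failing.
results = accepted_samples(list(range(25)), [True, True, False, True])
```

Under the shared-check assumption, a single failed check point rejects the groups on both sides of it, which is why only the first ten samples survive in this example.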

The advantage to a prescriptive approach to quality assurance is that a single consistent set of guidelines is used by all laboratories to control the quality of analytical results. A significant disadvantage, however, is that the ability of a laboratory to produce quality results is not taken into account when determining the frequency of collecting and analyzing quality assessment data. Laboratories with a record of producing high-quality results are forced to spend more time and money on quality assessment than is perhaps necessary. At the same time, the frequency of quality assessment may be insufficient for laboratories with a history of producing results of poor quality.
