Chapter: Distributed Systems : Process & Resource Management

Distributed Shared Memory

DISTRIBUTED SHARED MEMORY

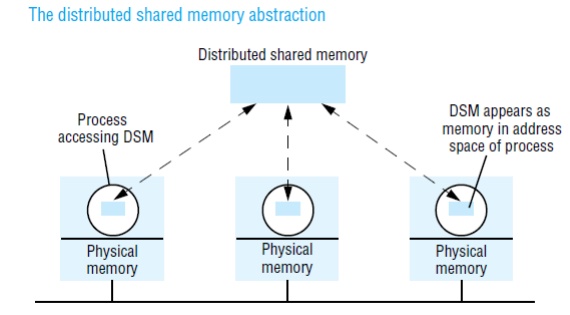

Distributed

shared memory (DSM) is an abstraction used for sharing data between computers

that do not share physical memory. Processes access DSM by reads and updates to

what appears to be ordinary memory within their address space. However, an

underlying runtime system ensures transparently that processes executing at

different computers observe the updates made by one another.

The main

point of DSM is that it spares the programmer the concerns of message passing

when writing applications that might otherwise have to use it. DSM is primarily

a tool for parallel applications or for any distributed application or group of

applications in which individual shared data items can be accessed directly.

DSM is in general less appropriate in client-server systems, where clients

normally view server-held resources as abstract data and access them by request

(for reasons of modularity and protection).

Message

passing cannot be avoided altogether in a distributed system: in the absence of

physically shared memory, the DSM runtime support has to send updates in

messages between computers. DSM systems manage replicated data: each computer

has a local copy of recently accessed data items stored in DSM, for speed of

access.

In

distributed memory multiprocessors and clusters of off-the-shelf computing

components (see Section 6.3), the processors do not share memory but are

connected by a very high-speed network. These systems, like general-purpose

distributed systems, can scale to much greater numbers of processors than a

shared-memory multiprocessor’s 64 or so. A central question that has been

pursued by the DSM and multiprocessor research communities is whether the

investment in knowledge of shared memory algorithms and the associated software

can be directly transferred to a more scalable distributed memory architecture.

Message passing versus DSM

As a

communication mechanism, DSM is comparable with message passing rather than

with request-reply-based communication, since its application to parallel

processing, in particular, entails the use of asynchronous communication. The

DSM and message passing approaches to programming can be contrasted as follows:

Programming model:

Under the

message passing model, variables have to be marshalled from one process,

transmitted and unmarshalled into other variables at the receiving process. By

contrast, with shared memory the processes involved share variables directly,

so no marshalling is necessary – even of pointers to shared variables – and

thus no separate communication operations are necessary.

Efficiency :

Experiments

show that certain parallel programs developed for DSM can be made to perform

about as well as functionally equivalent programs written for message passing

platforms on the same hardware – at least in the case of relatively small numbers

of computers (ten or so). However, this result cannot be generalized. The

performance of a program based on DSM depends upon many factors, as we shall

discuss below – particularly the pattern of data sharing.

Implementation approaches to DSM

Distributed

shared memory is implemented using one or a combination of specialized

hardware, conventional paged virtual memory or middleware:

Hardware:

Shared-memory

multiprocessor architectures based on a NUMA architecture rely on specialized

hardware to provide the processors with a consistent view of shared memory.

They handle memory LOAD and STORE instructions by communicating with remote

memory and cache modules as necessary to store and retrieve data.

Paged

virtual memory:

Many

systems, including Ivy and Mether , implement DSM as a region of virtual memory

occupying the same address range in the address space of every participating

process.

#include "world.h"

struct shared { int a, b; }; Program Writer:

main()

{

struct shared *p;

methersetup(); /* Initialize the Mether runtime */

p = (struct shared *)METHERBASE;

/* overlay structure on METHER segment */ p->a =

p->b = 0; /* initialize fields to zero */

while(TRUE){ /* continuously update structure

fields */ p –>a = p –>a + 1;

p –>b = p –>b - 1;

}

}

Program Reader:

main()

{

struct shared *p; methersetup();

p = (struct shared *)METHERBASE;

while(TRUE){ /* read the fields once every second

*/ printf("a = %d, b = %d\n", p –>a, p –>b);

sleep(1);

}

}

Middleware:

Some

languages such as Orca, support forms of DSM without any hardware or paging

support, in a platform-neutral way. In this type of implementation, sharing is

implemented by communication between instances of the user-level support layer

in clients and servers. Processes make calls to this layer when they access

data items in DSM. The instances of this layer at the different computers

access local data items and communicate as necessary to maintain consistency.

Design and implementation issues

The

synchronization model used to access DSM consistently at the application level;

the DSM consistency model, which governs the consistency of data values

accessed from different computers; the update options for communicating written

values between computers; the granularity of sharing in a DSM implementation;

and the problem of thrashing.

Structure

A DSM

system is just such a replication system. Each application process is presented

with some abstraction of a collection of objects, but in this case the

‘collection’ looks more or less like memory. That is, the objects can be

addressed in some fashion or other. Different approaches to

DSM vary

in what they consider to be an ‘object’ and in how objects are addressed. We

consider three approaches, which view DSM as being composed respectively of

contiguous bytes, language-level objects or immutable data items.

Byte-oriented

This type

of DSM is accessed as ordinary virtual memory – a contiguous array of bytes. It

is the view illustrated above by the Mether system. It is also the view of many

other DSM systems, including Ivy.It allows applications (and language

implementations) to impose whatever data structures they want on the shared

memory. The shared objects are directly addressible memory locations (in

practice, the shared locations may be multi-byte words rather than individual

bytes). The only operations upon those objects are read (or LOAD) and write

(or STORE). If x and y are two memory locations, then we

denote instances of these operations as follows:

Object-oriented

The

shared memory is structured as a collection of language-level objects with

higher-level semantics than simple read

/ write variables, such as stacks and

dictionaries. The contents of the shared memory are changed only by invocations

upon these objects and never by direct access to their member variables. An

advantage of viewing memory in this way is that object semantics can be

utilized when enforcing consistency.

Immutable data

When

reading or taking a tuple from tuple space, a process provides a tuple

specification and the tuple space returns any tuple that matches that

specification – this is a type of associative addressing. To enable processes

to synchronize their activities, the read

and take operations both block until

there is a matching tuple in the tuple space.

Synchronization model

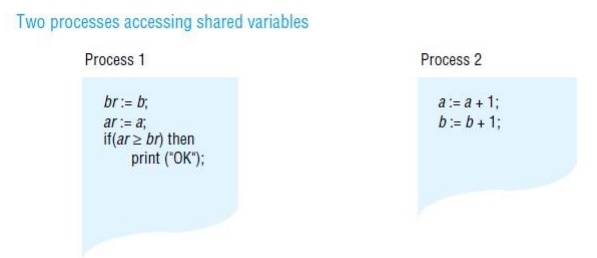

Many

applications apply constraints concerning the values stored in shared memory.

This is as true of applications based on DSM as it is of applications written

for sharedmemory multiprocessors (or indeed for any concurrent programs that

share data, such as operating system kernels and multi-threaded servers). For

example, if a and b are two variables stored in DSM, then

a constraint might be that a=b

always. If two or moreprocesses execute the following code:

a:= a + 1; b := b + 1;

then an

inconsistency may arise. Suppose a

and b are initially zero and that

process 1gets as far as setting a to

1. Before it can increment b, process

2 sets a to 2 and b to 1.

Consistency model

The local

replica manager is implemented by a combination of middleware (the DSM runtime

layer in each process) and the kernel. It is usual for middleware to perform

the majority of DSM processing. Even in a page-based DSM implementation, the

kernel usually provides only basic page mapping, page-fault handling and

communication mechanisms and middleware is responsible for implementing the

page-sharing policies. If DSM segments are persistent, then one or more storage

servers (for example, file servers) will also act as replica managers.

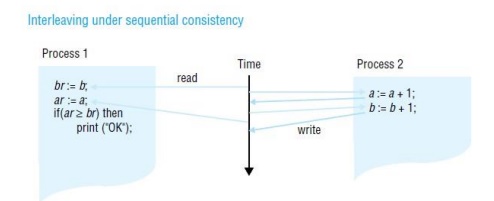

Sequential consistency

A DSM

system is said to be sequentially consistent if for any execution there is some interleaving of the series of

operations issued by all the processes that satisfies the following two

criteria:

SC1: The

interleaved sequence of operations is such that if R(x) a occurs in the

sequence, then either the last write operation that occurs before it in the

interleaved sequence is W(x) a, or no write operation occurs before it and a is the initial value of x.

SC2: The

order of operations in the interleaving is consistent with the program order in

which each individual client executed them.

Coherence

Coherence

is an example of a weaker form of consistency. Under coherence, every process

agrees on the order of write operations to the same location, but they do not

necessarily agree on the ordering of write operations to different locations.

We can think of coherence as sequential consistency on a locationby- location

basis. Coherent DSM can be implemented by taking a protocol for implementing

sequential consistency and applying it separately to each unit of replicated

data – for example, each page.

Weak consistency

This

model exploits knowledge of synchronization operations in order to relax memory

consistency, while appearing to the programmer to implement sequential

consistency (at least, under certain conditions that are beyond the scope of

this book). For example, if the programmer uses a lock to implement a critical

section, then a DSM system can assume that no other process may access the data

items accessed under mutual exclusion within it. It is therefore redundant for

the DSM system to propagate updates to these items until the process leaves the

critical section.

While

items are left with ‘inconsistent’ values some of the time, they are not

accessed at those points; the execution appears to be sequentially consistent.

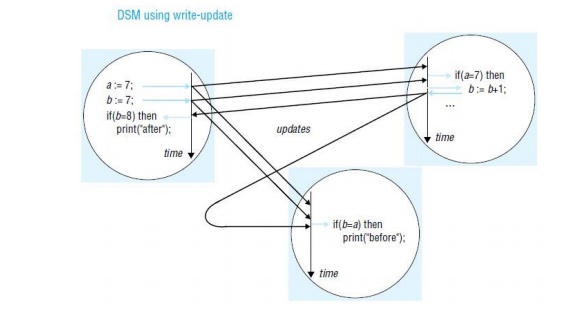

Update options

Two main

implementation choices have been devised for propagating updates made by one

process to the others: write-update and write-invalidate. These are applicable

to a variety of DSM consistency models, including sequential consistency. In

outline, the options are as follows:

Write-update: The updates made by a process

are made locally and multicast to all other replica managers possessing a copy of the data item, which immediately

modify the data read by local processes. Processes read the local copies of

data items, without the need for communication. In addition to allowing

multiple readers, several processes may write the same data item at the same

time; this is known as multiple-reader/multiple-writer sharing.

Write-invalidate: This is commonly implemented in

the form of multiple-reader/ single-writer

sharing. At any time, a data item may either be accessed in read-only mode

by one or more processes, or it may be read and written by a single process. An

item that is currently accessed in read-only mode can be copied indefinitely to

other processes. When a process attempts to write to it, a multicast message is

first sent to all other copies to invalidate them and this is acknowledged

before the write can take place; the other processes are thereby prevented from

reading stale data (that is, data that are not up to date). Any processes

attempting to access the data item are blocked if a writer exists.

Granularity

An issue

that is related to the structure of DSM is the granularity of sharing.

Conceptually, all processes share the entire contents of a DSM. As programs

sharing DSM execute, however, only certain parts of the data are actually

shared and then only for certain times during the execution. It would clearly

be very wasteful for the DSM implementation always to transmit the entire

contents of DSM as processes access and update it.

Thrashing

A

potential problem with write-invalidate protocols is thrashing. Thrashing is

said to occur where the DSM runtime spends an inordinate amount of time

invalidating and transferring shared data compared with the time spent by

application processes doing useful work. It occurs when several processes

compete for the same data item, or for falsely shared data items.

Related Topics