Chapter: Multicore Application Programming For Windows, Linux, and Oracle Solaris : Synchronization and Data Sharing

Data Races

Data races are the most common programming error found in parallel code. A data race occurs when multiple threads use the same data item without synchronization and one or more of those threads are updating it. It is best illustrated by an example. Suppose you have the code shown in Listing 4.1, where a pointer to an integer variable is passed in and the function increments the value of this variable by 4.

Listing 4.1 Updating the Value at an Address

void update(int *a)
{
    *a = *a + 4;
}

The SPARC disassembly for this code would look something like the code shown in Listing 4.2.

Listing 4.2 SPARC Disassembly for Incrementing a Variable Held in Memory

ld  [%o0], %o1     // Load *a
add %o1, 4, %o1    // Add 4
st  %o1, [%o0]     // Store *a

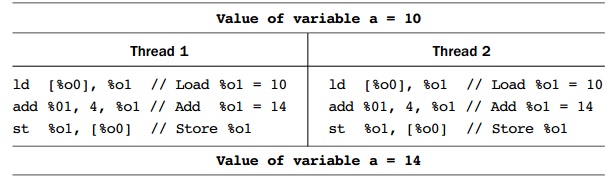

Suppose this code occurs in a multithreaded application and two threads try to increment the same variable at the same time. Table 4.1 shows the resulting instruction stream.

Table 4.1 Two Threads Updating the Same Variable

In the example, each thread adds 4 to the variable, but because they do so at exactly the same time, both load the same starting value and the second store simply repeats the first. The value 14 ends up being stored into the variable, which implies a starting value of 10. If the two threads had executed the code at different times, the variable would have ended up with the value 18.

This is the situation where both threads are running simultaneously. This illustrates a common kind of data race and possibly the easiest one to visualize.
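The interleaving described for Table 4.1 can be replayed deterministically in a single thread, which makes the lost update easy to see. The following is a minimal sketch, not code from the original text: the function names `interleaved_update` and `sequential_update` are hypothetical, the locals `r1` and `r2` stand in for each thread's private `%o1` register, and the starting value of 10 is an assumption inferred from the results 14 and 18 quoted above.

```c
/* Replays a two-thread schedule step by step in one thread.
 * r1 and r2 model each thread's private %o1 register (illustrative names). */

/* Both threads load before either stores: one update is lost. */
int interleaved_update(int initial)
{
    int a = initial;   /* shared variable in memory */
    int r1, r2;

    r1 = a;            /* thread 1: ld  [%o0], %o1  */
    r2 = a;            /* thread 2: ld  [%o0], %o1  */
    r1 = r1 + 4;       /* thread 1: add %o1, 4, %o1 */
    r2 = r2 + 4;       /* thread 2: add %o1, 4, %o1 */
    a = r1;            /* thread 1: st  %o1, [%o0]  */
    a = r2;            /* thread 2: st  %o1, [%o0], same value again */
    return a;
}

/* Thread 1 completes its load/add/store before thread 2 starts. */
int sequential_update(int initial)
{
    int a = initial;   /* shared variable in memory */
    int r1, r2;

    r1 = a; r1 = r1 + 4; a = r1;   /* thread 1 */
    r2 = a; r2 = r2 + 4; a = r2;   /* thread 2 */
    return a;
}
```

Under these assumptions, `interleaved_update(10)` returns 14 and `sequential_update(10)` returns 18.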

Another situation might be when one thread is running, but the other thread has been context switched off the processor. Imagine that the first thread has loaded the value of the variable a and then gets context switched off the processor. When it eventually runs again, the value of the variable a will have changed, and the final store of the restored thread will overwrite that change with a result computed from the old value, causing the variable a to regress.
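The context-switch case can be sketched the same way, as a single-threaded replay of one possible schedule. This is an illustration under stated assumptions rather than code from the original text: `preempted_update` and its `other_updates` parameter are hypothetical names, and the second thread is assumed to run the increment from Listing 4.1 to completion some number of times while the first thread is off the processor.

```c
/* Thread 1 is descheduled between its load and its store.
 * While it is off the processor, thread 2 completes `other_updates`
 * full load/add/store sequences on the same variable. */
int preempted_update(int initial, int other_updates)
{
    int a = initial;       /* shared variable in memory */
    int r1;                /* thread 1's saved register */
    int i;

    r1 = a;                /* thread 1: load, then context switched off */

    for (i = 0; i < other_updates; i++) {
        int r2 = a;        /* thread 2: load  */
        r2 = r2 + 4;       /* thread 2: add   */
        a = r2;            /* thread 2: store */
    }

    r1 = r1 + 4;           /* thread 1 resumes with its stale register */
    a = r1;                /* thread 1: store discards thread 2's work  */
    return a;
}
```

In this sketch, `preempted_update(10, 3)` returns 14: thread 2 moves the variable to 22, and thread 1's late store pulls it back to the value computed from the original 10.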

Consider the situation where one thread holds the value of a variable in a register and a second thread comes in and modifies this variable in memory while the first thread is running through its code. The value held in the register is now out of sync with the value held in memory.
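A small sketch can make the register/memory mismatch concrete. It is again a single-threaded model, not real concurrent code: the name `register_drift` is hypothetical, the local `reg` stands in for the first thread's register, and the second thread's store is assumed to be the increment by 4 from Listing 4.1.

```c
/* Models one thread keeping *a in a register while another thread
 * stores to the same address. Returns the memory value minus the
 * register value, i.e. how far the register copy has fallen behind. */
int register_drift(int *a)
{
    int reg = *a;      /* thread 1: value now lives in a register   */
    *a = *a + 4;       /* thread 2: updates the variable in memory  */
    return *a - reg;   /* thread 1 still holds the old value in reg */
}
```

With the variable starting at 10, the function returns 4: memory holds 14 while the modeled register still holds 10.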

The point is that a data race situation is created whenever a variable is loaded and another thread stores a new value to the same variable: One of the threads is now working with “old” data.

Data races can be hard to find. Take the previous code example to increment a variable. It might reside in the context of a larger, more complex routine. It can be hard to identify the sequence of problem instructions just by inspecting the code. The sequence of instructions causing the data race is only three instructions long, and it could be located within a region of code that is hundreds of instructions in length.

Not only is the problem hard to see from inspection, but the problem would occur only when both threads happen to be executing the same small region of code. So even if the data race is obvious and can potentially happen every time, it is quite possible that an application with a data race may run for a long time before errors are observed. In the example, unless you were printing out every value of the variable a and actually saw the variable take the same value twice, the data race would be hard to detect.

The potential for data races is part of what makes parallel programming hard. It is a common error to introduce data races into code, and it is hard to determine, by inspection, that one exists. Fortunately, there are tools to detect data races.
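To try the race from Listing 4.1 with real threads, something like the following POSIX threads sketch could be used. It is an assumption-laden illustration, not code from the original text: `worker`, `run_racy_updates`, `shared`, and `ITERATIONS` are hypothetical names, and the iteration count is arbitrary. Because the race is deliberate, the C standard gives this program undefined behavior; in practice the usual symptom is a final value lower than expected, and on many runs no symptom at all.

```c
#include <pthread.h>

#define ITERATIONS 1000000   /* illustrative count, chosen arbitrarily */

static int shared = 0;       /* deliberately unprotected shared variable */

/* Same body as Listing 4.1. */
void update(int *a)
{
    *a = *a + 4;
}

/* Each thread repeatedly increments the same variable. */
static void *worker(void *arg)
{
    int i;
    for (i = 0; i < ITERATIONS; i++)
        update((int *)arg);
    return NULL;
}

/* Runs two racing threads and returns the final value of the variable. */
int run_racy_updates(void)
{
    pthread_t t1, t2;

    shared = 0;
    pthread_create(&t1, NULL, worker, &shared);
    pthread_create(&t2, NULL, worker, &shared);
    pthread_join(t1, NULL);
    pthread_join(t2, NULL);
    return shared;
}
```

If no updates were lost, `run_racy_updates()` would return 8000000; a run that hits the race returns less. As examples of the kind of tool mentioned above, building with GCC or Clang using `-pthread -fsanitize=thread` (ThreadSanitizer), or running the program under Valgrind's Helgrind tool, would be expected to report the conflicting accesses in `update` even on runs where the final value happens to be correct.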
