Probability | Maths - Classical Approach | 9th Maths : UNIT 9 : Probability


Classical Approach


The chance of an event happening, when expressed quantitatively, is called probability.

For example, an urn contains 4 Red balls and 6 Blue balls. You choose a ball at random from the urn. What is the probability of choosing a Red ball?

The phrase ‘at random’ assures you that each of the 10 balls has the same chance (that is, probability) of being chosen. For a “fair” experiment, you may be blindfolded and the balls may be mixed up. This makes the outcomes “equally likely”.

The probability that a Red ball is chosen is 4/10 (you may also write it as 2/5 or 0.4).

What would be the probability of choosing a Blue ball? It is 6/10 (or 3/5 or 0.6).

Note that the sum of the two probabilities is 1. This means that no other outcome is possible: every draw gives either a Red ball or a Blue ball.
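The arithmetic of the urn example can be checked with a short Python sketch. It uses the standard library's `fractions.Fraction` so that 4/10 and 6/10 are reduced automatically to 2/5 and 3/5; the variable names are only illustrative.

```python
from fractions import Fraction

# Urn from the example: 4 Red balls and 6 Blue balls, each equally likely to be drawn.
red, blue = 4, 6
total = red + blue

p_red = Fraction(red, total)    # 4/10, reduced automatically to 2/5
p_blue = Fraction(blue, total)  # 6/10, reduced automatically to 3/5

print(p_red, float(p_red))    # 2/5 0.4
print(p_blue, float(p_blue))  # 3/5 0.6
print(p_red + p_blue)         # 1  (Red or Blue covers every possible outcome)
```

Using exact fractions rather than decimals keeps the sum exactly equal to 1, which mirrors the hand calculation.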

The approach we adopted in the above example is classical: it calculates the probability a priori. (The Latin phrase a priori means ‘without investigation or sensory experience’.) Note that this treatment is possible only when the outcomes are equally likely.

Thinking Corner

If the probability of success of an experiment is 0.4, what is the probability of failure?

Classical probability is so named because it was the first type of probability to be studied formally by mathematicians, during the 17th and 18th centuries.

Let S be the set of all equally likely outcomes of a random experiment. (S is called the sample space for the experiment.)

Let E be some particular outcome or combination of outcomes of an experiment. (E is called an event.)

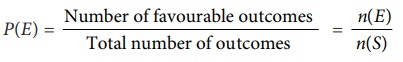

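The probability of an event E is denoted as P(E).

$$P(E) = \frac{\text{Number of favourable outcomes}}{\text{Total number of outcomes}} = \frac{n(E)}{n(S)}$$

where n(E) is the number of outcomes in E and n(S) is the number of outcomes in S.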
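The formula P(E) = n(E)/n(S) can be expressed directly with Python sets, treating S and E as sets of outcomes. This is a minimal sketch: the helper `classical_probability` and the ball labels `R1`…`R4`, `B1`…`B6` are hypothetical names chosen for illustration, and the function assumes, as the classical approach requires, that every outcome in S is equally likely.

```python
from fractions import Fraction

def classical_probability(event, sample_space):
    """Hypothetical helper: P(E) = n(E) / n(S).
    Valid only when every outcome in the sample space is equally likely."""
    if not event <= sample_space:
        raise ValueError("the event must be a subset of the sample space")
    return Fraction(len(event), len(sample_space))

# Label the 10 balls of the urn example so that each ball is a distinct outcome.
S = {f"R{i}" for i in range(1, 5)} | {f"B{i}" for i in range(1, 7)}
E = {ball for ball in S if ball.startswith("R")}  # event: "a Red ball is chosen"

print(len(S), len(E))               # 10 4
print(classical_probability(E, S))  # 2/5
```

Labelling the balls individually is what makes the outcomes equally likely; counting only "Red" and "Blue" as two outcomes would wrongly give each a probability of 1/2.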

The empirical approach (relative frequency theory) of probability holds that if an experiment is repeated a very large number of times and a particular outcome occurs in a certain percentage of those trials, then that percentage is close to the probability of that outcome.
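The relative frequency idea can be illustrated by simulating the urn experiment. This sketch assumes each draw is made with replacement (the ball is put back and the urn mixed again), so every trial has the same 4/10 chance of Red; the function name `relative_frequency` and its parameters are hypothetical. It uses Python's `random.Random` with an optional seed so runs can be repeated.

```python
import random

def relative_frequency(trials, red=4, blue=6, seed=None):
    """Hypothetical helper: draw one ball at random, with replacement,
    `trials` times and return the fraction of draws that were Red."""
    rng = random.Random(seed)
    urn = ["Red"] * red + ["Blue"] * blue
    hits = sum(1 for _ in range(trials) if rng.choice(urn) == "Red")
    return hits / trials

for n in (10, 100, 10_000, 100_000):
    print(n, relative_frequency(n, seed=1))
```

For small numbers of trials the printed fraction can be far from 0.4, but as the number of trials grows it tends to settle close to 0.4, the value found by the classical approach.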
