Chapter: Network Programming and Management : Advanced Sockets

Threads

When a process needs something to be performed by another entity, it forks a child process and lets the child perform the processing (as in a concurrent server program). The problems associated with this approach are:

a. All descriptors are copied from the parent to the child, which occupies more memory.

b. Interprocess communication is required to pass information between the parent and child after the fork. Returning information from the child to the parent takes more work.

Threads, being lightweight processes, help to overcome these drawbacks. However, because all threads in a process share the same global variables, access to those variables must be synchronized to avoid errors. In addition to these variables, threads also share:

• Process instructions

• Data

• Open files

• Signal handlers and signal dispositions

• Current working directory

• User and group IDs

Each thread has its own thread ID, set of registers (including the program counter and stack pointer), stack, errno, signal mask, and priority.

Basic thread functions

pthread_create function:

int pthread_create(pthread_t *tid, const pthread_attr_t *attr, void *(*func)(void *), void *arg);

tid: the thread ID, whose data type is pthread_t (often an unsigned integer). On successful creation of the thread, its ID is returned through the pointer tid.

attr: each thread has a number of attributes, such as its priority, its initial stack size, and whether it is a daemon thread. If a pthread_attr_t variable is specified through attr, it overrides the defaults. To accept the defaults, the attr argument is set to a null pointer.

func: when the thread is created, a function is specified for it to execute. The thread starts by calling this function and then terminates either explicitly (by calling pthread_exit) or implicitly (by letting the function return). The address of the function is specified as the func argument, and the function is called with a single pointer argument, arg. If multiple arguments are to be passed, they can be packed into a structure and the address of the structure passed as arg.

The function takes one argument, a generic pointer (void *), and returns a generic pointer (void *). This lets us pass one pointer to the thread and have the thread return one pointer.

pthread_create returns 0 if OK, or a nonzero value on error.

pthread_join function:

int pthread_join(pthread_t tid, void **status);

We can wait for a given thread to terminate by calling pthread_join. We must specify the tid of the thread that we wish to wait for. If the status pointer is non-null, the return value from the thread is stored in the location pointed to by status.
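The following is a minimal sketch of pthread_create and pthread_join working together, not code from the original notes. The names sum_args, sum_range, and sum_in_thread are hypothetical. It passes two values to the thread through one structure pointer and collects one pointer back through status.

```c
#include <pthread.h>
#include <stdlib.h>

/* Hypothetical argument bundle: several values passed through one pointer. */
struct sum_args {
    int first;
    int last;
};

/* Thread start function: sums first..last and returns a heap-allocated total. */
static void *sum_range(void *arg)
{
    const struct sum_args *args = arg;
    long *total = malloc(sizeof *total);

    if (total == NULL)
        return NULL;
    *total = 0;
    for (int i = args->first; i <= args->last; i++)
        *total += i;
    return total;               /* returning ends the thread implicitly */
}

/* Creates one thread, waits for it, and returns its result (-1 on failure). */
long sum_in_thread(int first, int last)
{
    struct sum_args args = { first, last };
    pthread_t tid;
    void *status = NULL;
    long result = -1;

    if (pthread_create(&tid, NULL, sum_range, &args) != 0)
        return -1;
    if (pthread_join(tid, &status) != 0)    /* args stays valid until here */
        return -1;
    if (status != NULL) {
        result = *(long *)status;
        free(status);
    }
    return result;
}
```

The result is allocated on the heap because the thread's own stack is gone by the time pthread_join returns. A caller would simply write something like long total = sum_in_thread(1, 100);.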

pthread_self function:

Each thread has an ID that identifies it within a given process. The thread ID is returned by pthread_create, and a thread can fetch its own ID by calling pthread_self:

pthread_t pthread_self(void);
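As a small illustration (a sketch with hypothetical names record_self and ids_match), the thread below stores the value it gets from pthread_self, and the creator compares it with the ID that pthread_create returned. The comparison uses pthread_equal, the portable way to compare two pthread_t values, since pthread_t is not guaranteed to be an integer type.

```c
#include <pthread.h>

static pthread_t seen_by_thread;    /* ID the thread obtained for itself */

/* Thread start function: records its own thread ID. */
static void *record_self(void *arg)
{
    (void)arg;
    seen_by_thread = pthread_self();
    return NULL;
}

/* Returns 1 if both IDs refer to the same thread, 0 if not, -1 on error. */
int ids_match(void)
{
    pthread_t tid;

    if (pthread_create(&tid, NULL, record_self, NULL) != 0)
        return -1;
    if (pthread_join(tid, NULL) != 0)   /* wait, so the stored ID is visible */
        return -1;
    return pthread_equal(tid, seen_by_thread) != 0;
}
```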

pthread_detach function:

A thread is either joinable (the default) or detached. When a joinable thread terminates, its thread ID and exit status are retained until another thread calls pthread_join. A detached thread is like a daemon process: when it terminates, all its resources are released and we cannot wait for it to terminate.

When one thread needs to know when another thread terminates, it is best to leave the thread joinable.

int pthread_detach(pthread_t tid);
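A minimal sketch of detaching a thread follows. The names background_task and start_detached are hypothetical, and the task body is left empty on purpose.

```c
#include <pthread.h>

/* Hypothetical background task: nobody needs its return value. */
static void *background_task(void *arg)
{
    (void)arg;
    return NULL;
}

/* Starts a thread and detaches it; returns 0 on success or an error value. */
int start_detached(void)
{
    pthread_t tid;
    int rc;

    rc = pthread_create(&tid, NULL, background_task, NULL);
    if (rc != 0)
        return rc;
    /* From here on the thread must not be joined; its resources are
       released automatically when it terminates. */
    return pthread_detach(tid);
}
```

A thread can also detach itself by calling pthread_detach(pthread_self()) at the start of its function.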

pthread_exit function:

One way for a thread to terminate is to call pthread_exit:

void pthread_exit(void *status);
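The sketch below (hypothetical names finish_early, worker, and join_exit_status) shows pthread_exit ending a thread from inside a nested call, with the status pointer picked up by pthread_join. The status must not point to an object on the exiting thread's stack, so a static variable is used here.

```c
#include <pthread.h>

static int exit_code = 42;      /* static, so it outlives the thread */

/* Terminates the calling thread only, not the whole process. */
static void finish_early(void)
{
    pthread_exit(&exit_code);
}

static void *worker(void *arg)
{
    (void)arg;
    finish_early();
    return NULL;                /* not reached */
}

/* Returns the integer the thread passed to pthread_exit, or -1 on error. */
int join_exit_status(void)
{
    pthread_t tid;
    void *status = NULL;

    if (pthread_create(&tid, NULL, worker, NULL) != 0)
        return -1;
    if (pthread_join(tid, &status) != 0)
        return -1;
    return status != NULL ? *(int *)status : -1;
}
```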

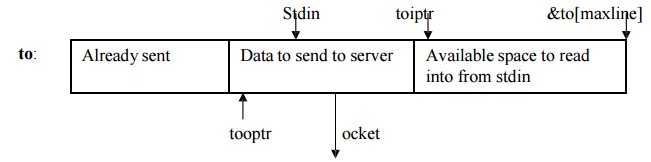

The str_cli function uses fgets and fputs to read from standard input and write to standard output while exchanging lines with the server. While doing so, fgets may be placing data from stdin into a buffer that already holds data waiting to be written. The function then blocks other processing while it waits to write the pending data, so if there is data to be read from the server at the client, that read is blocked. Similarly, if a line of input is available from the socket, we can block in the subsequent call to fputs if standard output is slower than the network. In such situations nonblocking methods are used. This requires an elaborate buffer management arrangement, in which pointers track the data that has already been sent, the data that is yet to be sent, and the available space in the read buffer, as shown in the figure.

The program comes to about 100 lines. This can be reduced by using threads. In the threaded version, the main function calls pthread_create to start a thread in which fgets is invoked. When pthread_create returns, the main thread goes on to invoke fputs.

Mutexes: Mutual Exclusion

Notice in the following Figure 1.a that when a thread terminates, the main loop decrements both nconn and nlefttoread. We could have placed these two decrements in the function do_get_read, letting each thread decrement these two counters immediately before the thread terminates. But this would be a subtle, yet significant, concurrent programming error.

threads/web01.c

    while (nlefttoread > 0) {
        while (nconn < maxnconn && nlefttoconn > 0) {
            /* find a file to read */
            for (i = 0; i < nfiles; i++)
                if (file[i].f_flags == 0)
                    break;
            if (i == nfiles)
                err_quit("nlefttoconn = %d but nothing found", nlefttoconn);

            file[i].f_flags = F_CONNECTING;
            Pthread_create(&tid, NULL, &do_get_read, &file[i]);
            file[i].f_tid = tid;
            nconn++;
            nlefttoconn--;
        }

        if ( (n = thr_join(0, &tid, (void **) &fptr)) != 0)
            errno = n, err_sys("thr_join error");

        nconn--;
        nlefttoread--;
        printf("thread id %d for %s done\n", tid, fptr->f_name);
    }

    exit(0);
    }

The problem with placing the code in the function that each thread executes is that these two variables are global, not thread-specific. If one thread is suspended in the middle of decrementing a variable, and another thread then executes and decrements the same variable, an error can result. For example, assume that the C compiler turns the decrement operator into three instructions: load from memory into a register, decrement the register, and store from the register into memory. Consider the following possible scenario:

Thread A is running and it loads the value of nconn (3) into a register.

The system switches threads from A to B. A's registers are saved, and B's registers are restored.

Thread B executes the three instructions corresponding to the C expression nconn--, storing the new value of 2.

Sometime later, the system switches threads from B to A. A's registers are restored and A continues where it left off, at the second machine instruction in the three-instruction sequence. The value of the register is decremented from 3 to 2, and the value of 2 is stored in nconn.

The end result is that nconn is 2 when it should be 1. This is wrong.
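The scenario above can be replayed deterministically in ordinary single-threaded code. This is only a simulation under the same three-instruction assumption: the variables reg_a and reg_b stand in for each thread's private register, and the function name lost_update_demo is hypothetical.

```c
/* Replays the interleaving step by step and returns the final nconn. */
int lost_update_demo(void)
{
    int nconn = 3;          /* the shared variable in memory */
    int reg_a, reg_b;       /* each thread's private register */

    reg_a = nconn;          /* A: load 3 from memory */
    /* system switches from A to B */
    reg_b = nconn;          /* B: load 3 from memory */
    reg_b = reg_b - 1;      /* B: decrement register to 2 */
    nconn = reg_b;          /* B: store 2 to memory */
    /* system switches from B back to A */
    reg_a = reg_a - 1;      /* A: decrement its stale 3 to 2 */
    nconn = reg_a;          /* A: store 2, overwriting B's update */

    return nconn;           /* 2, although two decrements should give 1 */
}
```

Thread A's store overwrites thread B's update because A computed its result from a value loaded before B ran.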

These types of concurrent programming errors are hard to find for numerous reasons. First, they occur rarely. Nevertheless, it is an error and it will fail (Murphy's Law). Second, the error is hard to duplicate since it depends on the nondeterministic timing of many events. Lastly, on some systems, the hardware instructions might be atomic; that is, there might be a hardware instruction to decrement an integer in memory (instead of the three-instruction sequence we assumed above) and the hardware cannot be interrupted during this instruction. But this is not guaranteed by all systems, so the code works on one system but not on another.

We call threads programming concurrent programming, or parallel programming, since multiple threads can be running concurrently (in parallel), accessing the same variables. While the error scenario we just discussed assumes a single-CPU system, the potential for error also exists if threads A and B are running at the same time on different CPUs on a multiprocessor system. With normal Unix programming, we do not encounter these concurrent programming problems because with fork, nothing besides descriptors is shared between the parent and child. We will, however, encounter this same type of problem when we discuss shared memory between processes.

We can easily demonstrate this problem with threads. Figure 2 is a simple program that creates two threads and then has each thread increment a global variable 5,000 times.

We exacerbate the potential for a problem by fetching the current value of counter, printing the new value, and then storing the new value. If we run this program, we have the output shown in Figure 1.b.

Figure 2. Two threads that increment a global variable incorrectly.

threads/example01.c

    #include "unpthread.h"

    #define NLOOP 5000

    int counter;    /* incremented by threads */

    void *doit(void *);

    int
    main(int argc, char **argv)
    {
        pthread_t tidA, tidB;

        Pthread_create(&tidA, NULL, &doit, NULL);
        Pthread_create(&tidB, NULL, &doit, NULL);

        /* wait for both threads to terminate */
        Pthread_join(tidA, NULL);
        Pthread_join(tidB, NULL);

        exit(0);
    }

    void *
    doit(void *vptr)
    {
        int i, val;

        /*
         * Each thread fetches, prints, and increments the counter NLOOP times.
         * The value of the counter should increase monotonically.
         */

        for (i = 0; i < NLOOP; i++) {
            val = counter;
            printf("%d: %d\n", pthread_self(), val + 1);
            counter = val + 1;
        }

        return (NULL);
    }

Notice the error the first time the system switches from thread 4 to thread 5: The value 518 is stored by each thread. This happens numerous times through the 10,000 lines of output.

The nondeterministic nature of this type of problem is also evident if we run the program a few times: Each time, the end result is different from the previous run of the program. Also, if we redirect the output to a disk file, sometimes the error does not occur since the program runs faster, providing fewer opportunities to switch between the threads. The greatest number of errors occurs when we run the program interactively, writing the output to the (slow) terminal, but saving the output in a file using the Unix script program (discussed in detail in Chapter 19 of APUE).

The problem we just discussed, multiple threads updating a shared variable, is the simplest problem. The solution is to protect the shared variable with a mutex (which stands for "mutual exclusion") and access the variable only when we hold the mutex. In terms of Pthreads, a mutex is a variable of type pthread_mutex_t. We lock and unlock a mutex using the following two functions:

#include <pthread.h>

int pthread_mutex_lock(pthread_mutex_t * mptr);

int pthread_mutex_unlock(pthread_mutex_t * mptr);

Both return: 0 if OK, positive Exxx value on error

If we try to lock a mutex that is already locked by some other thread, we are blocked until the mutex is unlocked.

If a mutex variable is statically allocated, we must initialize it to the constant PTHREAD_MUTEX_INITIALIZER. We will see in the next section that if we allocate a mutex in shared memory, we must initialize it at runtime by calling the pthread_mutex_init function.

Some systems (e.g., Solaris) define PTHREAD_MUTEX_INITIALIZER to be 0, so omitting this initialization is acceptable, since statically allocated variables are automatically initialized to 0. But there is no guarantee that this is acceptable, and other systems (e.g., Digital Unix) define the initializer to be nonzero.
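As a rough sketch of runtime initialization, the code below places a mutex inside a heap-allocated structure and initializes it with pthread_mutex_init. This is an illustration only: the memory here is ordinary malloc memory rather than memory shared between processes, default attributes are assumed (a null second argument), and the names shared_counter, counter_create, counter_increment, and counter_destroy are hypothetical.

```c
#include <pthread.h>
#include <stdlib.h>

/* Hypothetical structure holding a value and the mutex that protects it. */
struct shared_counter {
    pthread_mutex_t lock;
    long value;
};

/* Allocates a counter and initializes its mutex at runtime. */
struct shared_counter *counter_create(void)
{
    struct shared_counter *c = malloc(sizeof *c);

    if (c == NULL)
        return NULL;
    if (pthread_mutex_init(&c->lock, NULL) != 0) {  /* NULL: default attributes */
        free(c);
        return NULL;
    }
    c->value = 0;
    return c;
}

/* Increments the value while holding the mutex; returns the new value. */
long counter_increment(struct shared_counter *c)
{
    long v;

    pthread_mutex_lock(&c->lock);
    v = ++c->value;
    pthread_mutex_unlock(&c->lock);
    return v;
}

/* Destroys the mutex before releasing the memory that contains it. */
void counter_destroy(struct shared_counter *c)
{
    pthread_mutex_destroy(&c->lock);
    free(c);
}
```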

Figure 3 is a corrected version of Figure 2 that uses a single mutex to lock the counter between the two threads.

Figure 3. Corrected version of Figure 2 using a mutex to protect the shared variable.

threads/example02.c

    #include "unpthread.h"

    #define NLOOP 5000

    int counter;    /* incremented by threads */

    pthread_mutex_t counter_mutex = PTHREAD_MUTEX_INITIALIZER;

    void *doit(void *);

    int
    main(int argc, char **argv)
    {
        pthread_t tidA, tidB;

        Pthread_create(&tidA, NULL, &doit, NULL);
        Pthread_create(&tidB, NULL, &doit, NULL);

        /* wait for both threads to terminate */
        Pthread_join(tidA, NULL);
        Pthread_join(tidB, NULL);

        exit(0);
    }

    void *
    doit(void *vptr)
    {
        int i, val;

        /*
         * Each thread fetches, prints, and increments the counter NLOOP times.
         * The value of the counter should increase monotonically.
         */

        for (i = 0; i < NLOOP; i++) {
            Pthread_mutex_lock(&counter_mutex);

            val = counter;
            printf("%d: %d\n", pthread_self(), val + 1);
            counter = val + 1;

            Pthread_mutex_unlock(&counter_mutex);
        }

        return (NULL);
    }

We declare a mutex named counter_mutex, and this mutex must be locked by the thread before the thread manipulates the counter variable. When we run this program, the output is always correct: The value is incremented monotonically and the final value printed is always 10,000.
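One way to check the final value programmatically, rather than by reading 10,000 lines of output, is sketched below. It is a variation on Figure 3 that uses the plain pthread functions instead of the book's wrapper functions, drops the printf, and returns the final counter from a hypothetical function named run_two_threads.

```c
#include <pthread.h>

#define NLOOP 5000

static int counter;     /* shared by both threads */
static pthread_mutex_t counter_mutex = PTHREAD_MUTEX_INITIALIZER;

/* Each thread increments the counter NLOOP times under the mutex. */
static void *doit(void *arg)
{
    (void)arg;
    for (int i = 0; i < NLOOP; i++) {
        pthread_mutex_lock(&counter_mutex);
        counter = counter + 1;  /* fetch and store both happen under the lock */
        pthread_mutex_unlock(&counter_mutex);
    }
    return NULL;
}

/* Runs two incrementing threads; returns the final counter or -1 on error. */
int run_two_threads(void)
{
    pthread_t tid_a, tid_b;

    counter = 0;
    if (pthread_create(&tid_a, NULL, doit, NULL) != 0)
        return -1;
    if (pthread_create(&tid_b, NULL, doit, NULL) != 0) {
        pthread_join(tid_a, NULL);
        return -1;
    }
    pthread_join(tid_a, NULL);
    pthread_join(tid_b, NULL);
    return counter;
}
```

With the lock in place, the returned value should equal 2 * NLOOP on every run. Removing the lock and unlock calls makes the result depend on thread scheduling.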

How much overhead is involved with mutex locking? The programs in Figures 2 and 3 were changed to loop 50,000 times and were timed while the output was directed to /dev/null. The difference in CPU time from the incorrect version with no mutex to the correct version that used a mutex was 10%. This tells us that mutex locking is not a large overhead.
